2, since these quantities are related to the training error. Instead, we wish to choose a model with a low test error. As is evident here, and as we show in Chapter 2, the training error can be a poor estimate of the test error. Therefore, RSS and *R*2 are not suitable for selecting the best model among a collection of models with diferent numbers of predictors.

In order to select the best model with respect to test error, we need to estimate this test error. There are two common approaches:

- 1. We can indirectly estimate test error by making an *adjustment* to the training error to account for the bias due to overftting.

- 2. We can *directly* estimate the test error, using either a validation set approach or a cross-validation approach, as discussed in Chapter 5.

We consider both of these approaches below.

3Like forward stepwise selection, backward stepwise selection performs a *guided* search over model space, and so efectively considers substantially more than 1 + *p*(*p* + 1)*/*2 models.

6.1 Subset Selection 233

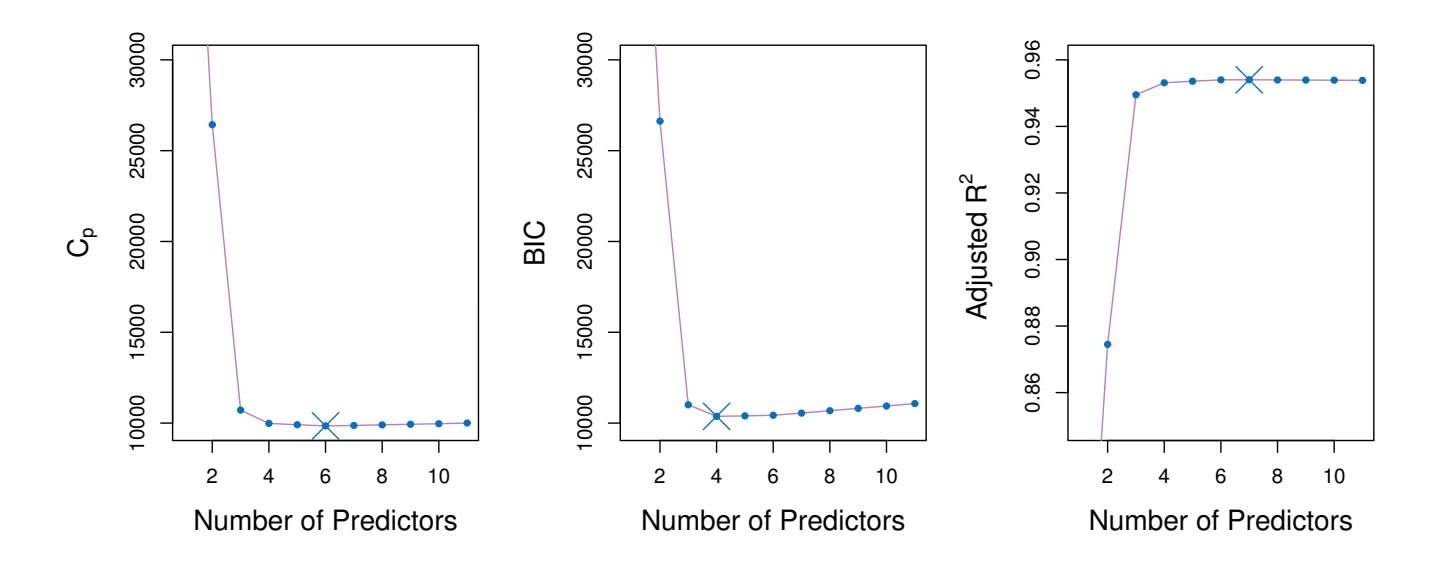

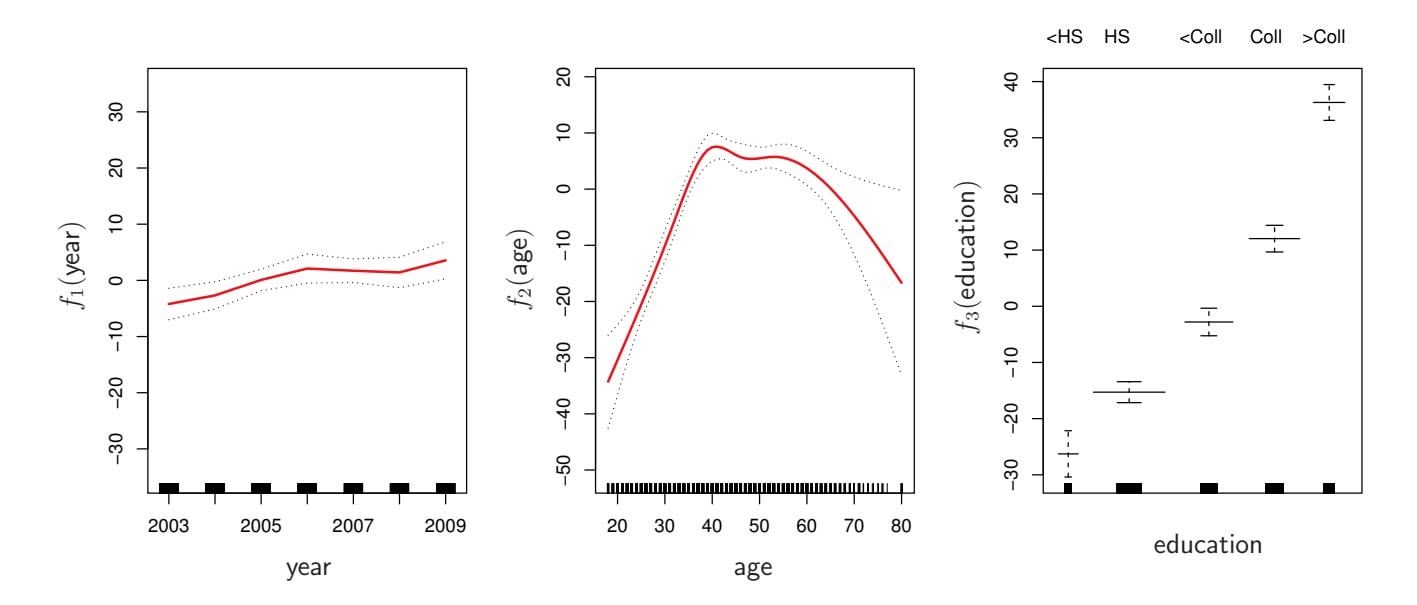

**FIGURE 6.2.** *Cp, BIC, and adjusted R*2 *are shown for the best models of each size for the* Credit *data set (the lower frontier in Figure 6.1). Cp and BIC are estimates of test MSE. In the middle plot we see that the BIC estimate of test error shows an increase after four variables are selected. The other two plots are rather fat after four variables are included.*

# *Cp*, AIC, BIC, and Adjusted *R*2

We show in Chapter 2 that the training set $MSE$ is generally an underestimate of the test $MSE$ . (Recall that $MSE = RSS/n$ .) This is because when we fit a model to the training data using least squares, we specifically estimate the regression coefficients such that the training $RSS$ (but not the test $RSS$ ) is as small as possible. In particular, the training error will decrease as more variables are included in the model, but the test error may not. Therefore, training set $RSS$ and training set $R^2$ cannot be used to select from among a set of models with different numbers of variables.

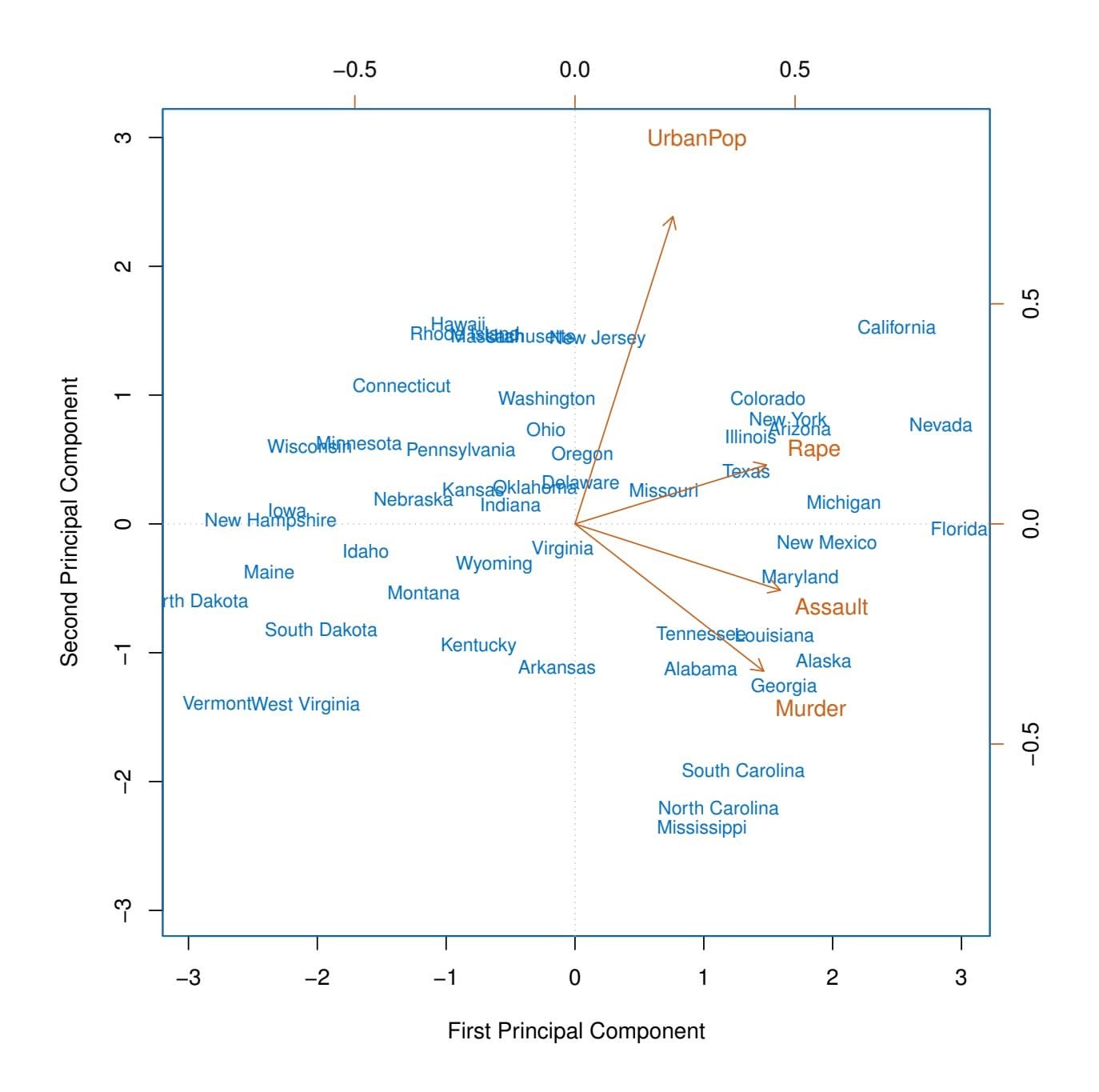

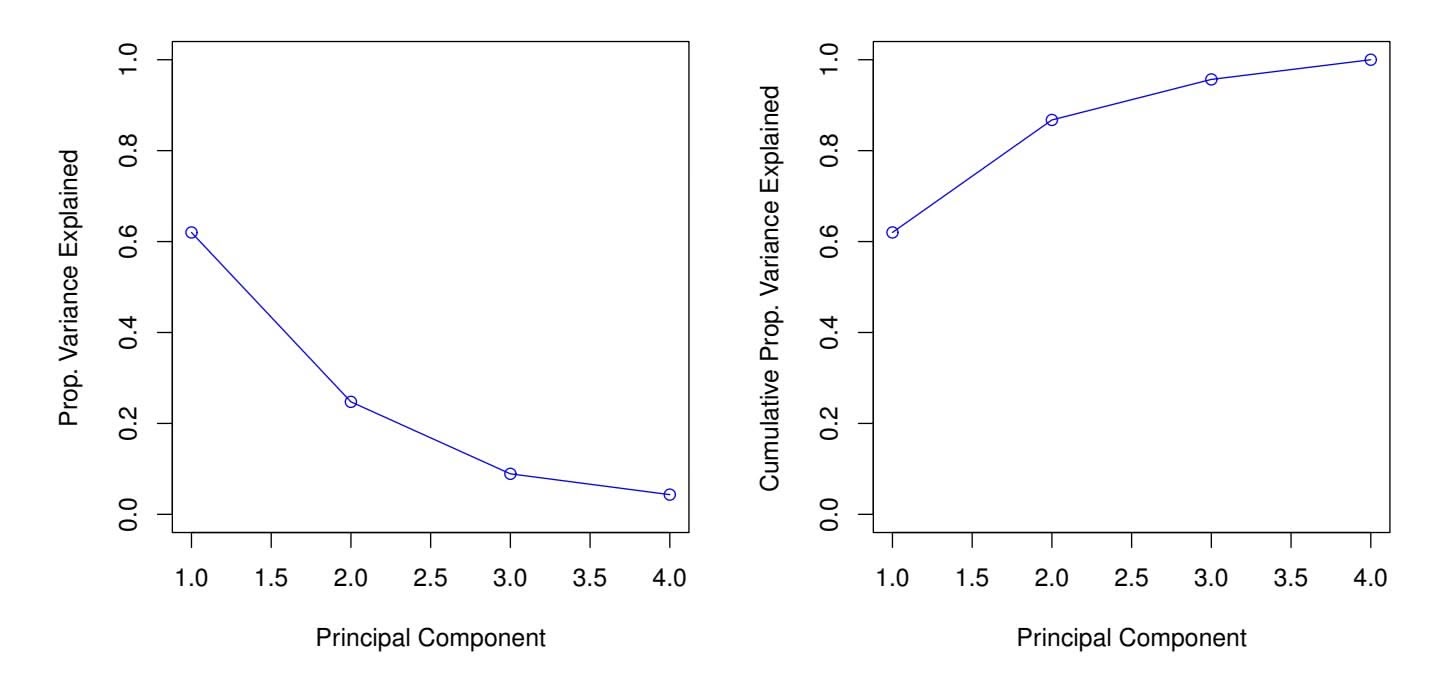

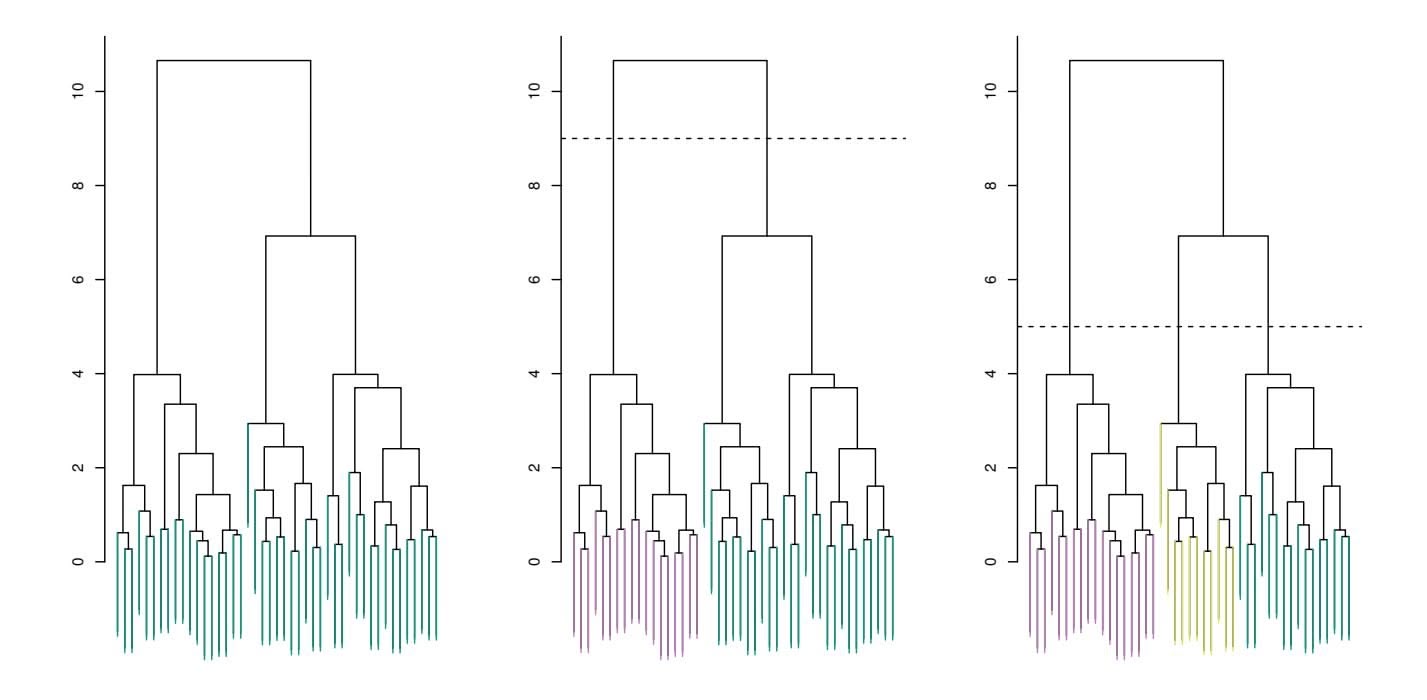

However, a number of techniques for *adjusting* the training error for the model size are available. These approaches can be used to select among a set of models with diferent numbers of variables. We now consider four such approaches: *Cp*, *Akaike information criterion* (AIC), *Bayesian information Cp criterion* (BIC), and *adjusted R*2. Figure 6.2 displays *Cp*, BIC, and adjusted *R*2 for the best model of each size produced by best subset selection on the Credit data set.

For a ftted least squares model containing *d* predictors, the *Cp* estimate of test MSE is computed using the equation

Akaike information criterion Bayesian information criterion adjusted *R*2

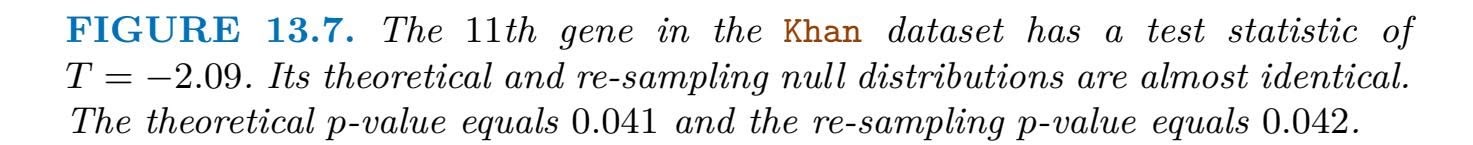

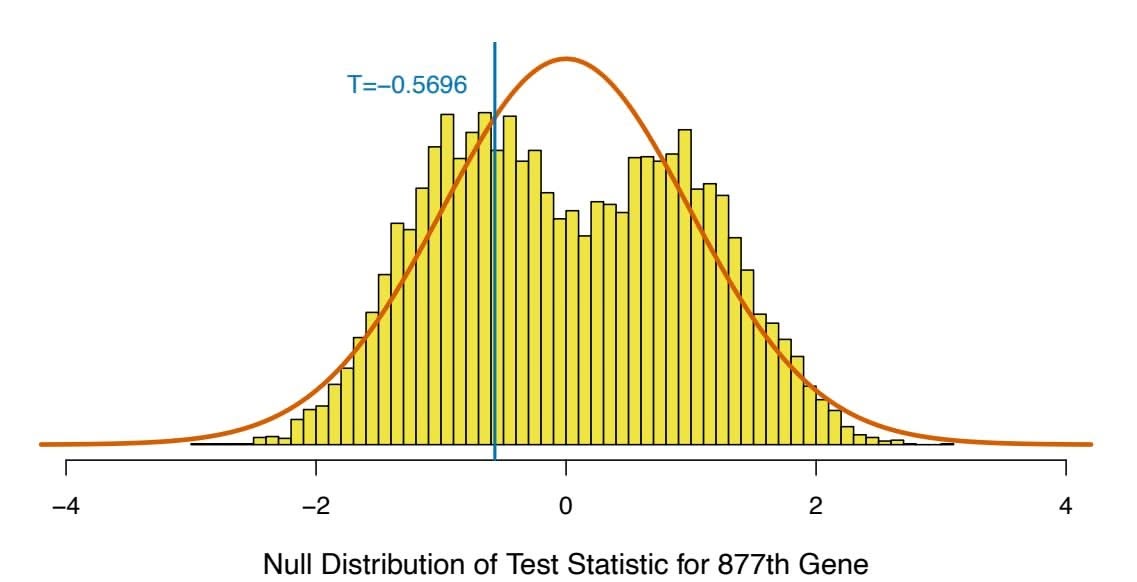

$$

C_p = \frac{1}{n} \left( \text{RSS} + 2d\hat{\sigma}^2 \right) \quad (6.2)

$$

where σˆ2 is an estimate of the variance of the error ϵ associated with each response measurement in (6.1).4 Typically σˆ2 is estimated using the full

4Mallow's $C_p$ is sometimes defined as $C'_p = RSS/\hat{\sigma}^2 + 2d - n$ . This is equivalent to the definition given above in the sense that $C_p = \frac{1}{n} \hat{\sigma}^2(C'_p + n)$ , and so the model with smallest $C_p$ also has smallest $C'_p$ .

# 234 6. Linear Model Selection and Regularization

model containing all predictors. Essentially, the $C_p$ statistic adds a penalty of $2d\hat{\sigma}^2$ to the training RSS in order to adjust for the fact that the training error tends to underestimate the test error. Clearly, the penalty increases as the number of predictors in the model increases; this is intended to adjust for the corresponding decrease in training RSS. Though it is beyond the scope of this book, one can show that if $\hat{\sigma}^2$ is an unbiased estimate of $\sigma^2$ in (6.2), then $C_p$ is an unbiased estimate of test MSE. As a consequence, the $C_p$ statistic tends to take on a small value for models with a low test error, so when determining which of a set of models is best, we choose the model with the lowest $C_p$ value. In Figure 6.2, $C_p$ selects the six-variable model containing the predictors income, limit, rating, cards, age and student.

The AIC criterion is defned for a large class of models ft by maximum likelihood. In the case of the model (6.1) with Gaussian errors, maximum likelihood and least squares are the same thing. In this case AIC is given by

$$

AIC = \frac{1}{n} (RSS + 2d\hat{\sigma}^2),

$$

where, for simplicity, we have omitted irrelevant constants.5 Hence for least squares models, *Cp* and AIC are proportional to each other, and so only *Cp* is displayed in Figure 6.2.

BIC is derived from a Bayesian point of view, but ends up looking similar to $C_p$ (and AIC) as well. For the least squares model with $d$ predictors, the BIC is, up to irrelevant constants, given by

$$

\text{BIC} = \frac{1}{n} \left( \text{RSS} + \log(n) d\hat{\sigma}^2 \right). \quad (6.3)

$$

Like $C_p$ , the BIC will tend to take on a small value for a model with a low test error, and so generally we select the model that has the lowest BIC value. Notice that BIC replaces the $2d\hat{\sigma}^2$ used by $C_p$ with a $\log(n)d\hat{\sigma}^2$ term, where $n$ is the number of observations. Since $\log n > 2$ for any $n > 7$ , the BIC statistic generally places a heavier penalty on models with many variables, and hence results in the selection of smaller models than $C_p$ . In Figure 6.2, we see that this is indeed the case for the Credit data set; BIC chooses a model that contains only the four predictors income, limit, cards, and student. In this case the curves are very flat and so there does not appear to be much difference in accuracy between the four-variable and six-variable models.

The adjusted *R*2 statistic is another popular approach for selecting among a set of models that contain diferent numbers of variables. Recall from

5There are two formulas for AIC for least squares regression. The formula that we provide here requires an expression for σ2, which we obtain using the full model containing all predictors. The second formula is appropriate when σ2 is unknown and we do not want to explicitly estimate it; that formula has a log(RSS) term instead of an RSS term. Detailed derivations of these two formulas are outside of the scope of this book.

6.1 Subset Selection 235

Recall from Chapter 3 that the usual $R^2$ is defined as $1 - RSS/TSS$ , where $TSS = \sum(y_i - \bar{y})^2$ is the *total sum of squares* for the response. Since RSS always decreases as more variables are added to the model, the $R^2$ always increases as more variables are added. For a least squares model with $d$ variables, the adjusted $R^2$ statistic is calculated as

$$

\text{Adjusted } R^2 = 1 - \frac{\text{RSS}/(n - d - 1)}{\text{TSS}/(n - 1)} \quad (6.4)

$$

Unlike $C_p$ , AIC, and BIC, for which a *small* value indicates a model with a low test error, a *large* value of adjusted $R^2$ indicates a model with a small test error. Maximizing the adjusted $R^2$ is equivalent to minimizing $\frac{RSS}{n-d-1}$ . While RSS always decreases as the number of variables in the model increases, $\frac{RSS}{n-d-1}$ may increase or decrease, due to the presence of *d* in the denominator.The intuition behind the adjusted *R*2 is that once all of the correct variables have been included in the model, adding additional *noise* variables will lead to only a very small decrease in RSS. Since adding noise variables leads to an increase in *d*, such variables will lead to an increase in $\frac{RSS}{n-d-1}$ , and consequently a decrease in the adjusted *R*2. Therefore, in theory, the model with the largest adjusted *R*2 will have only correct variables and no noise variables. Unlike the *R*2 statistic, the adjusted *R*2 statistic *pays a price* for the inclusion of unnecessary variables in the model. Figure 6.2 displays the adjusted *R*2 for the Credit data set. Using this statistic results in the selection of a model that contains seven variables, adding one to the model selected by *C**p* and AIC.

$C_p$ , AIC, and BIC all have rigorous theoretical justifications that are beyond the scope of this book. These justifications rely on asymptotic arguments (scenarios where the sample size $n$ is very large). Despite its popularity, and even though it is quite intuitive, the adjusted $R^2$ is not as well motivated in statistical theory as AIC, BIC, and $C_p$ . All of these measures are simple to use and compute. Here we have presented their formulas in the case of a linear model fit using least squares; however, AIC and BIC can also be defined for more general types of models.

### Validation and Cross-Validation

As an alternative to the approaches just discussed, we can directly estimate the test error using the validation set and cross-validation methods discussed in Chapter 5. We can compute the validation set error or the cross-validation error for each model under consideration, and then select the model for which the resulting estimated test error is smallest. This procedure has an advantage relative to AIC, BIC, $C_p$ , and adjusted $R^2$ , in that it provides a direct estimate of the test error, and makes fewer assumptions about the true underlying model. It can also be used in a wider range of model selection tasks, even in cases where it is hard to pinpoint the model

236 6. Linear Model Selection and Regularization

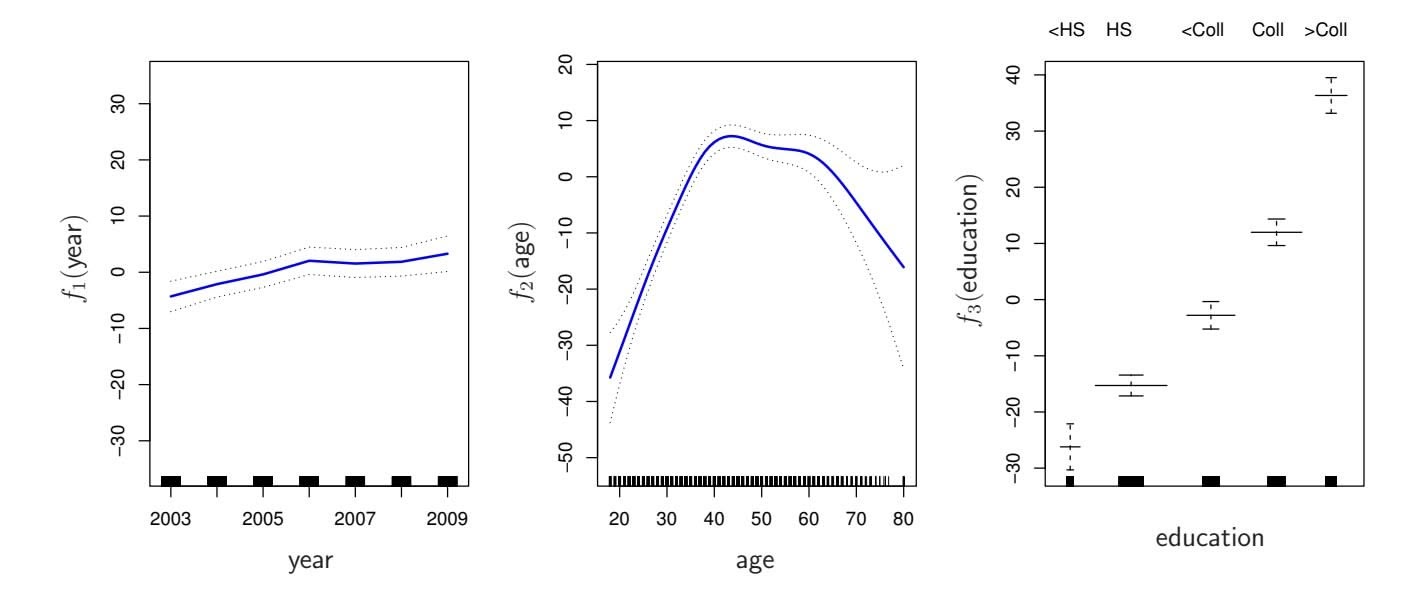

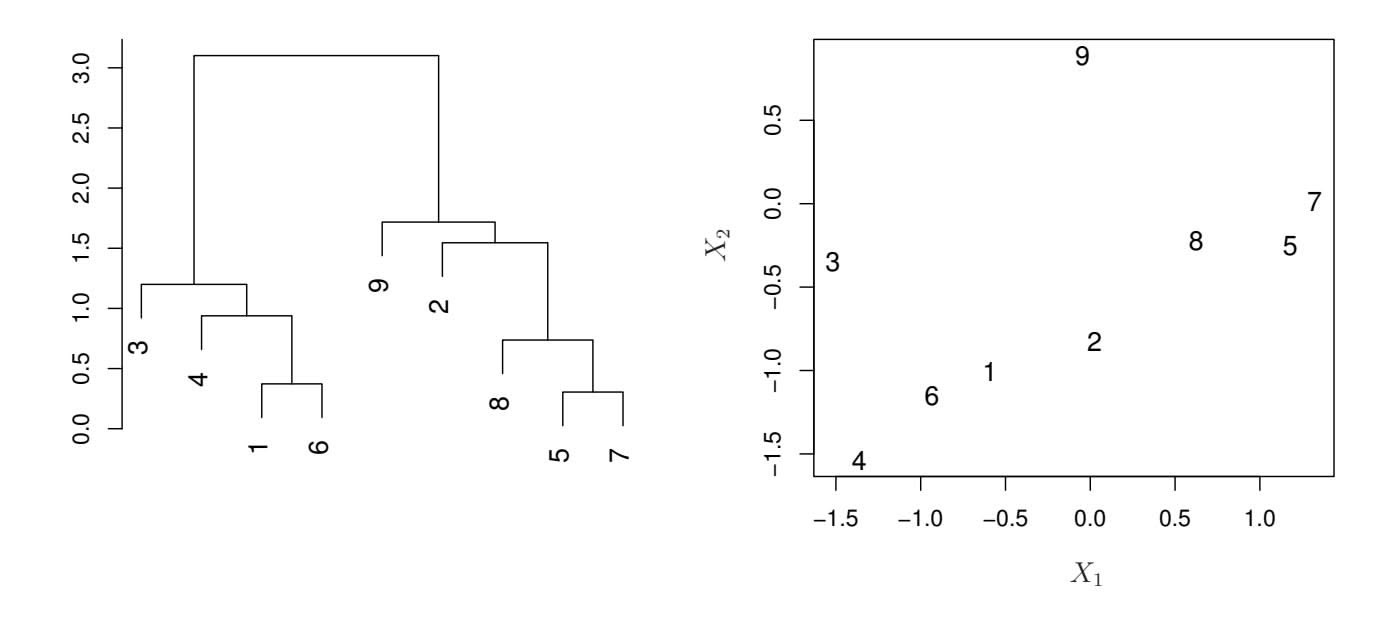

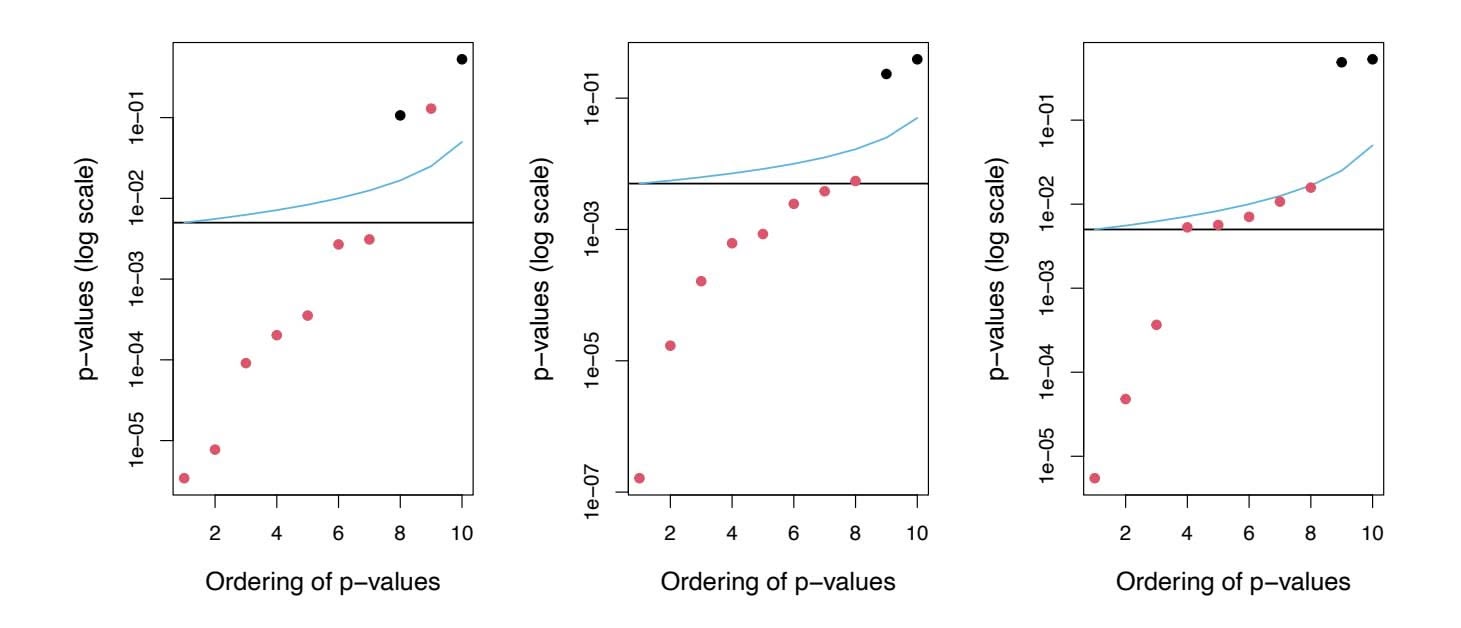

**FIGURE 6.3.** *For the* Credit *data set, three quantities are displayed for the best model containing d predictors, for d ranging from* 1 *to* 11*. The overall* best *model, based on each of these quantities, is shown as a blue cross.* Left: *Square root of BIC.* Center: *Validation set errors.* Right: *Cross-validation errors.*

degrees of freedom (e.g. the number of predictors in the model) or hard to estimate the error variance σ2. Note that when cross-validation is used, the sequence of models *Mk* in Algorithms 6.1–6.3 is determined separately for each training fold, and the validation errors are averaged over all folds for each model size *k*. This means, for example with best-subset regression, that *Mk*, the best subset of size *k*, can difer across the folds. Once the best size *k* is chosen, we fnd the best model of that size on the full data set.

In the past, performing cross-validation was computationally prohibitive for many problems with large *p* and/or large *n*, and so AIC, BIC, *Cp*, and adjusted *R*2 were more attractive approaches for choosing among a set of models. However, nowadays with fast computers, the computations required to perform cross-validation are hardly ever an issue. Thus, crossvalidation is a very attractive approach for selecting from among a number of models under consideration.

Figure 6.3 displays, as a function of *d*, the BIC, validation set errors, and cross-validation errors on the Credit data, for the best *d*-variable model. The validation errors were calculated by randomly selecting three-quarters of the observations as the training set, and the remainder as the validation set. The cross-validation errors were computed using *k* = 10 folds. In this case, the validation and cross-validation methods both result in a six-variable model. However, all three approaches suggest that the four-, fve-, and six-variable models are roughly equivalent in terms of their test errors.

In fact, the estimated test error curves displayed in the center and righthand panels of Figure 6.3 are quite fat. While a three-variable model clearly has lower estimated test error than a two-variable model, the estimated test errors of the 3- to 11-variable models are quite similar. Furthermore, if we 6.2 Shrinkage Methods 237

repeated the validation set approach using a diferent split of the data into a training set and a validation set, or if we repeated cross-validation using a diferent set of cross-validation folds, then the precise model with the lowest estimated test error would surely change. In this setting, we can select a model using the *one-standard-error rule*. We frst calculate the onestandard error of the estimated test MSE for each model size, and then select the smallest model for which the estimated test error is within one standard error of the lowest point on the curve. The rationale here is that if a set of models appear to be more or less equally good, then we might as well choose the simplest model—that is, the model with the smallest number of predictors. In this case, applying the one-standard-error rule to the validation set or cross-validation approach leads to selection of the three-variable model.

standarderror rule

# 6.2 Shrinkage Methods

The subset selection methods described in Section 6.1 involve using least squares to ft a linear model that contains a subset of the predictors. As an alternative, we can ft a model containing all *p* predictors using a technique that *constrains* or *regularizes* the coeffcient estimates, or equivalently, that *shrinks* the coeffcient estimates towards zero. It may not be immediately obvious why such a constraint should improve the ft, but it turns out that shrinking the coeffcient estimates can signifcantly reduce their variance. The two best-known techniques for shrinking the regression coeffcients towards zero are *ridge regression* and the *lasso*.

# *6.2.1 Ridge Regression*

Recall from Chapter 3 that the least squares fitting procedure estimates $\beta_0, \beta_1, \dots, \beta_p$ using the values that minimize

$$

RSS = \sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2.

$$

*Ridge regression* is very similar to least squares, except that the coeffcients ridge regression are estimated by minimizing a slightly diferent quantity. In particular, the ridge regression coeffcient estimates βˆ*R* are the values that minimize

ridge regression

$$

\sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2 + \lambda \sum_{j=1}^{p} \beta_j^2 = \text{RSS} + \lambda \sum_{j=1}^{p} \beta_j^2 \quad (6.5)

$$

where λ ≥ 0 is a *tuning parameter*, to be determined separately. Equa- tuning

parameter

238 6. Linear Model Selection and Regularization

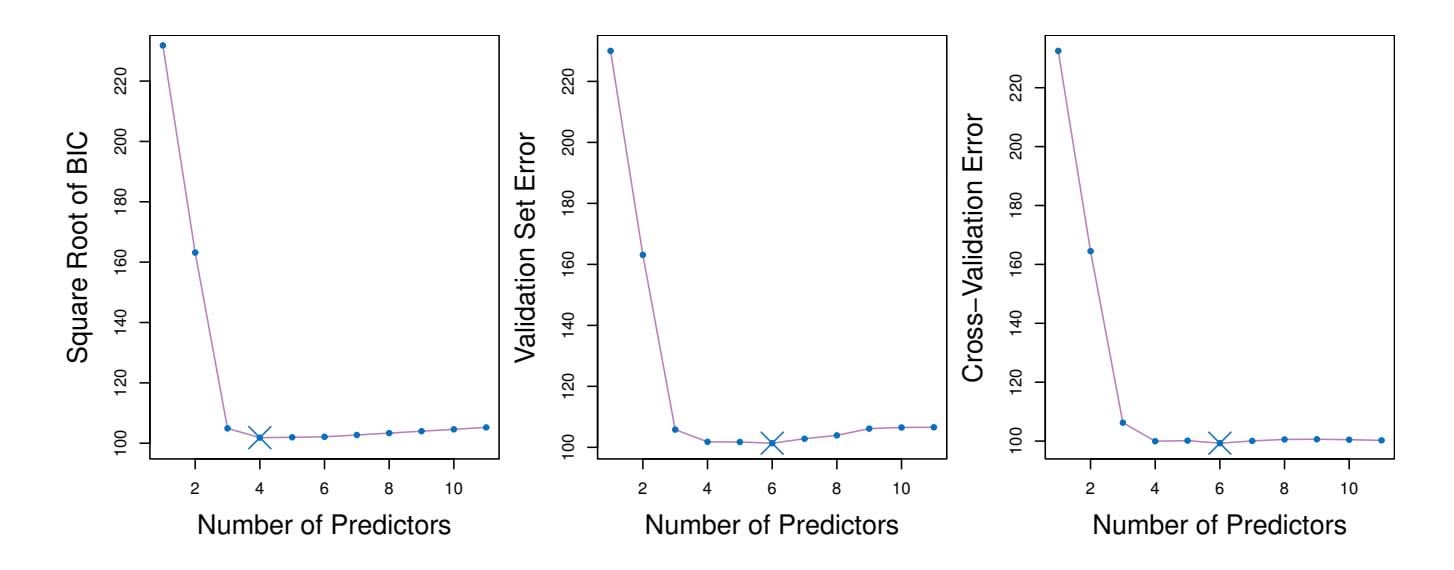

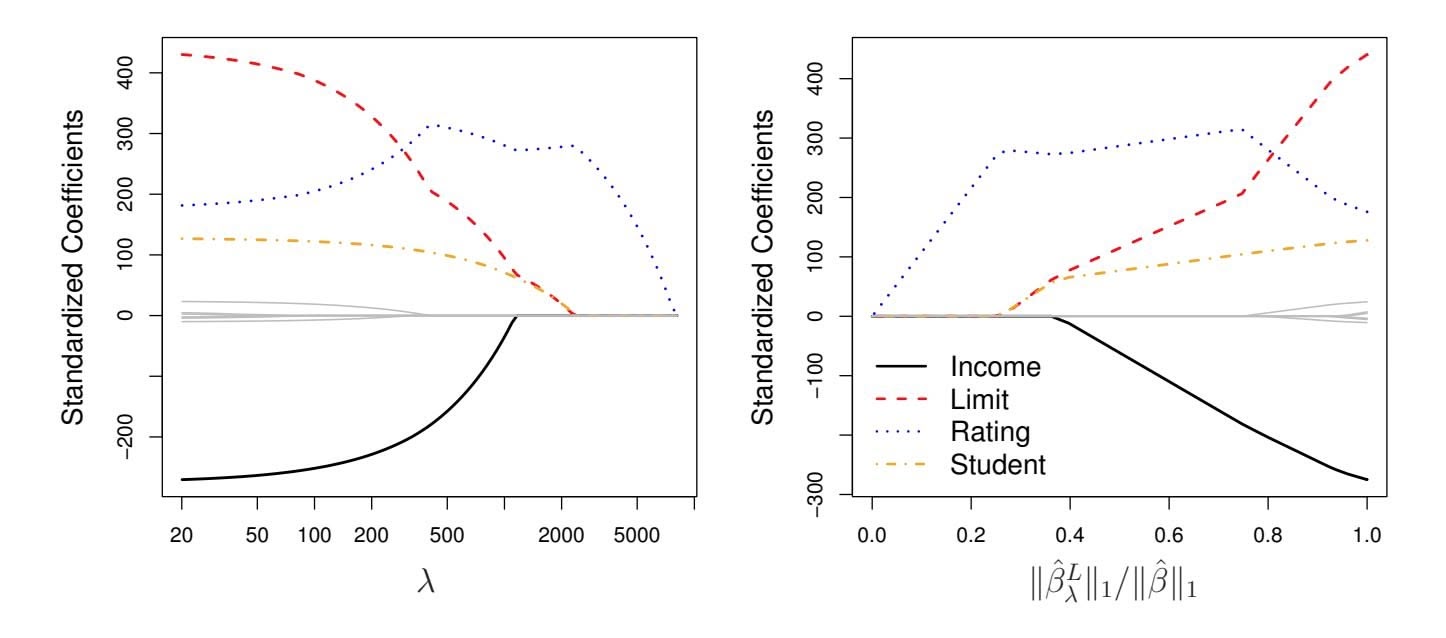

**FIGURE 6.4.** *The standardized ridge regression coefficients are displayed for the* Credit *data set, as a function of* $\lambda$ *and* $\|\hat{\beta}_{\lambda}^{R}\|_2 / \|\hat{\beta}\|_2$ .tion 6.5 trades off two different criteria. As with least squares, ridge regression seeks coefficient estimates that fit the data well, by making the RSS small. However, the second term, $\lambda \sum_{j} \beta_{j}^{2}$ , called a *shrinkage penalty*, is small when $\beta_{1}, \dots, \beta_{p}$ are close to zero, and so it has the effect of *shrinking* the estimates of $\beta_{j}$ towards zero. The tuning parameter $\lambda$ serves to control the relative impact of these two terms on the regression coefficient estimates. When $\lambda = 0$ , the penalty term has no effect, and ridge regression will produce the least squares estimates. However, as $\lambda \to \infty$ , the impact of the shrinkage penalty grows, and the ridge regression coefficient estimates will approach zero. Unlike least squares, which generates only one set of coefficient estimates, ridge regression will produce a different set of coefficient estimates, $\hat{\beta}_{\lambda}^{R}$ , for each value of $\lambda$ . Selecting a good value for $\lambda$ is critical; we defer this discussion to Section 6.2.3, where we use cross-validation.

Note that in (6.5), the shrinkage penalty is applied to $\beta_1, \dots, \beta_p$ , but not to the intercept $\beta_0$ . We want to shrink the estimated association of each variable with the response; however, we do not want to shrink the intercept, which is simply a measure of the mean value of the response when $x_{i1} = x_{i2} = \dots = x_{ip} = 0$ . If we assume that the variables—that is, the columns of the data matrix $\mathbf{X}$ —have been centered to have mean zero before ridge regression is performed, then the estimated intercept will take the form $\hat{\beta}_0 = \bar{y} = \sum_{i=1}^n y_i/n$ .

### An Application to the Credit Data

In Figure 6.4, the ridge regression coefficient estimates for the Credit data set are displayed. In the left-hand panel, each curve corresponds to the ridge regression coefficient estimate for one of the ten variables, plotted as a function of $\lambda$ . For example, the black solid line represents the ridge regression estimate for the income coefficient, as $\lambda$ is varied. At the extreme

6.2 Shrinkage Methods 239

left-hand side of the plot, $λ$ is essentially zero, and so the corresponding ridge coefficient estimates are the same as the usual least squares estimates. But as $λ$ increases, the ridge coefficient estimates shrink towards zero. When $λ$ is extremely large, then all of the ridge coefficient estimates are basically zero; this corresponds to the *null model* that contains no predictors. In this plot, the income, limit, rating, and student variables are displayed in distinct colors, since these variables tend to have by far the largest coefficient estimates. While the ridge coefficient estimates tend to decrease in aggregate as $λ$ increases, individual coefficients, such as rating and income, may occasionally increase as $λ$ increases.

The right-hand panel of Figure 6.4 displays the same ridge coefficient estimates as the left-hand panel, but instead of displaying $\lambda$ on the *x*-axis, we now display $||\hat{\beta}_\lambda^R||_2 / ||\hat{\beta}||_2$ , where $\hat{\beta}$ denotes the vector of least squares coefficient estimates. The notation $||\beta||_2$ denotes the $\ell_2$ *norm* (pronounced “ell 2”) of a vector, and is defined as $||\beta||_2 = \sqrt{\sum_{j=1}^p \beta_j^2}$ . It measures the distance of $\beta$ from zero. As $\lambda$ increases, the $\ell_2$ norm of $\hat{\beta}_\lambda^R$ will *always* decrease, and so will $||\hat{\beta}_\lambda^R||_2 / ||\hat{\beta}||_2$ . The latter quantity ranges from 1 (when $\lambda = 0$ , in which case the ridge regression coefficient estimate is the same as the least squares estimate, and so their $\ell_2$ norms are the same) to 0 (when $\lambda = \infty$ , in which case the ridge regression coefficient estimate is a vector of zeros, with $\ell_2$ norm equal to zero). Therefore, we can think of the *x*-axis in the right-hand panel of Figure 6.4 as the amount that the ridge regression coefficient estimates have been shrunken towards zero; a small value indicates that they have been shrunken very close to zero.

The standard least squares coefficient estimates discussed in Chapter 3 are *scale equivariant*: multiplying $X_j$ by a constant $c$ simply leads to a scaling of the least squares coefficient estimates by a factor of $1/c$ . In other words, regardless of how the *j*th predictor is scaled, $X_j \hat{\beta}_j$ will remain the same. In contrast, the ridge regression coefficient estimates can change *substantially* when multiplying a given predictor by a constant. For instance, consider the income variable, which is measured in dollars. One could reasonably have measured income in thousands of dollars, which would result in a reduction in the observed values of income by a factor of 1,000. Now due to the sum of squared coefficients term in the ridge regression formulation (6.5), such a change in scale will not simply cause the ridge regression coefficient estimate for income to change by a factor of 1,000. In other words, $X_j \hat{\beta}_{j, \lambda}^R$ will depend not only on the value of $\lambda$ , but also on the scaling of the *j*th predictor. In fact, the value of $X_j \hat{\beta}_{j, \lambda}^R$ may even depend on the scaling of the *other* predictors! Therefore, it is best to apply ridge regression after *standardizing the predictors*, using the formula

$$

\tilde{x}_{ij} = \frac{x_{ij}}{\sqrt{\frac{1}{n} \sum_{i=1}^{n} (x_{ij} - \overline{x}_j)^2}}, \quad (6.6)

$$

240 6. Linear Model Selection and Regularization

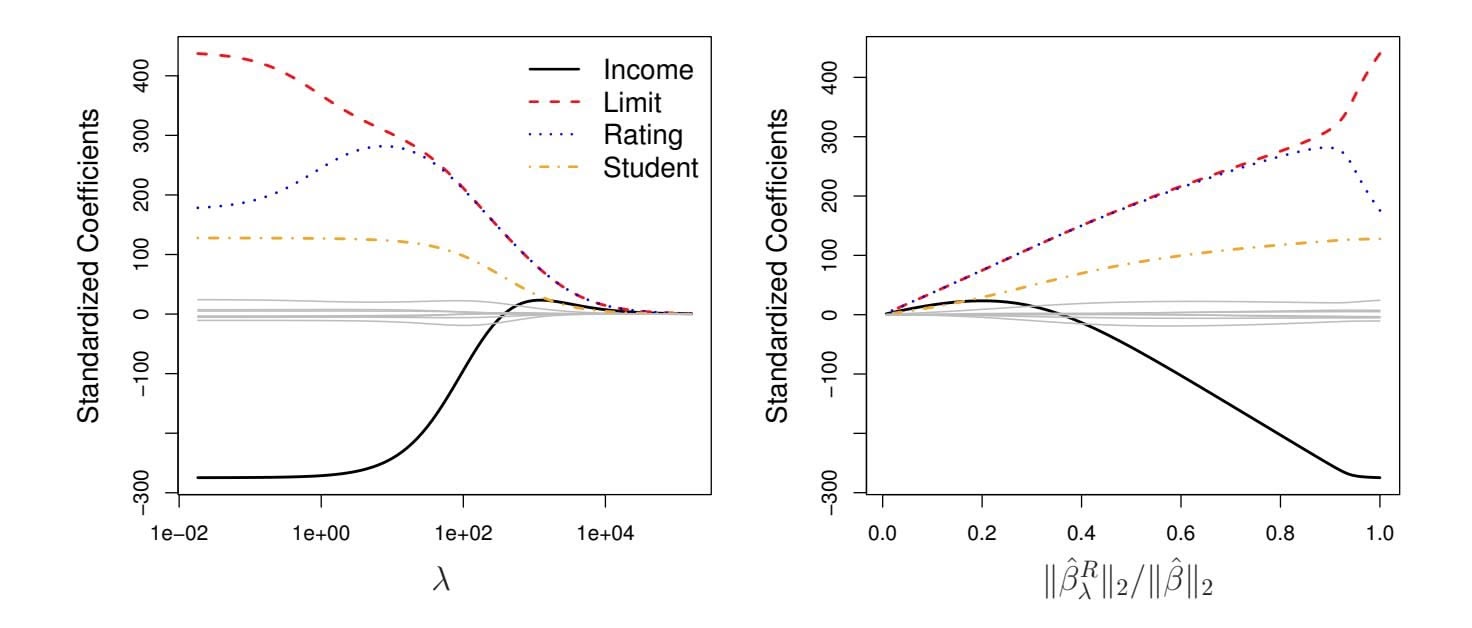

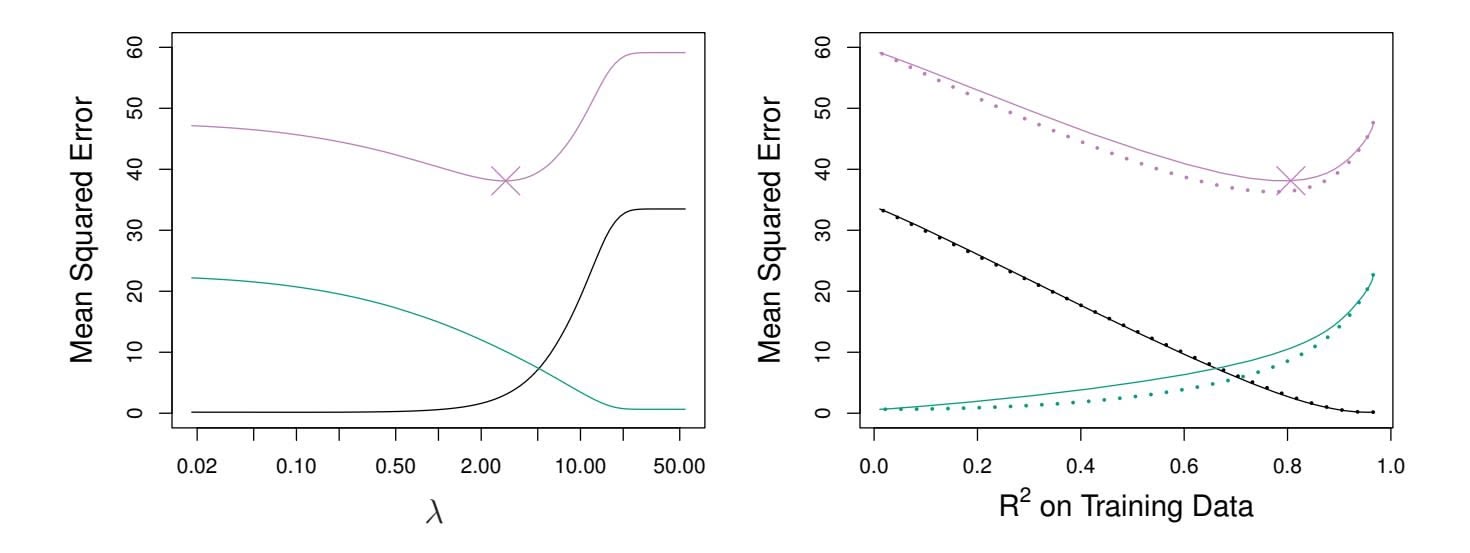

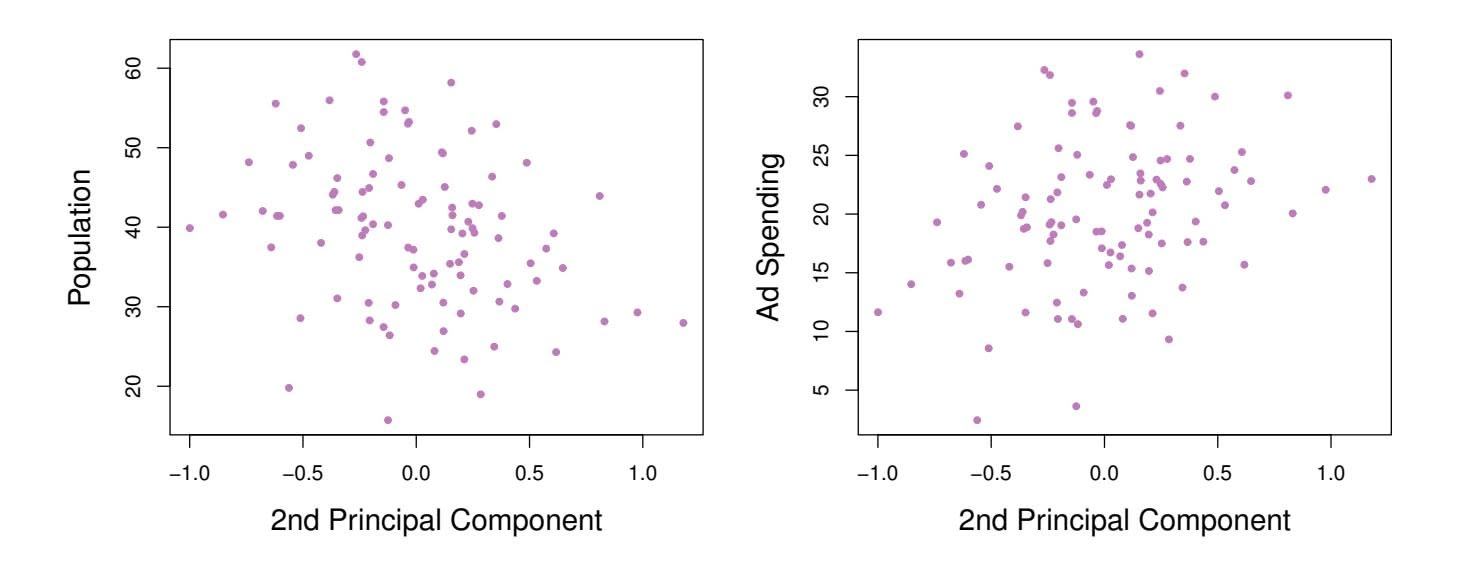

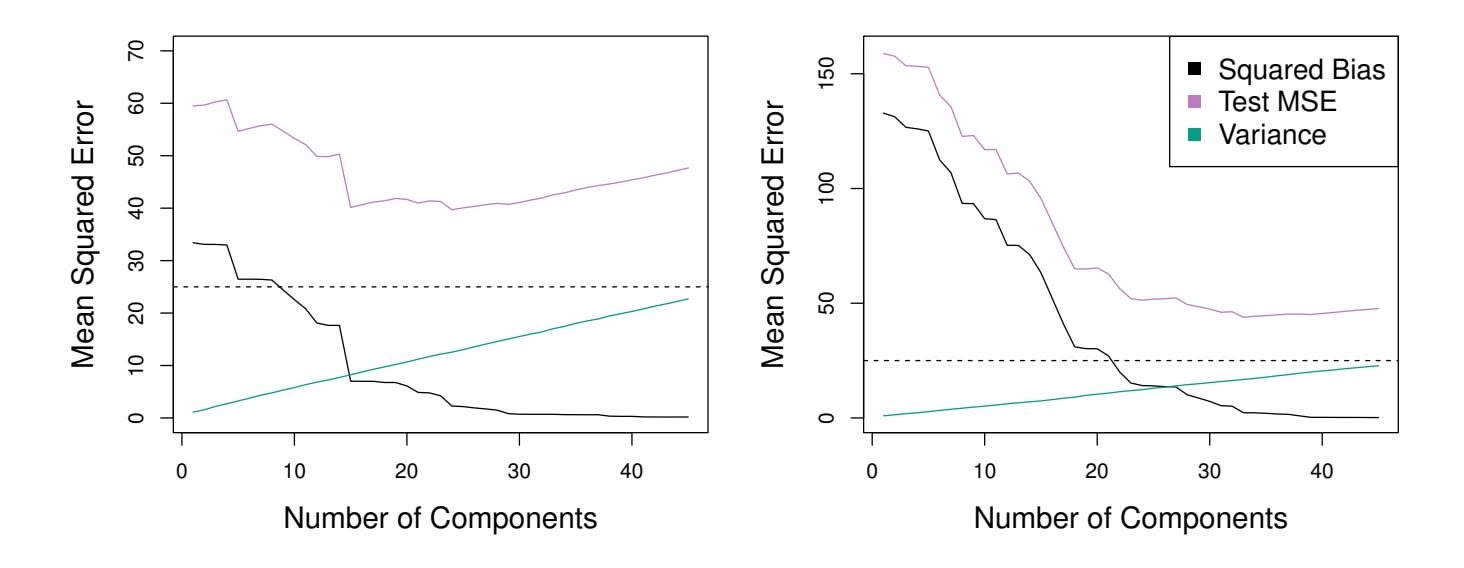

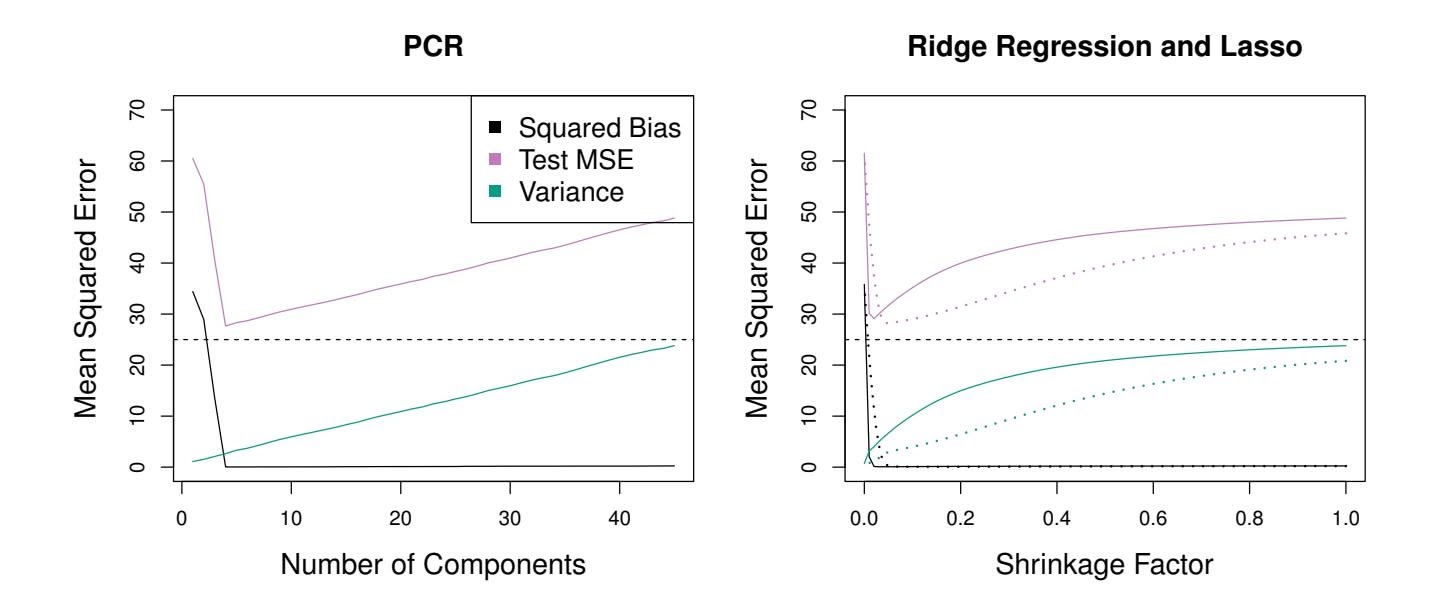

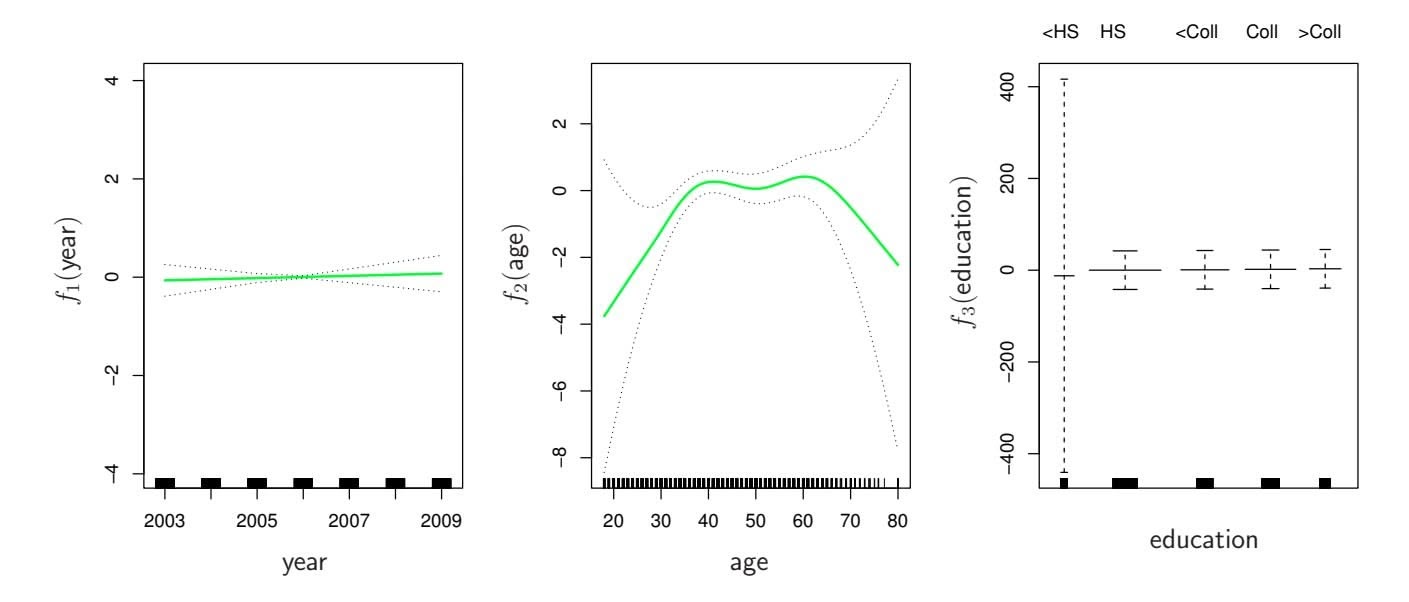

**FIGURE 6.5.** *Squared bias (black), variance (green), and test mean squared error (purple) for the ridge regression predictions on a simulated data set, as a function of $\lambda$ and $\|\hat{\beta}_{\lambda}^{R}\|_2 / \|\hat{\beta}\|_2$ . The horizontal dashed lines indicate the minimum possible MSE. The purple crosses indicate the ridge regression models for which the MSE is smallest.*

so that they are all on the same scale. In (6.6), the denominator is the estimated standard deviation of the $j$ th predictor. Consequently, all of the standardized predictors will have a standard deviation of one. As a result the final fit will not depend on the scale on which the predictors are measured. In Figure 6.4, the $y$ -axis displays the standardized ridge regression coefficient estimates—that is, the coefficient estimates that result from performing ridge regression using standardized predictors.

### Why Does Ridge Regression Improve Over Least Squares?

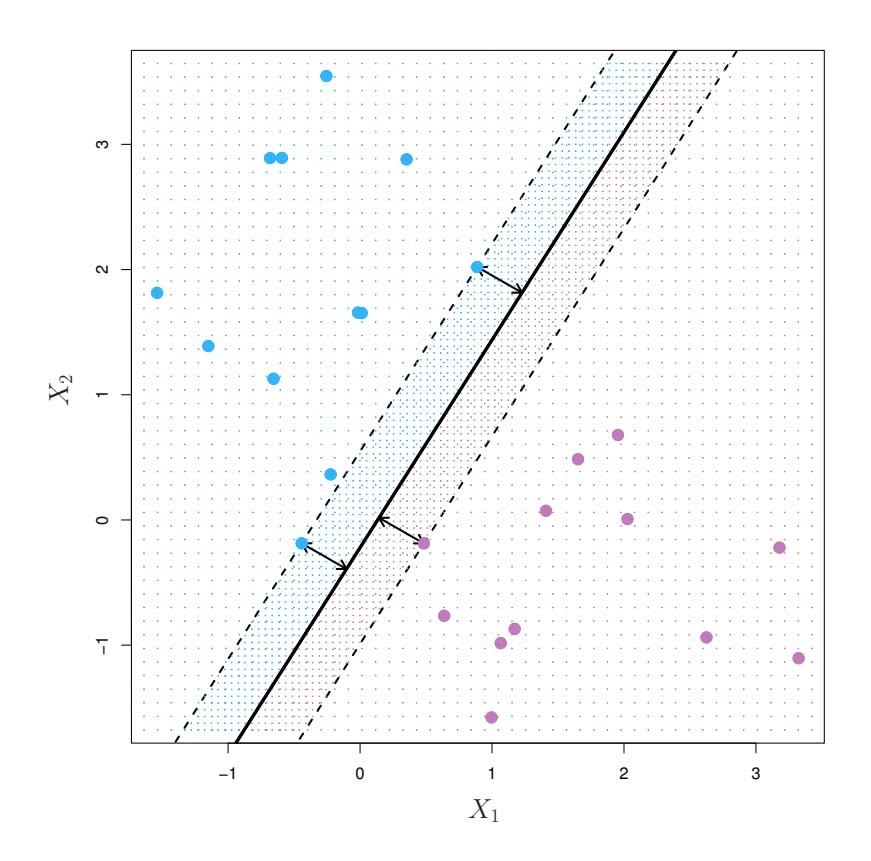

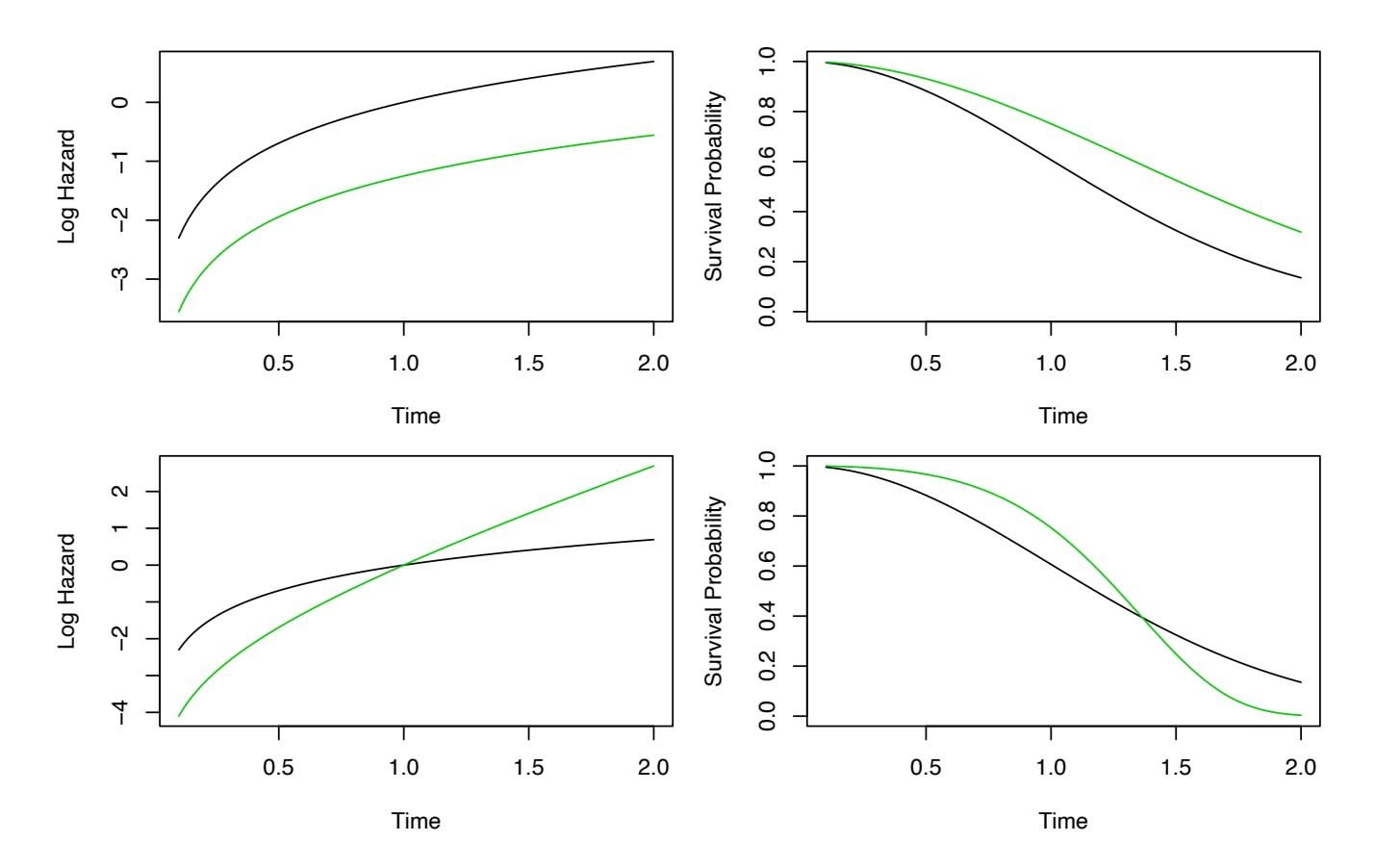

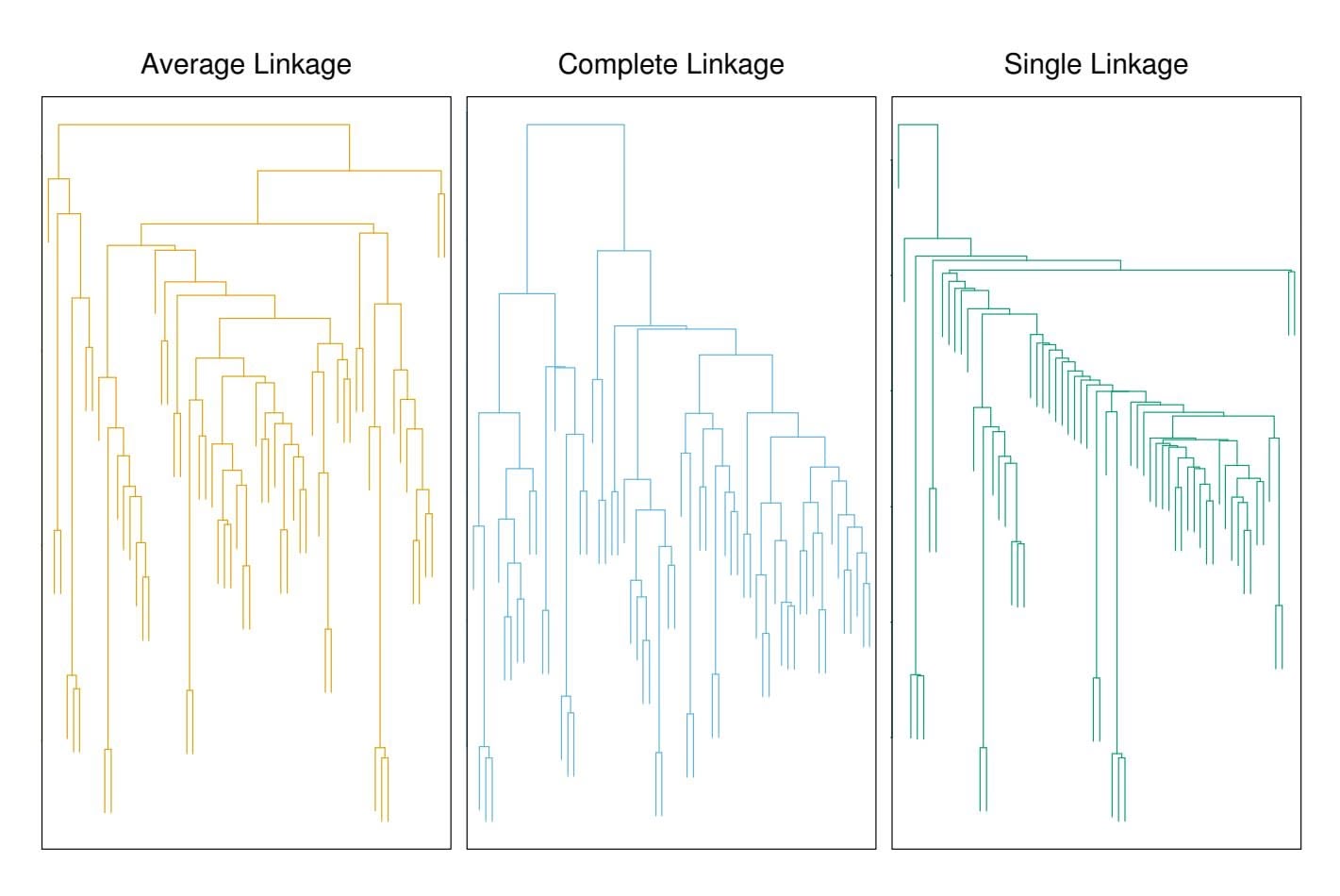

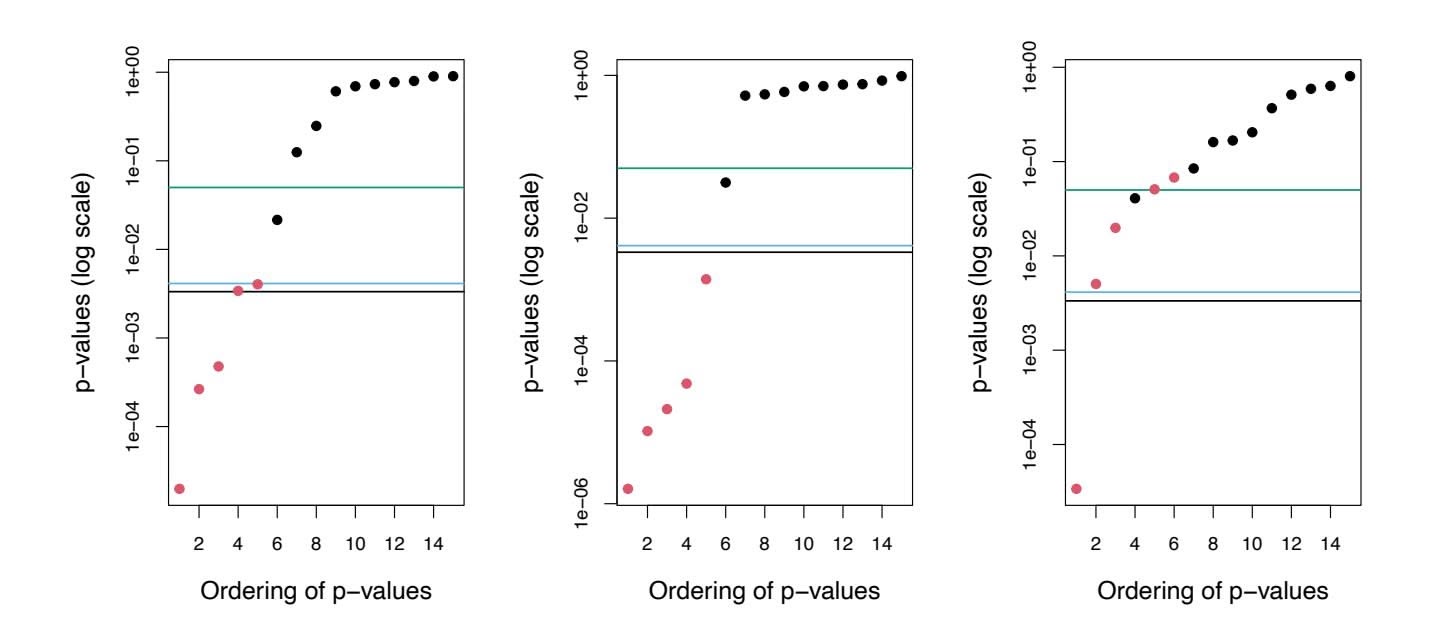

Ridge regression's advantage over least squares is rooted in the *bias-variance trade-off*. As $\lambda$ increases, the flexibility of the ridge regression fit decreases, leading to decreased variance but increased bias. This is illustrated in the left-hand panel of Figure 6.5, using a simulated data set containing $p = 45$ predictors and $n = 50$ observations. The green curve in the left-hand panel of Figure 6.5 displays the variance of the ridge regression predictions as a function of $\lambda$ . At the least squares coefficient estimates, which correspond to ridge regression with $\lambda = 0$ , the variance is high but there is no bias. But as $\lambda$ increases, the shrinkage of the ridge coefficient estimates leads to a substantial reduction in the variance of the predictions, at the expense of a slight increase in bias. Recall that the test mean squared error (MSE), plotted in purple, is closely related to the variance plus the squared bias. For values of $\lambda$ up to about 10, the variance decreases rapidly, with very little increase in bias, plotted in black. Consequently, the MSE drops considerably as $\lambda$ increases from 0 to 10. Beyond this point, the decrease in variance due to increasing $\lambda$ slows, and the shrinkage on the coefficients causes them to be significantly underestimated, resulting in a large increase in the bias. The minimum MSE is achieved at approximately $\lambda = 30$ . Interestingly,6.2 Shrinkage Methods 241

because of its high variance, the MSE associated with the least squares ft, when λ = 0, is almost as high as that of the null model for which all coeffcient estimates are zero, when λ = ∞. However, for an intermediate value of λ, the MSE is considerably lower.

The right-hand panel of Figure 6.5 displays the same curves as the lefthand panel, this time plotted against the ℓ2 norm of the ridge regression coeffcient estimates divided by the ℓ2 norm of the least squares estimates. Now as we move from left to right, the fts become more fexible, and so the bias decreases and the variance increases.

In general, in situations where the relationship between the response and the predictors is close to linear, the least squares estimates will have low bias but may have high variance. This means that a small change in the training data can cause a large change in the least squares coeffcient estimates. In particular, when the number of variables *p* is almost as large as the number of observations *n*, as in the example in Figure 6.5, the least squares estimates will be extremely variable. And if *p>n*, then the least squares estimates do not even have a unique solution, whereas ridge regression can still perform well by trading of a small increase in bias for a large decrease in variance. Hence, ridge regression works best in situations where the least squares estimates have high variance.

Ridge regression also has substantial computational advantages over best subset selection, which requires searching through 2*p* models. As we discussed previously, even for moderate values of *p*, such a search can be computationally infeasible. In contrast, for any fxed value of λ, ridge regression only fts a single model, and the model-ftting procedure can be performed quite quickly. In fact, one can show that the computations required to solve (6.5), *simultaneously for all values of* λ, are almost identical to those for ftting a model using least squares.

# *6.2.2 The Lasso*

Ridge regression does have one obvious disadvantage. Unlike best subset, forward stepwise, and backward stepwise selection, which will generally select models that involve just a subset of the variables, ridge regression will include all *p* predictors in the final model. The penalty $λ Σ β_j^2$ in (6.5) will shrink all of the coefficients towards zero, but it will not set any of them exactly to zero (unless $λ = ∞$ ). This may not be a problem for prediction accuracy, but it can create a challenge in model interpretation in settings in which the number of variables *p* is quite large. For example, in the Credit data set, it appears that the most important variables are income, limit, rating, and student. So we might wish to build a model including just these predictors. However, ridge regression will always generate a model involving all ten predictors. Increasing the value of $λ$ will tend to reduce the magnitudes of the coefficients, but will not result in exclusion of any of the variables.

# 242 6. Linear Model Selection and Regularization

The *lasso* is a relatively recent alternative to ridge regression that overcomes this disadvantage. The lasso coefficients, $\hat{\beta}_{\lambda}^{L}$ , minimize the quantity

$$

\sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2 + \lambda \sum_{j=1}^{p} |\beta_j| = \text{RSS} + \lambda \sum_{j=1}^{p} |\beta_j| \quad (6.7)

$$

Comparing (6.7) to (6.5), we see that the lasso and ridge regression have similar formulations. The only difference is that the $\beta_j^2$ term in the ridge regression penalty (6.5) has been replaced by $|\beta_j|$ in the lasso penalty (6.7). In statistical parlance, the lasso uses an $\ell_1$ (pronounced "ell 1") penalty instead of an $\ell_2$ penalty. The $\ell_1$ norm of a coefficient vector $\beta$ is given by $||\beta||_1 = \sum |\beta_j|$ .

As with ridge regression, the lasso shrinks the coeffcient estimates towards zero. However, in the case of the lasso, the ℓ1 penalty has the efect of forcing some of the coeffcient estimates to be exactly equal to zero when the tuning parameter λ is suffciently large. Hence, much like best subset selection, the lasso performs *variable selection*. As a result, models generated from the lasso are generally much easier to interpret than those produced by ridge regression. We say that the lasso yields *sparse* models—that is, sparse models that involve only a subset of the variables. As in ridge regression, selecting a good value of λ for the lasso is critical; we defer this discussion to Section 6.2.3, where we use cross-validation.

sparse

As an example, consider the coeffcient plots in Figure 6.6, which are generated from applying the lasso to the Credit data set. When λ = 0, then the lasso simply gives the least squares ft, and when λ becomes suffciently large, the lasso gives the null model in which all coeffcient estimates equal zero. However, in between these two extremes, the ridge regression and lasso models are quite diferent from each other. Moving from left to right in the right-hand panel of Figure 6.6, we observe that at frst the lasso results in a model that contains only the rating predictor. Then student and limit enter the model almost simultaneously, shortly followed by income. Eventually, the remaining variables enter the model. Hence, depending on the value of λ, the lasso can produce a model involving any number of variables. In contrast, ridge regression will always include all of the variables in the model, although the magnitude of the coeffcient estimates will depend on λ.

6.2 Shrinkage Methods 243

**FIGURE 6.6.** *The standardized lasso coeffcients on the* Credit *data set are shown as a function of* λ *and* ∥βˆ*L* λ ∥1*/*∥βˆ∥1*.*

### Another Formulation for Ridge Regression and the Lasso

One can show that the lasso and ridge regression coeffcient estimates solve the problems

$$

\underset{\beta}{\text{minimize}} \left\{ \sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2 \right\} \quad \text{subject to} \quad \sum_{j=1}^{p} |\beta_j| \le s \quad (6.8)

$$

and

minimizeβ

$$

\left\{ \sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2 \right\} \text{ subject to } \sum_{j=1}^{p} \beta_j^2 \leq s, \tag{6.9}

$$

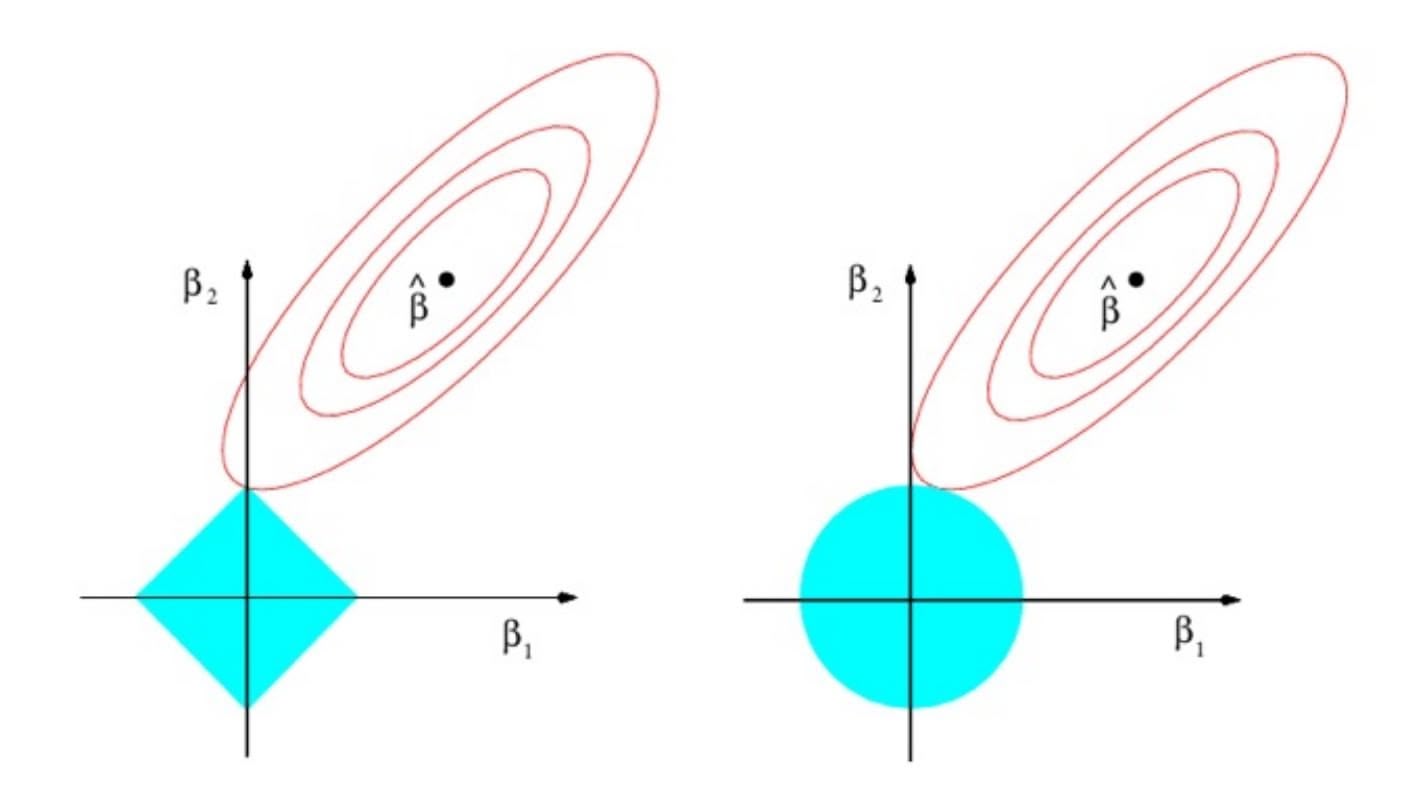

respectively. In other words, for every value of $\lambda$ , there is some *s* such that the Equations (6.7) and (6.8) will give the same lasso coefficient estimates. Similarly, for every value of $\lambda$ there is a corresponding *s* such that Equations (6.5) and (6.9) will give the same ridge regression coefficient estimates. When $p = 2$ , then (6.8) indicates that the lasso coefficient estimates have the smallest RSS out of all points that lie within the diamond defined by $|\beta_1| + |\beta_2| \le s$ . Similarly, the ridge regression estimates have the smallest RSS out of all points that lie within the circle defined by $\beta_1^2 + \beta_2^2 \le s$ .

We can think of (6.8) as follows. When we perform the lasso we are trying to find the set of coefficient estimates that lead to the smallest RSS, subject to the constraint that there is a *budget s* for how large $\sum_{j=1}^{p} |\beta_j|$ can be. When *s* is extremely large, then this budget is not very restrictive, and so the coefficient estimates can be large. In fact, if *s* is large enough that the least squares solution falls within the budget, then (6.8) will simply yield the least squares solution. In contrast, if *s* is small, then $\sum_{j=1}^{p} |\beta_j|$ must be

# 244 6. Linear Model Selection and Regularization

small in order to avoid violating the budget. Similarly, (6.9) indicates that when we perform ridge regression, we seek a set of coefficient estimates such that the RSS is as small as possible, subject to the requirement that $\sum_{j=1}^{p} \beta_{j}^{2}$ not exceed the budget *s*.The formulations $(6.8)$ and $(6.9)$ reveal a close connection between the lasso, ridge regression, and best subset selection. Consider the problem

$$

\underset{\beta}{\text{minimize}} \left\{ \sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \right)^2 \right\} \quad \text{subject to} \quad \sum_{j=1}^{p} I(\beta_j \neq 0) \leq s. \tag{6.10}

$$

Here $I(\beta_j \neq 0)$ is an indicator variable: it takes on a value of 1 if $\beta_j \neq 0$ , and equals zero otherwise. Then (6.10) amounts to finding a set of coefficient estimates such that RSS is as small as possible, subject to the constraint that no more than *s* coefficients can be nonzero. The problem (6.10) is equivalent to best subset selection. Unfortunately, solving (6.10) is computationally infeasible when *p* is large, since it requires considering all $\binom{p}{s}$ models containing *s* predictors. Therefore, we can interpret ridge regression and the lasso as computationally feasible alternatives to best subset selection that replace the intractable form of the budget in (6.10) with forms that are much easier to solve. Of course, the lasso is much more closely related to best subset selection, since the lasso performs feature selection for *s* sufficiently small in (6.8), while ridge regression does not.

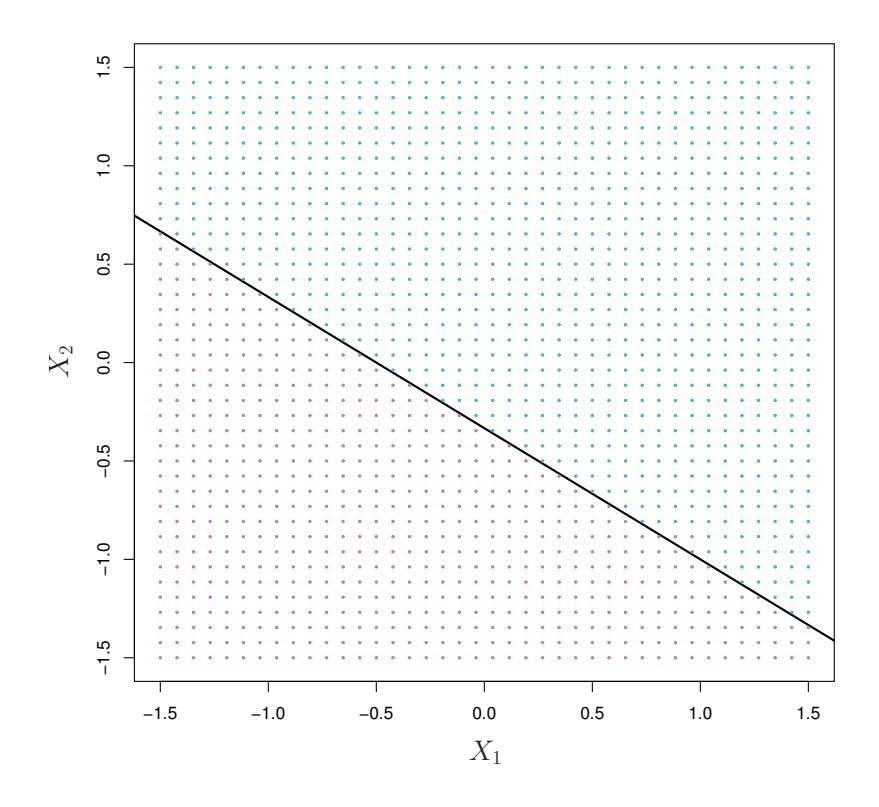

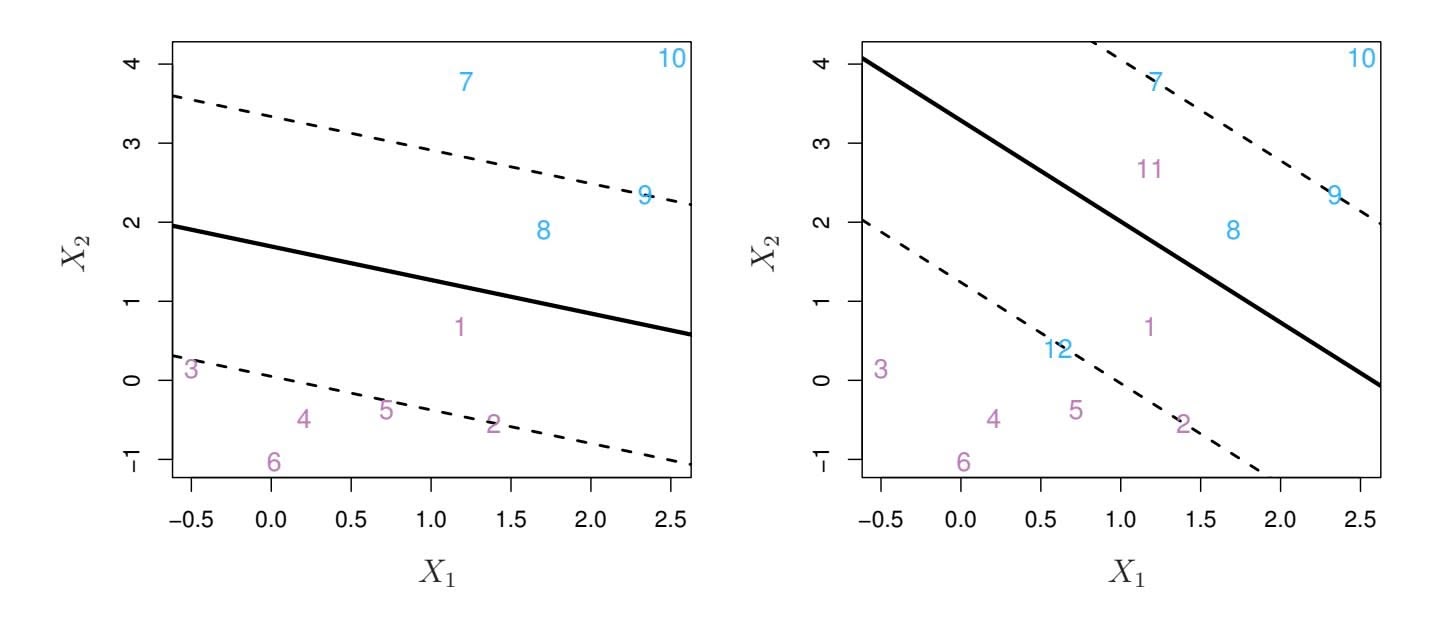

### The Variable Selection Property of the Lasso

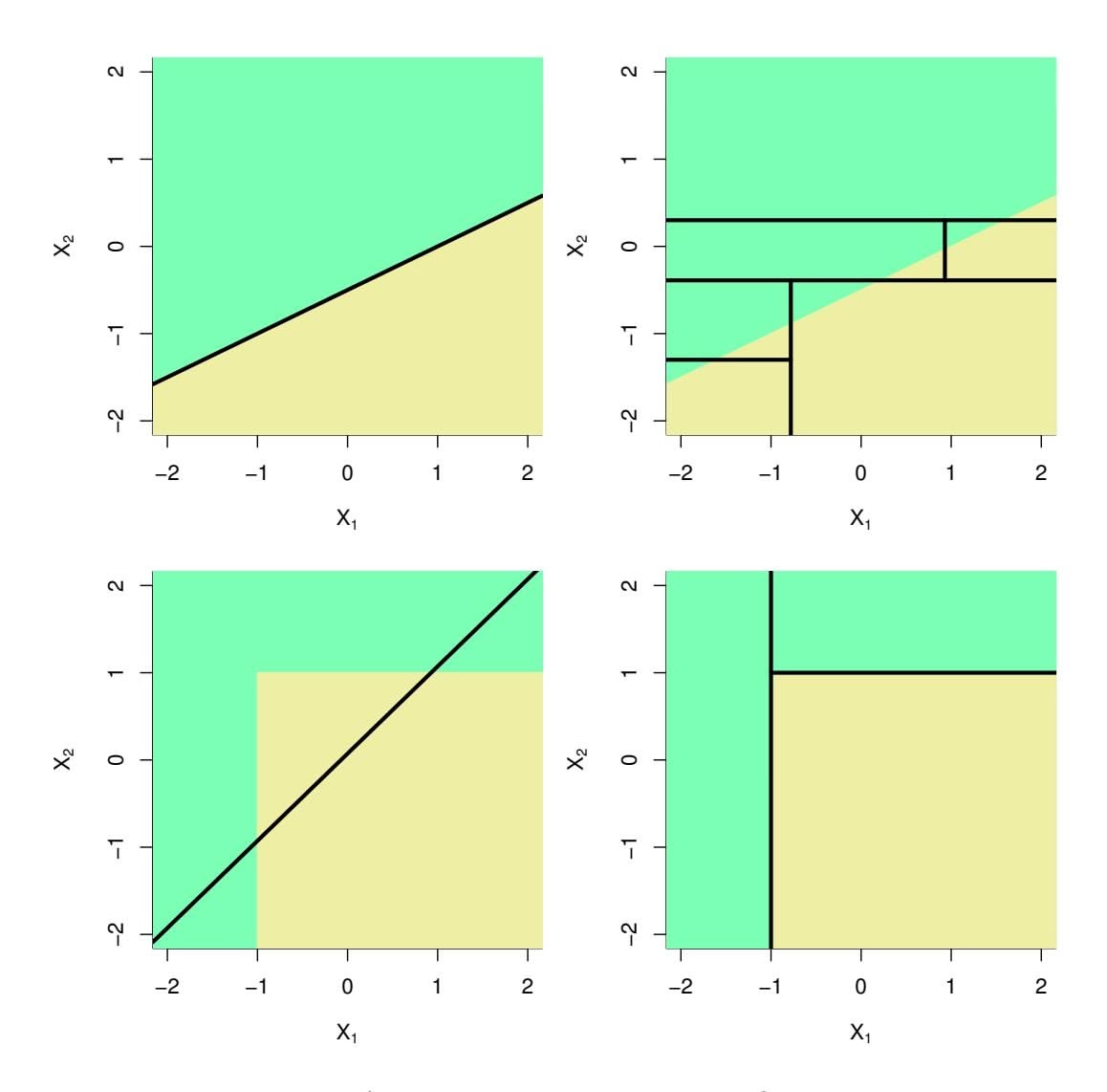

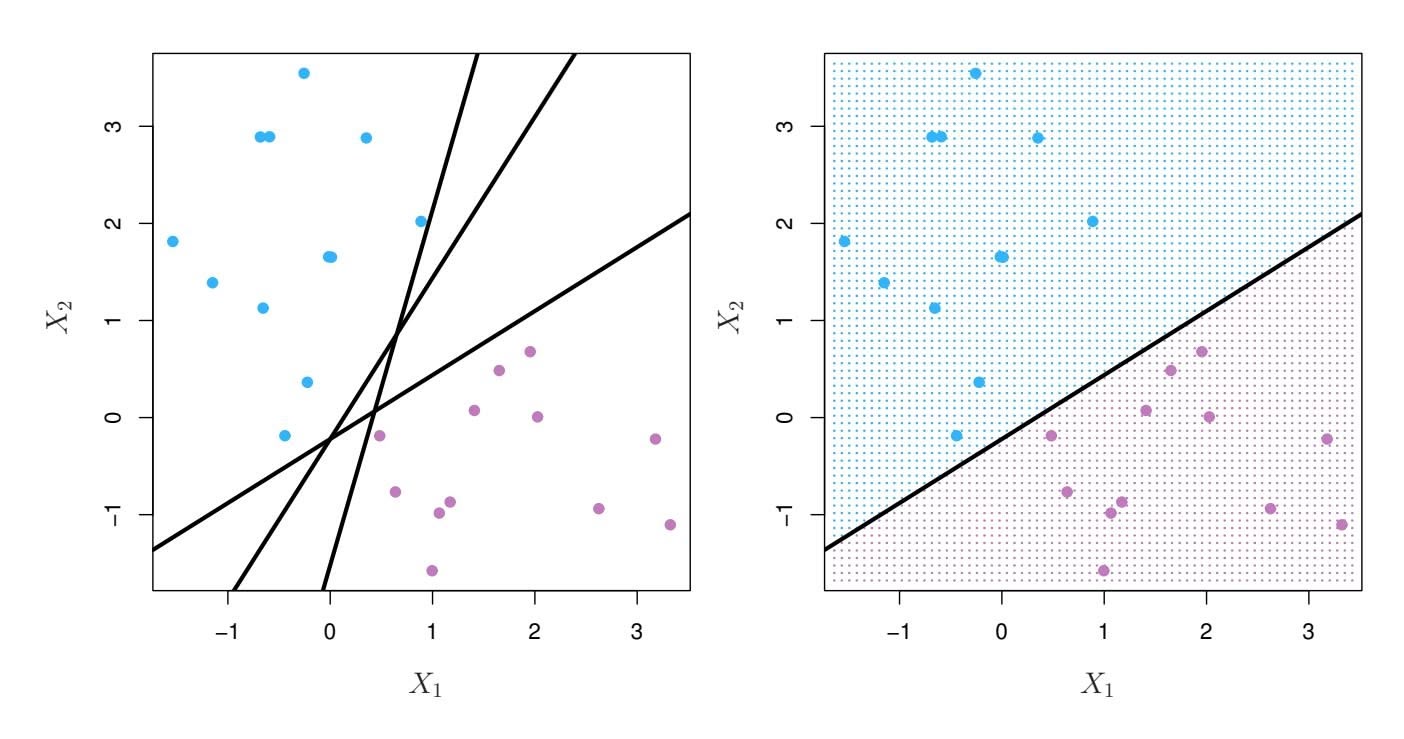

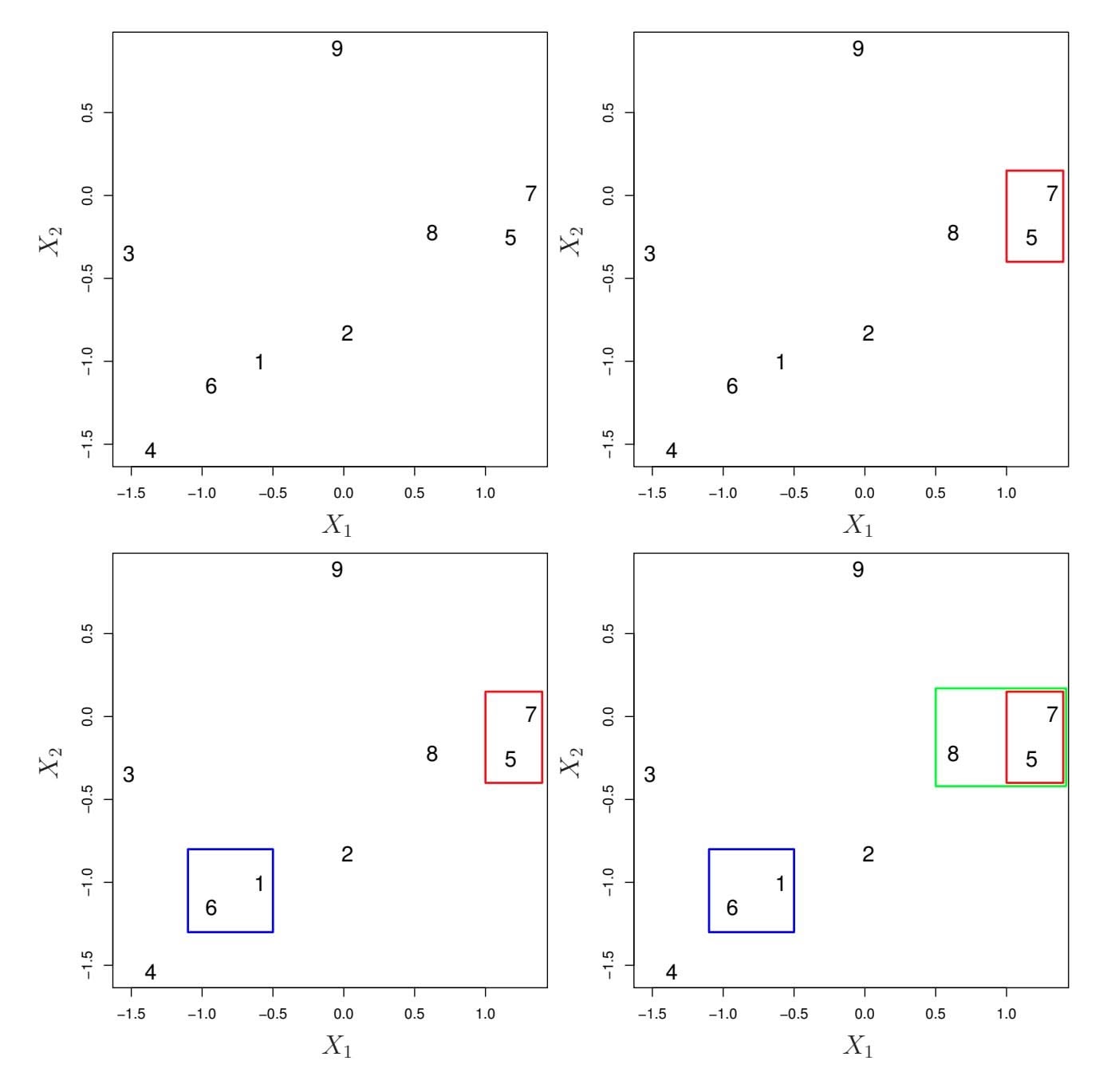

Why is it that the lasso, unlike ridge regression, results in coefficient estimates that are exactly equal to zero? The formulations (6.8) and (6.9) can be used to shed light on the issue. Figure 6.7 illustrates the situation. The least squares solution is marked as $\hat{\beta}$ , while the blue diamond and circle represent the lasso and ridge regression constraints in (6.8) and (6.9), respectively. If *s* is sufficiently large, then the constraint regions will contain $\hat{\beta}$ , and so the ridge regression and lasso estimates will be the same as the least squares estimates. (Such a large value of *s* corresponds to $\lambda = 0$ in (6.5) and (6.7).) However, in Figure 6.7 the least squares estimates lie outside of the diamond and the circle, and so the least squares estimates are not the same as the lasso and ridge regression estimates.

Each of the ellipses centered around $\hat{\beta}$ represents a *contour*: this means that all of the points on a particular ellipse have the same RSS value. As the ellipses expand away from the least squares coefficient estimates, the RSS increases. Equations (6.8) and (6.9) indicate that the lasso and ridge regression coefficient estimates are given by the first point at which an ellipse contacts the constraint region. Since ridge regression has a circular constraint with no sharp points, this intersection will not generally occur on an axis, and so the ridge regression coefficient estimates will be exclusively

6.2 Shrinkage Methods 245

**FIGURE 6.7.** *Contours of the error and constraint functions for the lasso* (left) *and ridge regression* (right)*. The solid blue areas are the constraint regions,* $|\beta_1| + |\beta_2| \le s$ *and* $\beta_1^2 + \beta_2^2 \le s$ *, while the red ellipses are the contours of the RSS.*

non-zero. However, the lasso constraint has *corners* at each of the axes, and so the ellipse will often intersect the constraint region at an axis. When this occurs, one of the coefficients will equal zero. In higher dimensions, many of the coefficient estimates may equal zero simultaneously. In Figure 6.7, the intersection occurs at $β_1 = 0$ , and so the resulting model will only include $β_2$ .In Figure 6.7, we considered the simple case of $p = 2$ . When $p = 3$ , then the constraint region for ridge regression becomes a sphere, and the constraint region for the lasso becomes a polyhedron. When $p > 3$ , the constraint for ridge regression becomes a hypersphere, and the constraint for the lasso becomes a polytope. However, the key ideas depicted in Figure 6.7 still hold. In particular, the lasso leads to feature selection when $p > 2$ due to the sharp corners of the polyhedron or polytope.

### Comparing the Lasso and Ridge Regression

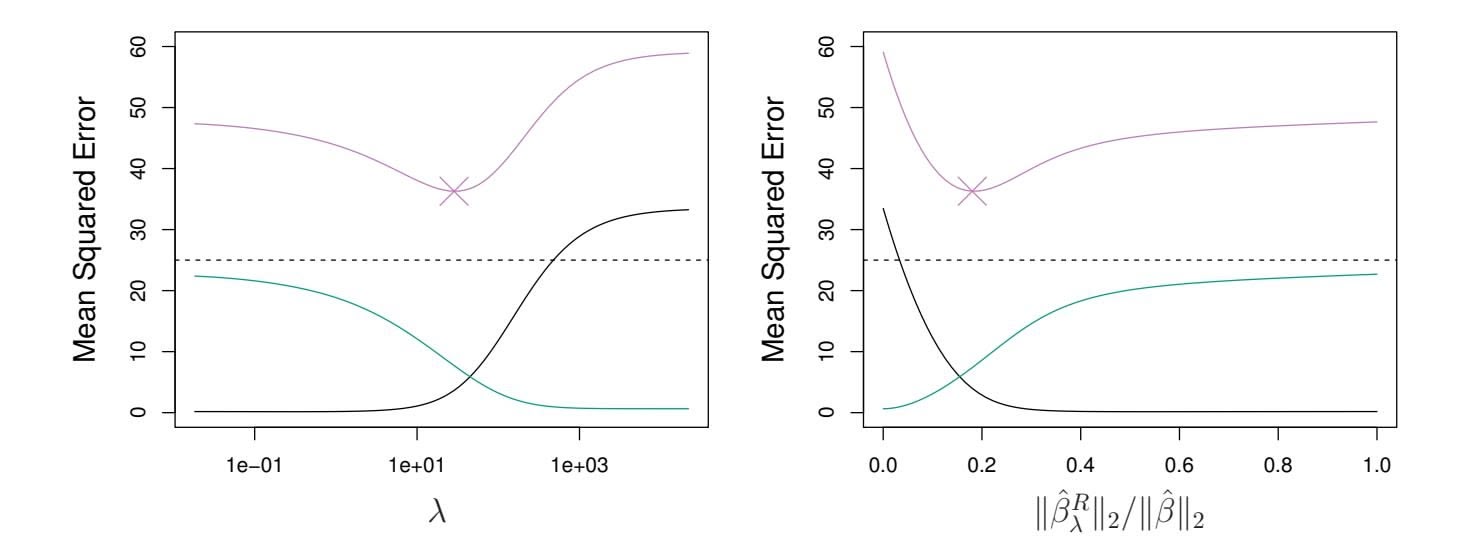

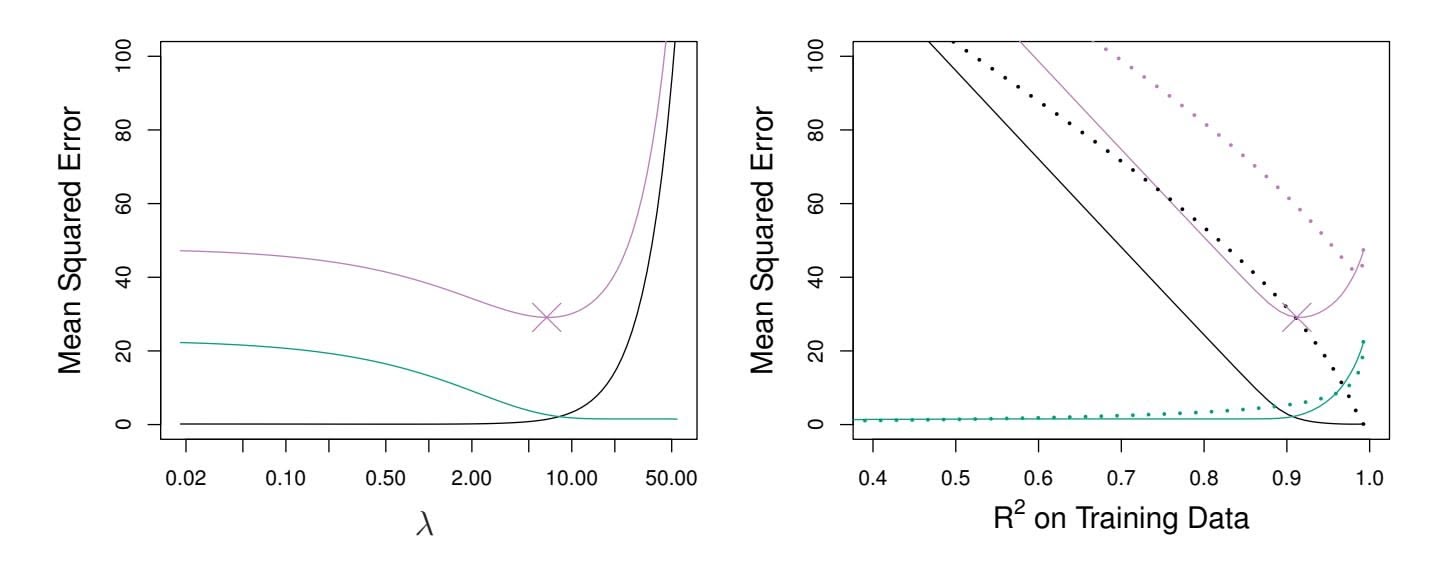

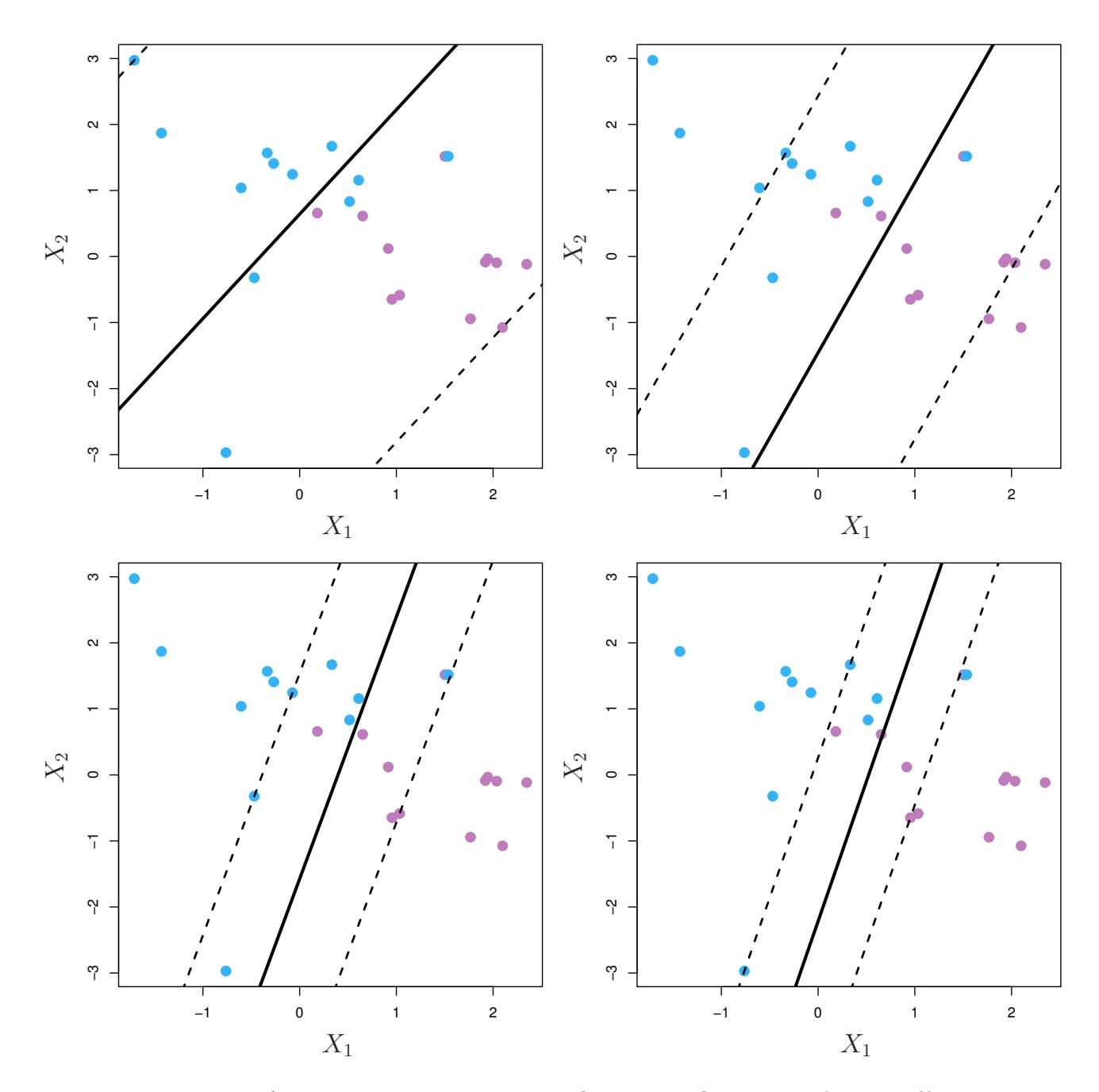

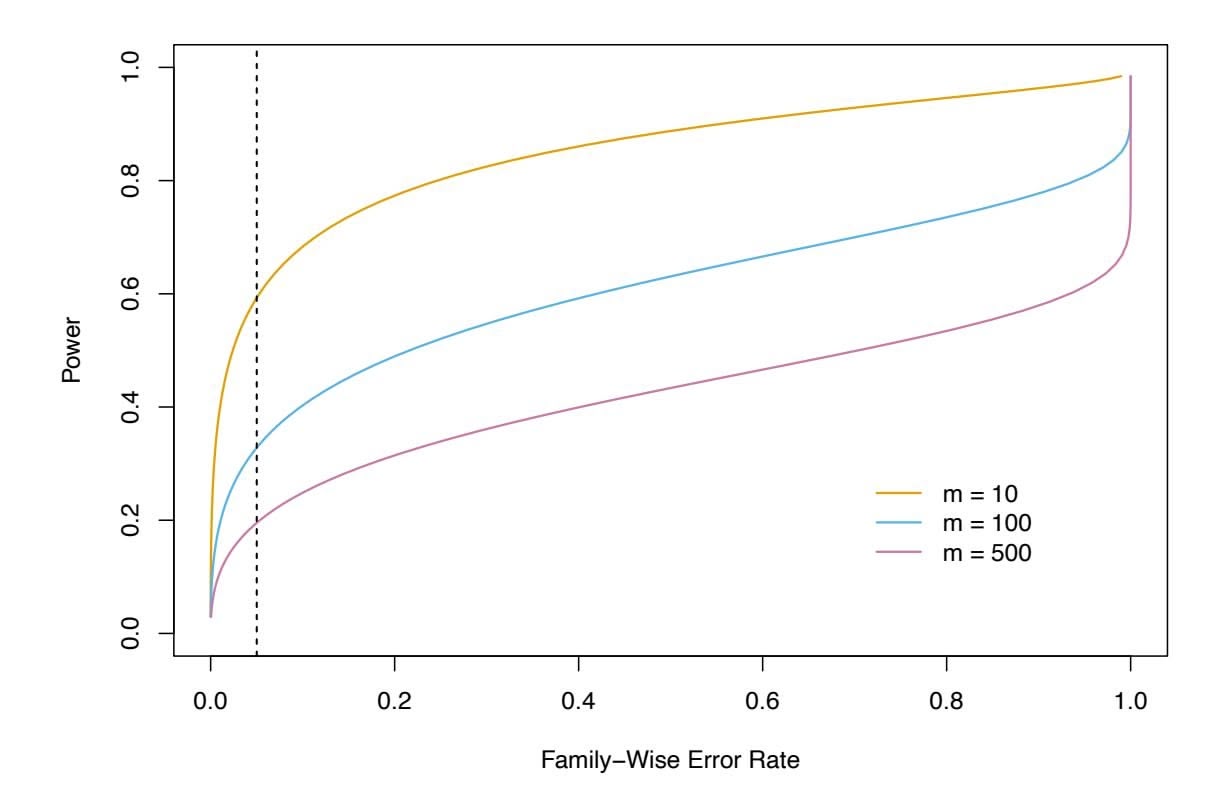

It is clear that the lasso has a major advantage over ridge regression, in that it produces simpler and more interpretable models that involve only a subset of the predictors. However, which method leads to better prediction accuracy? Figure 6.8 displays the variance, squared bias, and test MSE of the lasso applied to the same simulated data as in Figure 6.5. Clearly the lasso leads to qualitatively similar behavior to ridge regression, in that as $\lambda$ increases, the variance decreases and the bias increases. In the right-hand panel of Figure 6.8, the dotted lines represent the ridge regression fits. Here we plot both against their $R^2$ on the training data. This is another

246 6. Linear Model Selection and Regularization

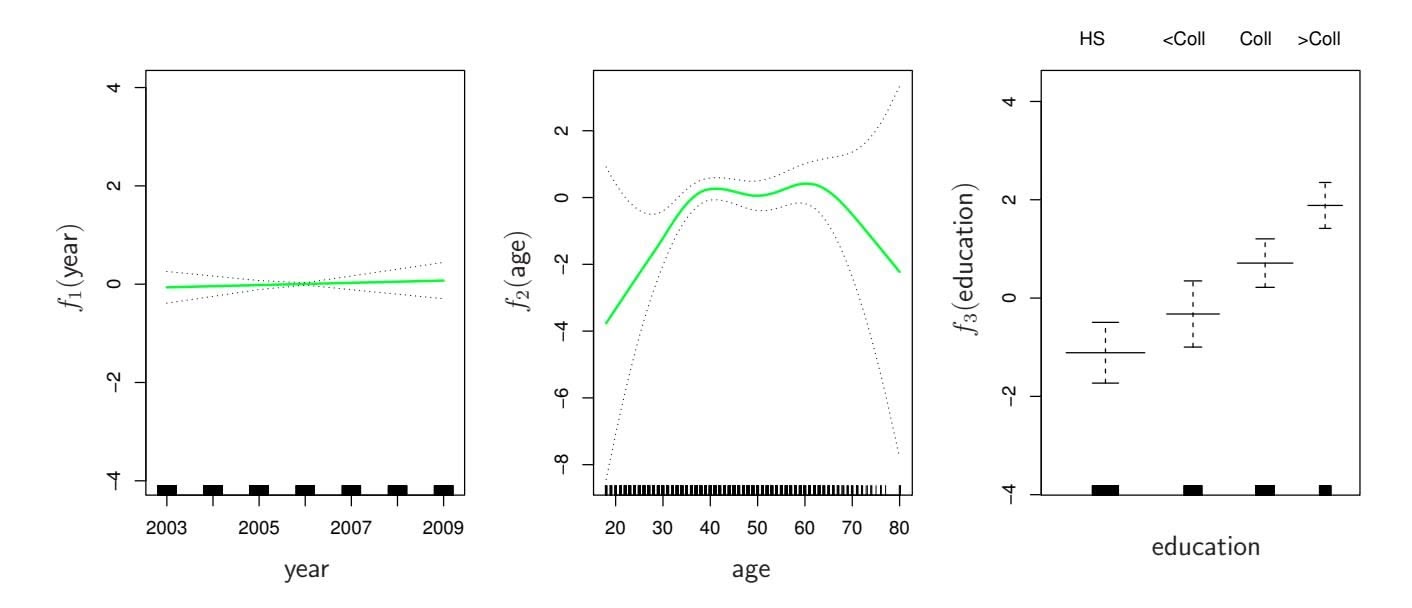

**FIGURE 6.8.** Left: *Plots of squared bias (black), variance (green), and test MSE (purple) for the lasso on a simulated data set.* Right: *Comparison of squared bias, variance, and test MSE between lasso (solid) and ridge (dotted). Both are plotted against their R*2 *on the training data, as a common form of indexing. The crosses in both plots indicate the lasso model for which the MSE is smallest.*

useful way to index models, and can be used to compare models with diferent types of regularization, as is the case here. In this example, the lasso and ridge regression result in almost identical biases. However, the variance of ridge regression is slightly lower than the variance of the lasso. Consequently, the minimum MSE of ridge regression is slightly smaller than that of the lasso.

However, the data in Figure 6.8 were generated in such a way that all 45 predictors were related to the response—that is, none of the true coeffcients β1*,...,* β45 equaled zero. The lasso implicitly assumes that a number of the coeffcients truly equal zero. Consequently, it is not surprising that ridge regression outperforms the lasso in terms of prediction error in this setting. Figure 6.9 illustrates a similar situation, except that now the response is a function of only 2 out of 45 predictors. Now the lasso tends to outperform ridge regression in terms of bias, variance, and MSE.

These two examples illustrate that neither ridge regression nor the lasso will universally dominate the other. In general, one might expect the lasso to perform better in a setting where a relatively small number of predictors have substantial coeffcients, and the remaining predictors have coeffcients that are very small or that equal zero. Ridge regression will perform better when the response is a function of many predictors, all with coeffcients of roughly equal size. However, the number of predictors that is related to the response is never known *a priori* for real data sets. A technique such as cross-validation can be used in order to determine which approach is better on a particular data set.

As with ridge regression, when the least squares estimates have excessively high variance, the lasso solution can yield a reduction in variance at the expense of a small increase in bias, and consequently can gener-

6.2 Shrinkage Methods 247

**FIGURE 6.9.** Left: *Plots of squared bias (black), variance (green), and test MSE (purple) for the lasso. The simulated data is similar to that in Figure 6.8, except that now only two predictors are related to the response.* Right: *Comparison of squared bias, variance, and test MSE between lasso (solid) and ridge (dotted). Both are plotted against their R*2 *on the training data, as a common form of indexing. The crosses in both plots indicate the lasso model for which the MSE is smallest.*

ate more accurate predictions. Unlike ridge regression, the lasso performs variable selection, and hence results in models that are easier to interpret.

There are very effcient algorithms for ftting both ridge and lasso models; in both cases the entire coeffcient paths can be computed with about the same amount of work as a single least squares ft. We will explore this further in the lab at the end of this chapter.

### A Simple Special Case for Ridge Regression and the Lasso

In order to obtain a better intuition about the behavior of ridge regression and the lasso, consider a simple special case with $n = p$ , and $X$ a diagonal matrix with 1's on the diagonal and 0's in all off-diagonal elements. To simplify the problem further, assume also that we are performing regression without an intercept. With these assumptions, the usual least squares problem simplifies to finding $β_1, ..., β_p$ that minimize

$$

\sum_{j=1}^{p} (y_j - \beta_j)^2 \quad (6.11)

$$

In this case, the least squares solution is given by

$$

\hat{\beta}_j = y_j.

$$

And in this setting, ridge regression amounts to fnding β1*,...,* β*p* such that

$$

\sum_{j=1}^{p} (y_j - \beta_j)^2 + \lambda \sum_{j=1}^{p} \beta_j^2 \quad (6.12)

$$

248 6. Linear Model Selection and Regularization

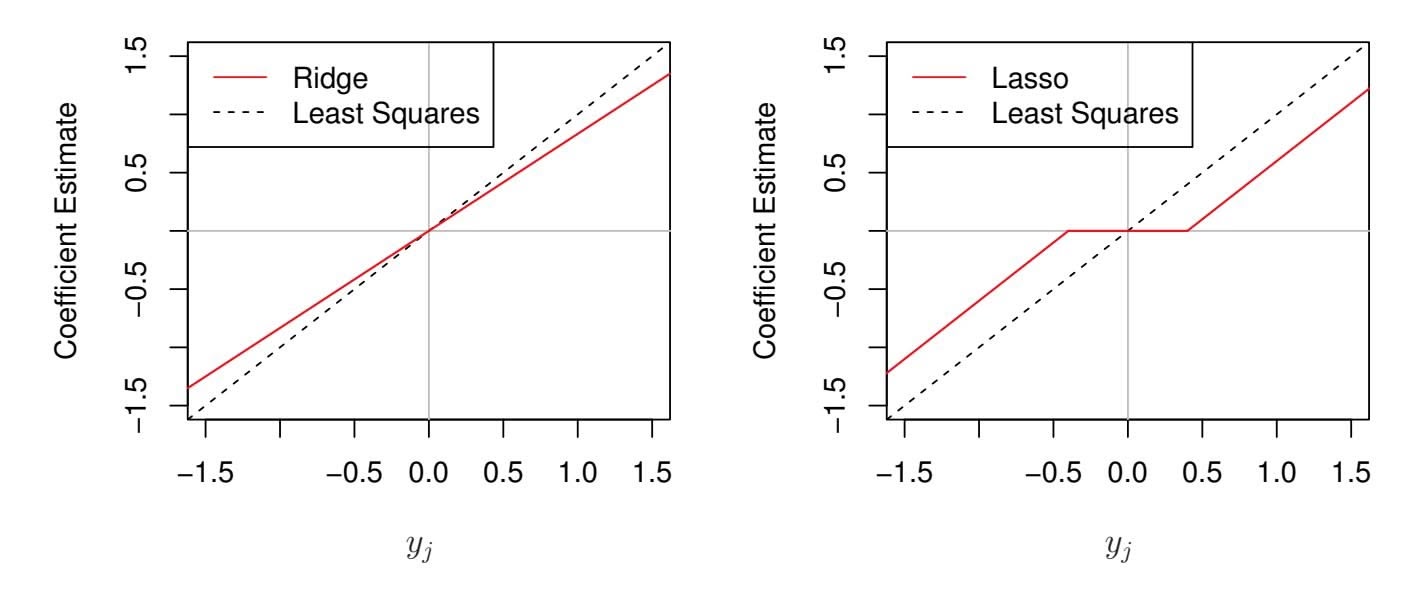

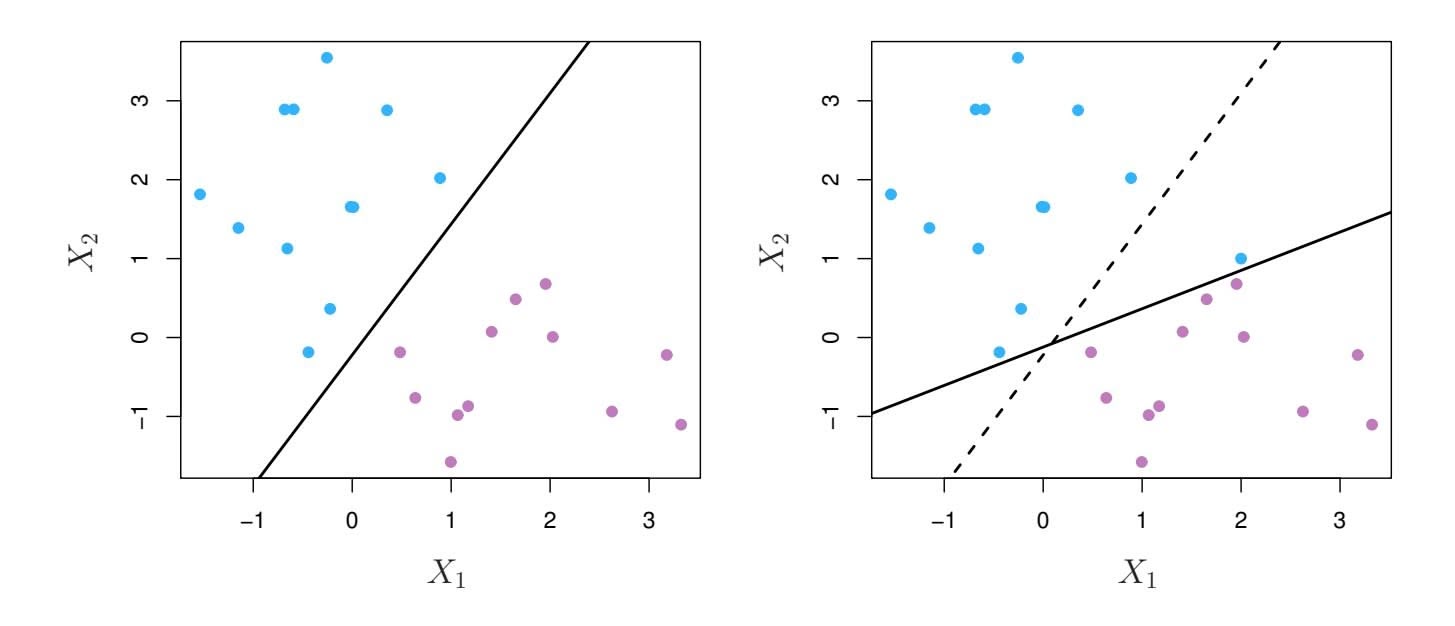

**FIGURE 6.10.** *The ridge regression and lasso coeffcient estimates for a simple setting with n* = *p and* X *a diagonal matrix with* 1*'s on the diagonal.* Left: *The ridge regression coeffcient estimates are shrunken proportionally towards zero, relative to the least squares estimates.* Right: *The lasso coeffcient estimates are soft-thresholded towards zero.*

is minimized, and the lasso amounts to fnding the coeffcients such that

$$

\sum_{j=1}^{p} (y_j - \beta_j)^2 + \lambda \sum_{j=1}^{p} |\beta_j| \quad (6.13)

$$

is minimized. One can show that in this setting, the ridge regression estimates take the form

$$

\hat{\beta}_j^R = \frac{y_j}{1 + \lambda}, \tag{6.14}

$$

and the lasso estimates take the form

$$

\hat{\beta}_j^L = \begin{cases} y_j - \lambda/2 & \text{if } y_j > \lambda/2; \\ y_j + \lambda/2 & \text{if } y_j < -\lambda/2; \\ 0 & \text{if } |y_j| \le \lambda/2. \end{cases} \tag{6.15}

$$

Figure 6.10 displays the situation. We can see that ridge regression and the lasso perform two very diferent types of shrinkage. In ridge regression, each least squares coeffcient estimate is shrunken by the same proportion. In contrast, the lasso shrinks each least squares coeffcient towards zero by a constant amount, λ*/*2; the least squares coeffcients that are less than λ*/*2 in absolute value are shrunken entirely to zero. The type of shrinkage performed by the lasso in this simple setting (6.15) is known as *softthresholding*. The fact that some lasso coeffcients are shrunken entirely to softthresholding zero explains why the lasso performs feature selection.

soft-

thresholding

In the case of a more general data matrix X, the story is a little more complicated than what is depicted in Figure 6.10, but the main ideas still hold approximately: ridge regression more or less shrinks every dimension of the data by the same proportion, whereas the lasso more or less shrinks

6.2 Shrinkage Methods 249

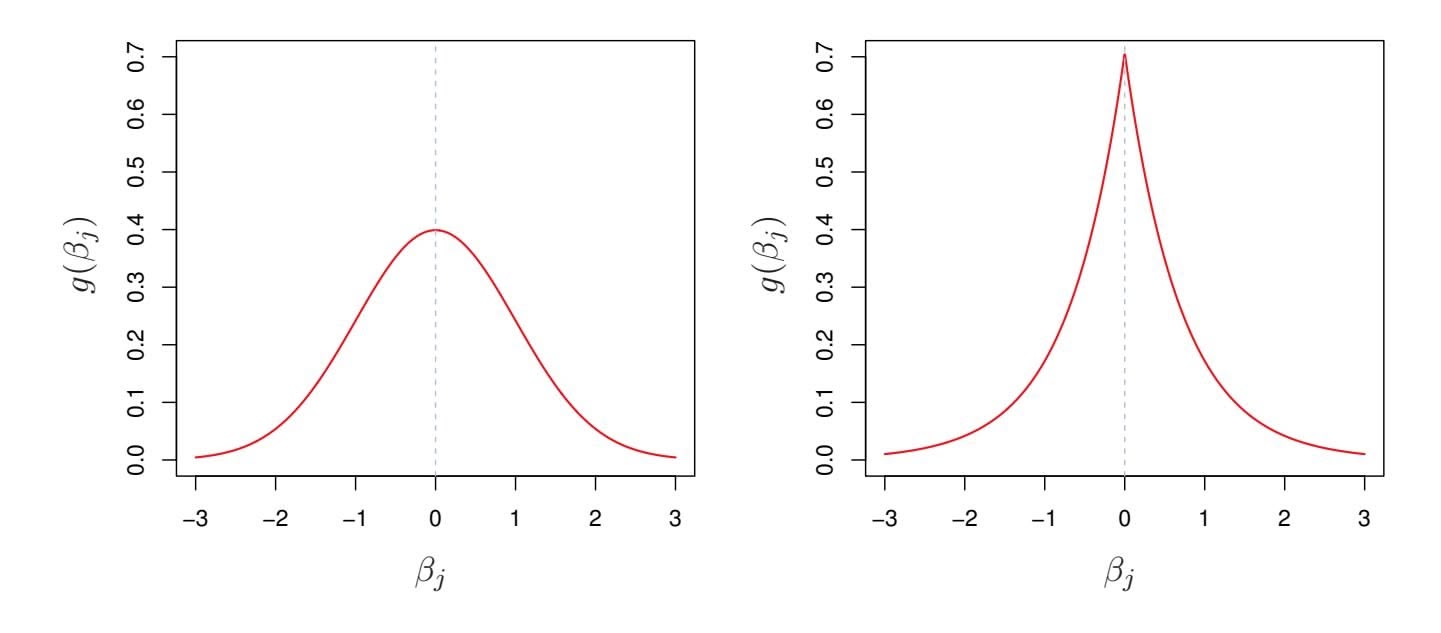

**FIGURE 6.11.** Left: *Ridge regression is the posterior mode for* β *under a Gaussian prior.* Right: *The lasso is the posterior mode for* β *under a double-exponential prior.*

all coeffcients toward zero by a similar amount, and suffciently small coeffcients are shrunken all the way to zero.

### Bayesian Interpretation of Ridge Regression and the Lasso

We now show that one can view ridge regression and the lasso through a Bayesian lens. A Bayesian viewpoint for regression assumes that the coefficient vector $\beta$ has some *prior* distribution, say $p(\beta)$ , where $\beta = (\beta_0, \beta_1, \dots, \beta_p)^T$ . The likelihood of the data can be written as $f(Y|X, \beta)$ , where $X = (X_1, \dots, X_p)$ . Multiplying the prior distribution by the likelihood gives us (up to a proportionality constant) the *posterior distribution*, which takes the form

posterior

distribution

$$

p(\beta|X, Y) \propto f(Y|X, \beta)p(\beta|X) = f(Y|X, \beta)p(\beta),

$$

where the proportionality above follows from Bayes' theorem, and the equality above follows from the assumption that *X* is fxed.

We assume the usual linear model,

$$

Y = \beta_0 + X_1 \beta_1 + \dots + X_p \beta_p + \epsilon,

$$

and suppose that the errors are independent and drawn from a normal distribution. Furthermore, assume that $p(\beta) = \prod_{j=1}^{p} g(\beta_j)$ , for some density function $g$ . It turns out that ridge regression and the lasso follow naturally from two special cases of $g$ :

• If *g* is a Gaussian distribution with mean zero and standard deviation a function of λ, then it follows that the *posterior mode* for β—that posterior mode is, the most likely value for β, given the data—is given by the ridge

posterior

mode

250 6. Linear Model Selection and Regularization

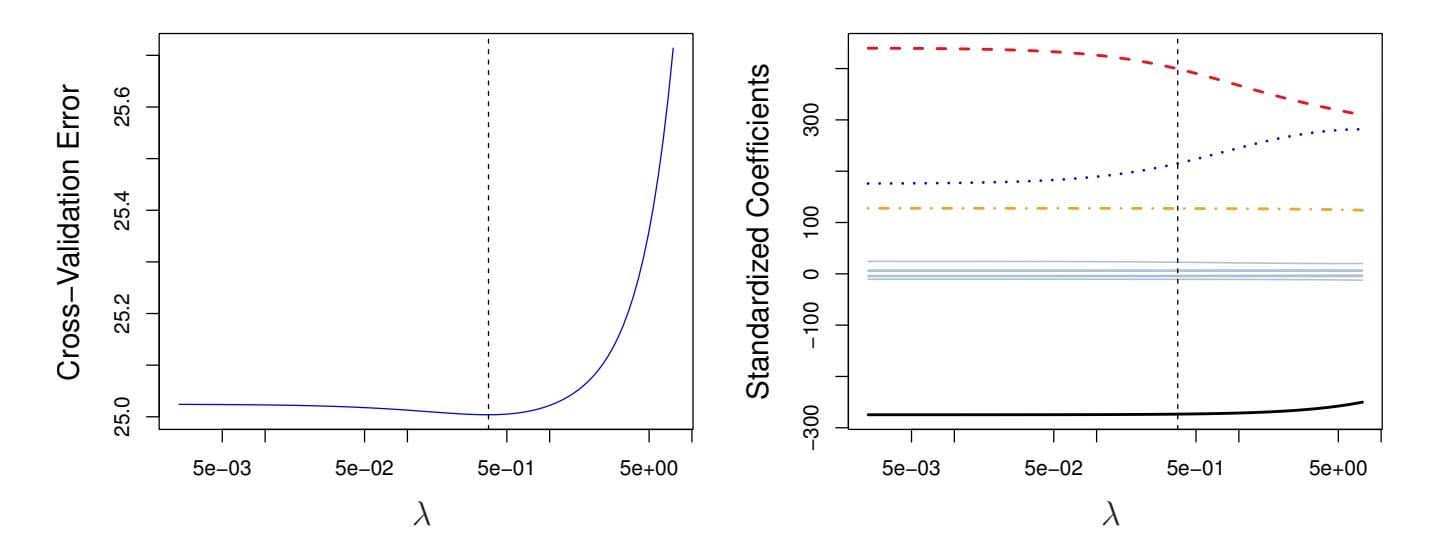

**FIGURE 6.12.** Left: *Cross-validation errors that result from applying ridge regression to the* Credit *data set with various values of* λ*.* Right: *The coeffcient estimates as a function of* λ*. The vertical dashed lines indicate the value of* λ *selected by cross-validation.*

regression solution. (In fact, the ridge regression solution is also the posterior mean.)

• If *g* is a double-exponential (Laplace) distribution with mean zero and scale parameter a function of λ, then it follows that the posterior mode for β is the lasso solution. (However, the lasso solution is *not* the posterior mean, and in fact, the posterior mean does not yield a sparse coeffcient vector.)

The Gaussian and double-exponential priors are displayed in Figure 6.11. Therefore, from a Bayesian viewpoint, ridge regression and the lasso follow directly from assuming the usual linear model with normal errors, together with a simple prior distribution for β. Notice that the lasso prior is steeply peaked at zero, while the Gaussian is fatter and fatter at zero. Hence, the lasso expects a priori that many of the coeffcients are (exactly) zero, while ridge assumes the coeffcients are randomly distributed about zero.

# *6.2.3 Selecting the Tuning Parameter*

Just as the subset selection approaches considered in Section 6.1 require a method to determine which of the models under consideration is best, implementing ridge regression and the lasso requires a method for selecting a value for the tuning parameter λ in (6.5) and (6.7), or equivalently, the value of the constraint *s* in (6.9) and (6.8). Cross-validation provides a simple way to tackle this problem. We choose a grid of λ values, and compute the cross-validation error for each value of λ, as described in Chapter 5. We then select the tuning parameter value for which the cross-validation error is smallest. Finally, the model is re-ft using all of the available observations and the selected value of the tuning parameter.

6.2 Shrinkage Methods 251

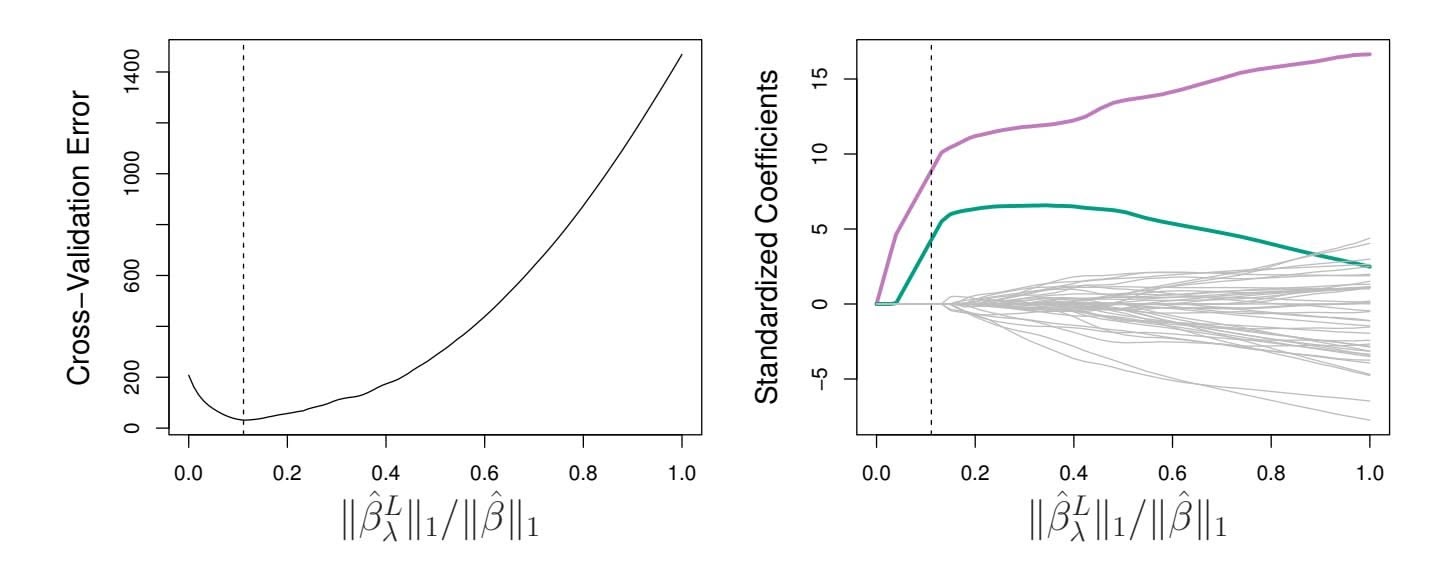

**FIGURE 6.13.** Left*: Ten-fold cross-validation MSE for the lasso, applied to the sparse simulated data set from Figure 6.9.* Right: *The corresponding lasso coeffcient estimates are displayed. The two signal variables are shown in color, and the noise variables are in gray. The vertical dashed lines indicate the lasso ft for which the cross-validation error is smallest.*

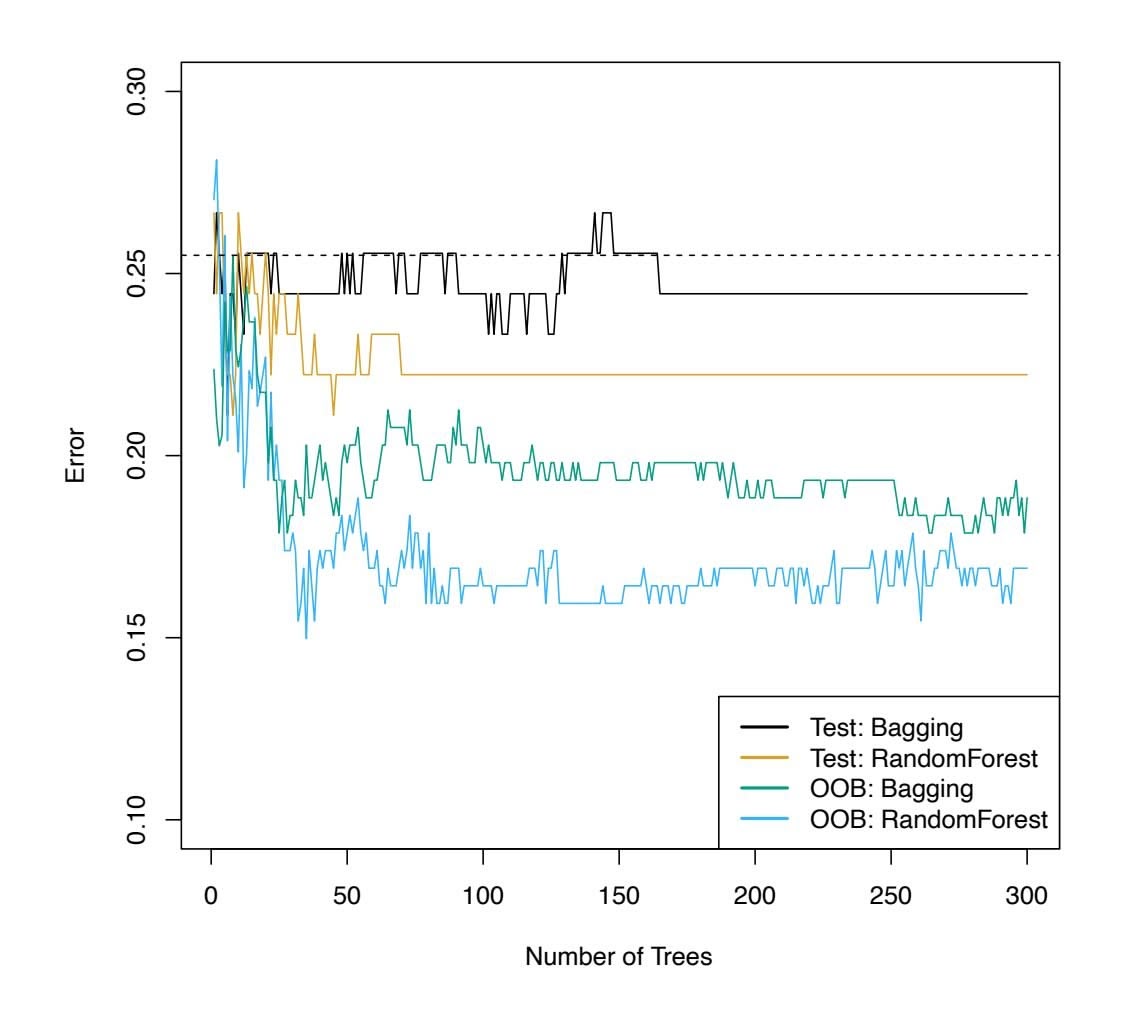

Figure 6.12 displays the choice of λ that results from performing leaveone-out cross-validation on the ridge regression fts from the Credit data set. The dashed vertical lines indicate the selected value of λ. In this case the value is relatively small, indicating that the optimal ft only involves a small amount of shrinkage relative to the least squares solution. In addition, the dip is not very pronounced, so there is rather a wide range of values that would give a very similar error. In a case like this we might simply use the least squares solution.

Figure 6.13 provides an illustration of ten-fold cross-validation applied to the lasso fts on the sparse simulated data from Figure 6.9. The left-hand panel of Figure 6.13 displays the cross-validation error, while the right-hand panel displays the coeffcient estimates. The vertical dashed lines indicate the point at which the cross-validation error is smallest. The two colored lines in the right-hand panel of Figure 6.13 represent the two predictors that are related to the response, while the grey lines represent the unrelated predictors; these are often referred to as *signal* and *noise* variables, signal respectively. Not only has the lasso correctly given much larger coeffcient estimates to the two signal predictors, but also the minimum crossvalidation error corresponds to a set of coeffcient estimates for which only the signal variables are non-zero. Hence cross-validation together with the lasso has correctly identifed the two signal variables in the model, even though this is a challenging setting, with *p* = 45 variables and only *n* = 50 observations. In contrast, the least squares solution—displayed on the far right of the right-hand panel of Figure 6.13—assigns a large coeffcient estimate to only one of the two signal variables.

252 6. Linear Model Selection and Regularization

# 6.3 Dimension Reduction Methods

The methods that we have discussed so far in this chapter have controlled variance in two diferent ways, either by using a subset of the original variables, or by shrinking their coeffcients toward zero. All of these methods are defned using the original predictors, *X*1*, X*2*,...,Xp*. We now explore a class of approaches that *transform* the predictors and then ft a least squares model using the transformed variables. We will refer to these techniques as *dimension reduction* methods. dimension

reduction Let *Z*1*, Z*2*,...,ZM* represent *Mm* are linearly independent, then (6.18) poses no constraints. In this case, no dimension reduction occurs, and so ftting (6.17) is equivalent to performing least squares on the original *p* predictors.

All dimension reduction methods work in two steps. First, the transformed predictors *Z*1*, Z*2*,...,ZM* are obtained. Second, the model is ft using these *M* predictors. However, the choice of *Z*1*, Z*2*,...,ZM*, or equivalently, the selection of the φ*jm*'s, can be achieved in diferent ways. In this chapter, we will consider two approaches for this task: *principal components* and *partial least squares*.

# *6.3.1 Principal Components Regression*

*Principal components analysis* (PCA) is a popular approach for deriving principal a low-dimensional set of features from a large set of variables. PCA is discussed in greater detail as a tool for *unsupervised learning* in Chapter 12. Here we describe its use as a dimension reduction technique for regression.

components analysis

### An Overview of Principal Components Analysis

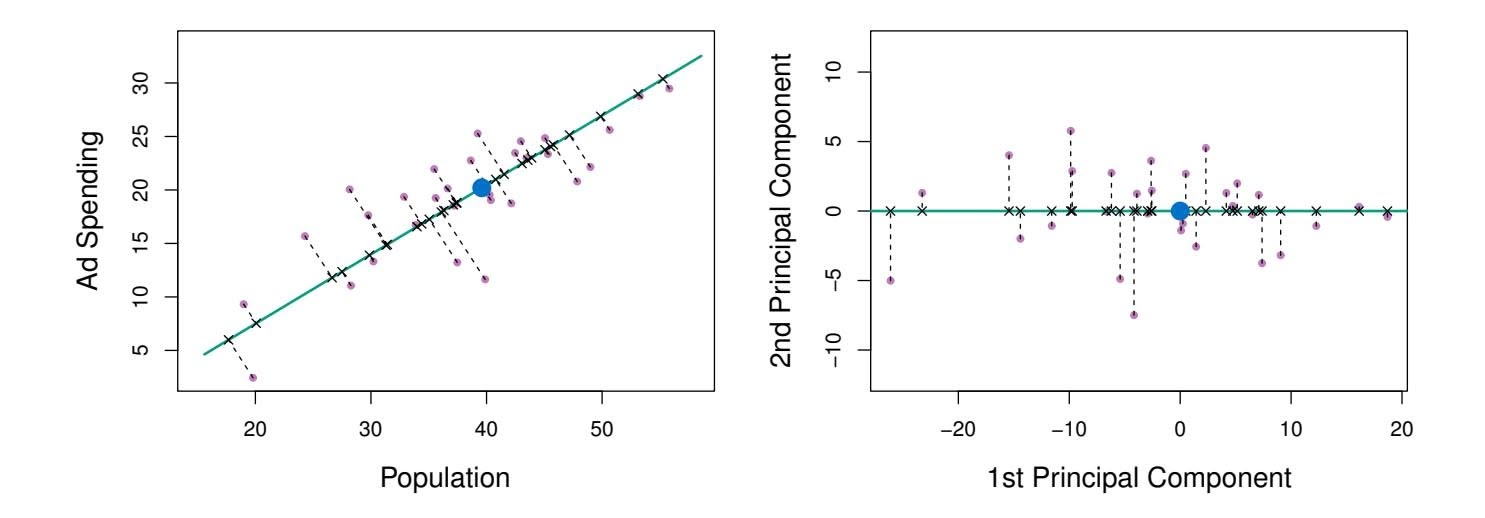

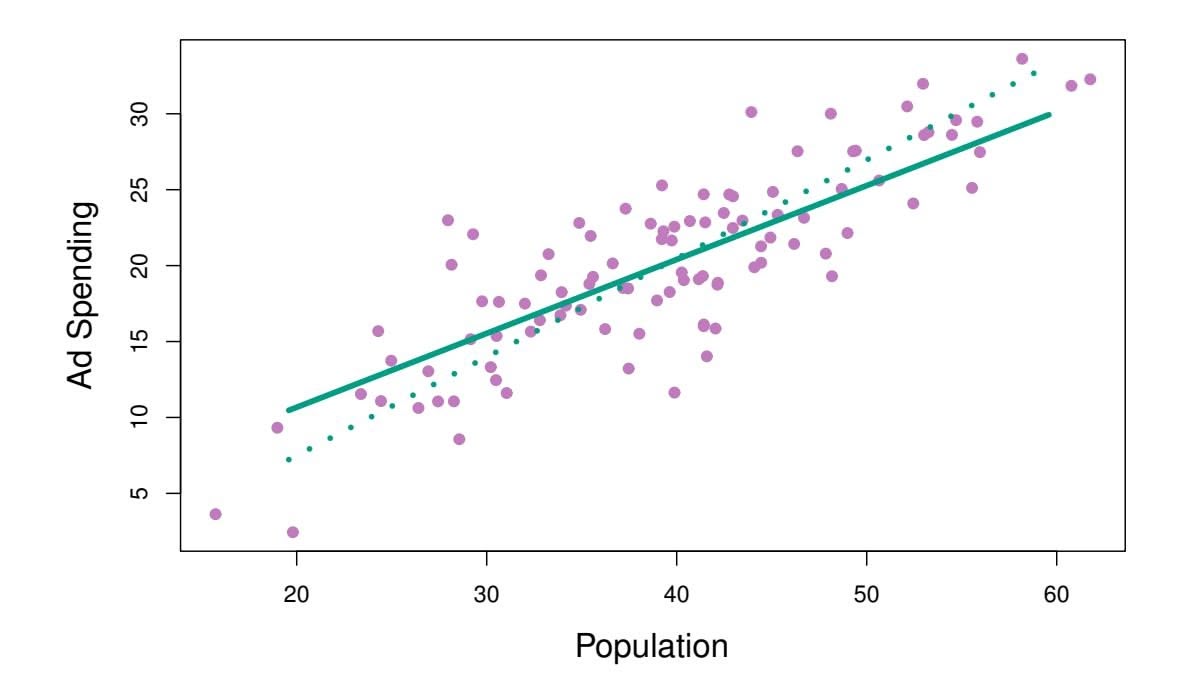

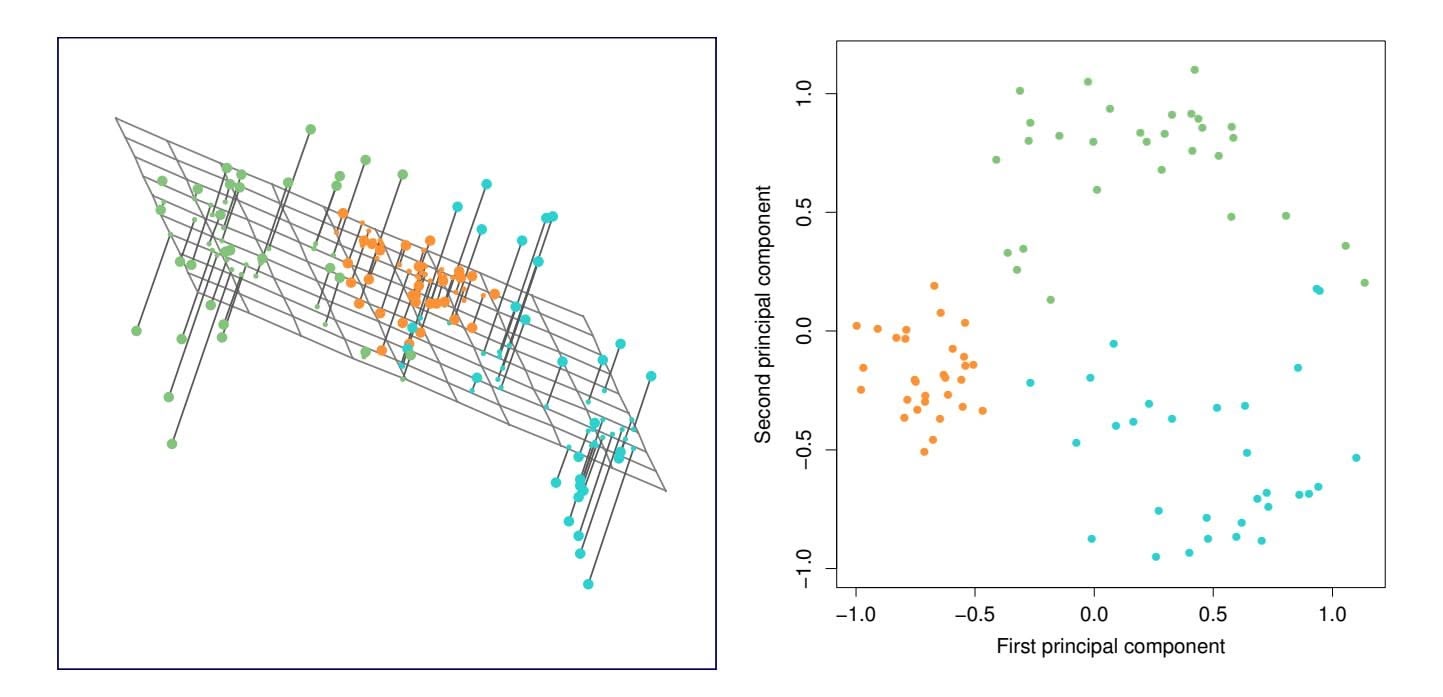

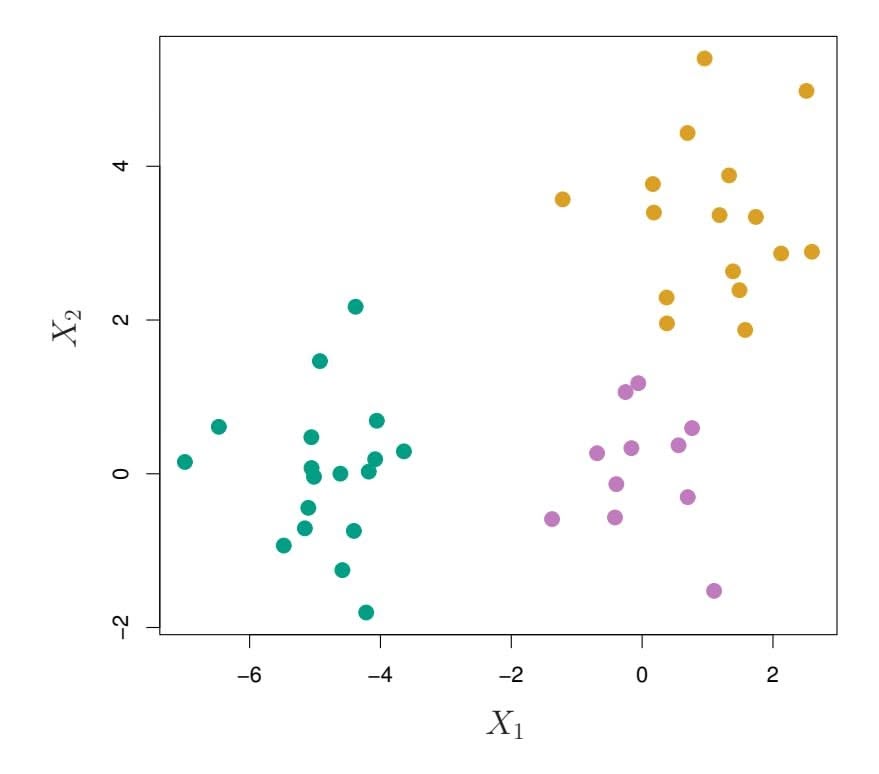

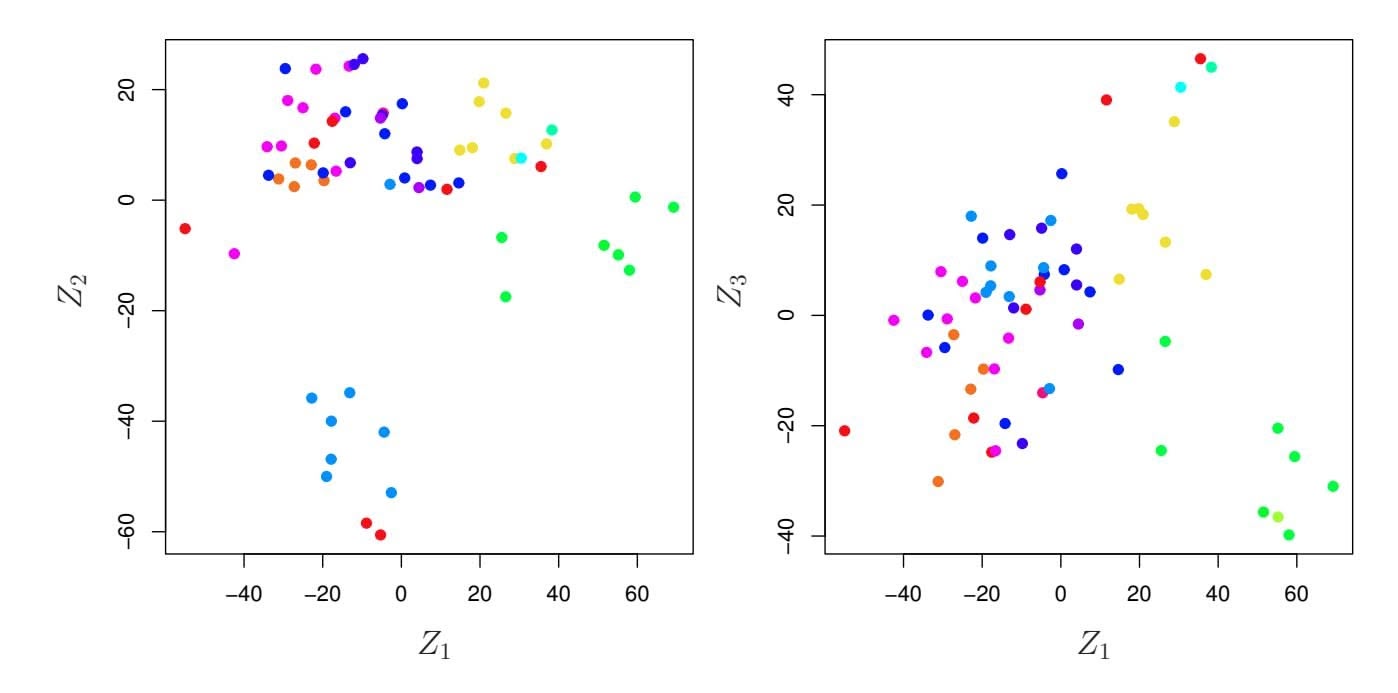

PCA is a technique for reducing the dimension of an $n \times p$ data matrix $\mathbf{X}$ . The *first principal component* direction of the data is that along which the observations *vary the most*. For instance, consider Figure 6.14, which shows population size (pop) in tens of thousands of people, and ad spending for a

# 254 6. Linear Model Selection and Regularization

particular company (ad) in thousands of dollars, for 100 cities.6 The green solid line represents the frst principal component direction of the data. We can see by eye that this is the direction along which there is the greatest variability in the data. That is, if we *projected* the 100 observations onto this line (as shown in the left-hand panel of Figure 6.15), then the resulting projected observations would have the largest possible variance; projecting the observations onto any other line would yield projected observations with lower variance. Projecting a point onto a line simply involves fnding the location on the line which is closest to the point.

The frst principal component is displayed graphically in Figure 6.14, but how can it be summarized mathematically? It is given by the formula

$$

Z_{1} = 0.839 \times (\text{pop} - \overline{\text{pop}}) + 0.544 \times (\text{ad} - \overline{\text{ad}}) \quad (6.19)

$$

Here $\phi_{11} = 0.839$ and $\phi_{21} = 0.544$ are the principal component loadings, which define the direction referred to above. In (6.19), $\overline{\text{pop}}$ indicates the mean of all pop values in this data set, and $\overline{\text{ad}}$ indicates the mean of all advertising spending. The idea is that out of every possible *linear combination* of pop and ad such that $\phi_{11}^2 + \phi_{21}^2 = 1$ , this particular linear combination yields the highest variance: i.e. this is the linear combination for which $\text{Var}(\phi_{11} \times (\text{pop} - \overline{\text{pop}}) + \phi_{21} \times (\text{ad} - \overline{\text{ad}}))$ is maximized. It is necessary to consider only linear combinations of the form $\phi_{11}^2 + \phi_{21}^2 = 1$ , since otherwise we could increase $\phi_{11}$ and $\phi_{21}$ arbitrarily in order to blow up the variance. In (6.19), the two loadings are both positive and have similar size, and so $Z_1$ is almost an *average* of the two variables.

Since *n* = 100, pop and ad are vectors of length 100, and so is *Z*1 in (6.19). For instance,

$$

z_{i1} = 0.839 \times (\text{pop}_{i} - \overline{\text{pop}}) + 0.544 \times (\text{ad}_{i} - \overline{\text{ad}}).

$$

(6.20)

The values of *z*11*,...,zn*1 are known as the *principal component scores*, and can be seen in the right-hand panel of Figure 6.15.

There is also another interpretation of PCA: the frst principal component vector defnes the line that is *as close as possible* to the data. For instance, in Figure 6.14, the frst principal component line minimizes the sum of the squared perpendicular distances between each point and the line. These distances are plotted as dashed line segments in the left-hand panel of Figure 6.15, in which the crosses represent the *projection* of each point onto the frst principal component line. The frst principal component has been chosen so that the projected observations are *as close as possible* to the original observations.

In the right-hand panel of Figure 6.15, the left-hand panel has been rotated so that the frst principal component direction coincides with the *x*-axis. It is possible to show that the *frst principal component score* for

6This dataset is distinct from the Advertising data discussed in Chapter 3.

6.3 Dimension Reduction Methods 255

**FIGURE 6.15.** *A subset of the advertising data. The mean* pop *and* ad *budgets are indicated with a blue circle.* Left: *The first principal component direction is shown in green. It is the dimension along which the data vary the most, and it also defines the line that is closest to all* $n$ *of the observations. The distances from each observation to the principal component are represented using the black dashed line segments. The blue dot represents* $(\overline{\text{pop}}, \overline{\text{ad}})$ *.* Right: *The left-hand panel has been rotated so that the first principal component direction coincides with the x-axis.*

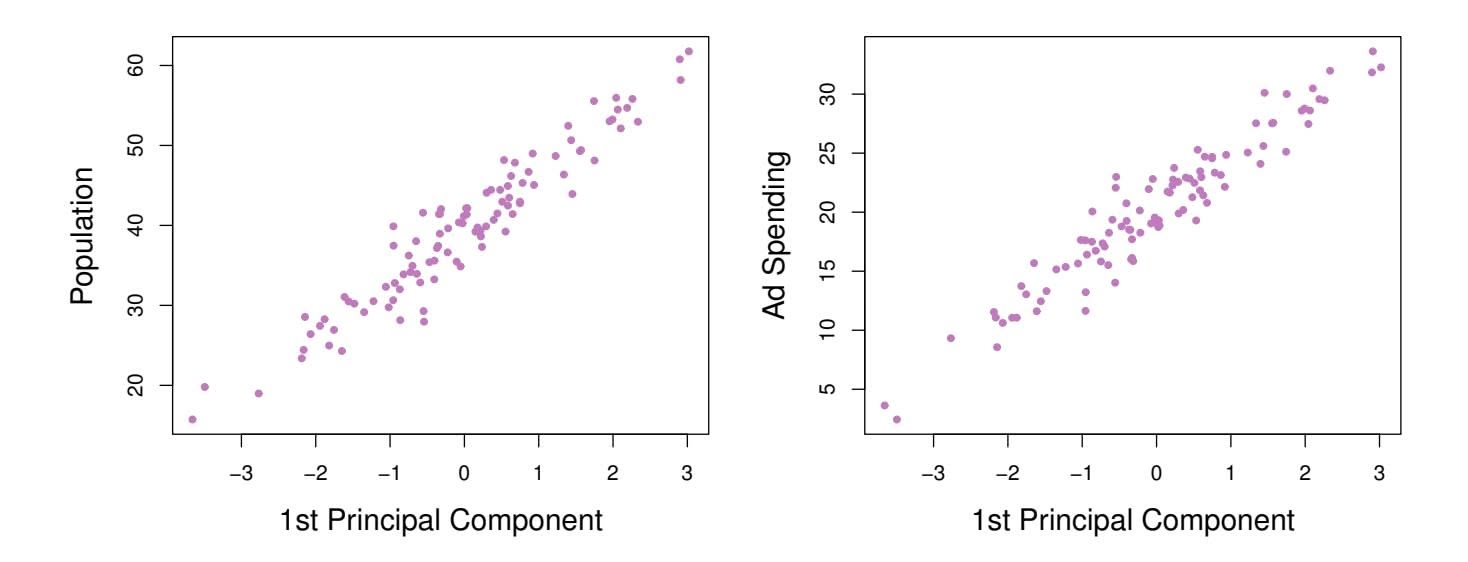

the *i*th observation, given in (6.20), is the distance in the *x*-direction of the *i*th cross from zero. So for example, the point in the bottom-left corner of the left-hand panel of Figure 6.15 has a large negative principal component score, $z_{i1} = -26.1$ , while the point in the top-right corner has a large positive score, $z_{i1} = 18.7$ . These scores can be computed directly using (6.20).We can think of the values of the principal component $Z_1$ as single-number summaries of the joint pop and ad budgets for each location. In this example, if $z_{i1} = 0.839 \times (\text{pop}_i - \overline{\text{pop}}) + 0.544 \times (\text{ad}_i - \overline{\text{ad}}) < 0$ , then this indicates a city with below-average population size and below-average ad spending. A positive score suggests the opposite. How well can a single number represent both pop and ad? In this case, Figure 6.14 indicates that pop and ad have approximately a linear relationship, and so we might expect that a single-number summary will work well. Figure 6.16 displays $z_{i1}$ versus both pop and ad.[7](#7) The plots show a strong relationship between the first principal component and the two features. In other words, the first principal component appears to capture most of the information contained in the pop and ad predictors.

So far we have concentrated on the first principal component. In general, one can construct up to $p$ distinct principal components. The second principal component $Z_2$ is a linear combination of the variables that is uncorrelated with $Z_1$ , and has largest variance subject to this constraint. The

7The principal components were calculated after first standardizing both pop and ad, a common approach. Hence, the x-axes on Figures 6.15 and 6.16 are not on the same scale.256 6. Linear Model Selection and Regularization

**FIGURE 6.16.** *Plots of the frst principal component scores zi*1 *versus* pop *and* ad*. The relationships are strong.*

second principal component direction is illustrated as a dashed blue line in Figure 6.14. It turns out that the zero correlation condition of *Z*1 with *Z*2 is equivalent to the condition that the direction must be *perpendicular*, or perpen*orthogonal*, to the frst principal component direction. The second principal dicular component is given by the formula orthogonal

perpen-

dicular

orthogonal

$$

Z_2 = 0.544 \times (pop - \overline{pop}) - 0.839 \times (ad - \overline{ad}).

$$

Since the advertising data has two predictors, the frst two principal components contain all of the information that is in pop and ad. However, by construction, the frst component will contain the most information. Consider, for example, the much larger variability of *zi*1 (the *x*-axis) versus *zi*2 (the *y*-axis) in the right-hand panel of Figure 6.15. The fact that the second principal component scores are much closer to zero indicates that this component captures far less information. As another illustration, Figure 6.17 displays *zi*2 versus pop and ad. There is little relationship between the second principal component and these two predictors, again suggesting that in this case, one only needs the frst principal component in order to accurately represent the pop and ad budgets.

With two-dimensional data, such as in our advertising example, we can construct at most two principal components. However, if we had other predictors, such as population age, income level, education, and so forth, then additional components could be constructed. They would successively maximize variance, subject to the constraint of being uncorrelated with the preceding components.

### The Principal Components Regression Approach

The *principal components regression* (PCR) approach involves construct- principal ing the frst *M* principal components, *Z*1*,...,ZM*, and then using these components as the predictors in a linear regression model that is ft using least squares. The key idea is that often a small number of principal

components regression

6.3 Dimension Reduction Methods 257

**FIGURE 6.17.** *Plots of the second principal component scores zi*2 *versus* pop *and* ad*. The relationships are weak.*

**FIGURE 6.18.** *PCR was applied to two simulated data sets. In each panel, the horizontal dashed line represents the irreducible error.* Left: *Simulated data from Figure 6.8.* Right: *Simulated data from Figure 6.9.*

components suffce to explain most of the variability in the data, as well as the relationship with the response. In other words, we assume that *the directions in which X*1*,...,Xp show the most variation are the directions that are associated with Y* . While this assumption is not guaranteed to be true, it often turns out to be a reasonable enough approximation to give good results.

If the assumption underlying PCR holds, then fitting a least squares model to $Z_1, \dots, Z_M$ will lead to better results than fitting a least squares model to $X_1, \dots, X_p$ , since most or all of the information in the data that relates to the response is contained in $Z_1, \dots, Z_M$ , and by estimating only $M \ll p$ coefficients we can mitigate overfitting. In the advertising data, the first principal component explains most of the variance in both pop and ad, so a principal component regression that uses this single variable to predict some response of interest, such as sales, will likely perform quite well.

258 6. Linear Model Selection and Regularization

**FIGURE 6.19.** *PCR, ridge regression, and the lasso were applied to a simulated data set in which the frst fve principal components of X contain all the information about the response Y . In each panel, the irreducible error* Var(ϵ) *is shown as a horizontal dashed line.* Left: *Results for PCR.* Right: *Results for lasso (solid) and ridge regression (dotted). The x-axis displays the shrinkage factor of the coeffcient estimates, defned as the* ℓ2 *norm of the shrunken coeffcient estimates divided by the* ℓ2 *norm of the least squares estimate.*

Figure 6.18 displays the PCR $fits$ on the simulated data sets from Figures 6.8 and 6.9. Recall that both data sets were generated using $n = 50$ observations and $p = 45$ predictors. However, while the response in the first data set was a function of all the predictors, the response in the second data set was generated using only two of the predictors. The curves are plotted as a function of $M$ , the number of principal components used as predictors in the regression model. As more principal components are used in the regression model, the bias decreases, but the variance increases. This results in a typical U-shape for the mean squared error. When $M = p = 45$ , then PCR amounts simply to a least squares fit using all of the original predictors. The figure indicates that performing PCR with an appropriate choice of $M$ can result in a substantial improvement over least squares, especially in the left-hand panel. However, by examining the ridge regression and lasso results in Figures 6.5, 6.8, and 6.9, we see that PCR does not perform as well as the two shrinkage methods in this example.

The relatively worse performance of PCR in Figure 6.18 is a consequence of the fact that the data were generated in such a way that many principal components are required in order to adequately model the response. In contrast, PCR will tend to do well in cases when the frst few principal components are suffcient to capture most of the variation in the predictors as well as the relationship with the response. The left-hand panel of Figure 6.19 illustrates the results from another simulated data set designed to be more favorable to PCR. Here the response was generated in such a way that it depends exclusively on the frst fve principal components. Now the

6.3 Dimension Reduction Methods 259

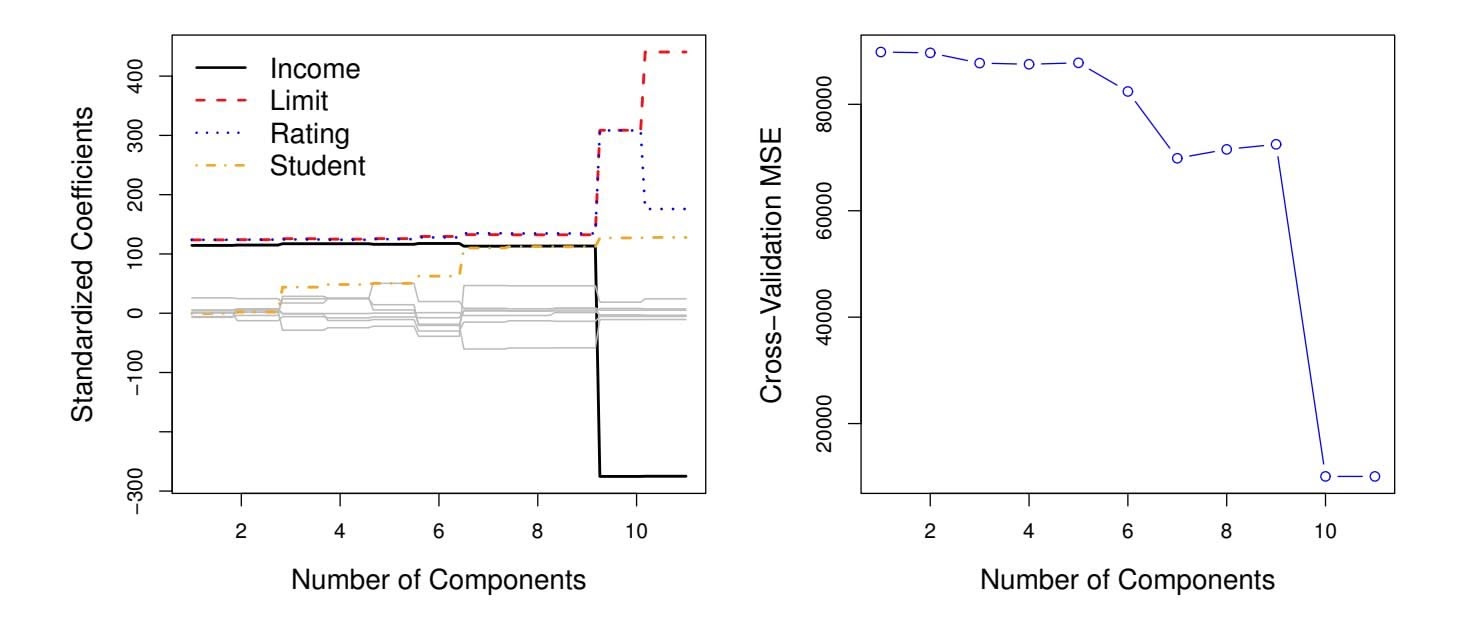

**FIGURE 6.20.** Left: *PCR standardized coeffcient estimates on the* Credit *data set for diferent values of M.* Right: *The ten-fold cross-validation* MSE *obtained using PCR, as a function of M.*

bias drops to zero rapidly as *M*, the number of principal components used in PCR, increases. The mean squared error displays a clear minimum at *M* = 5. The right-hand panel of Figure 6.19 displays the results on these data using ridge regression and the lasso. All three methods ofer a significant improvement over least squares. However, PCR and ridge regression slightly outperform the lasso.

We note that even though PCR provides a simple way to perform regression using *M1 was a linear combination of both pop and ad. Therefore, while PCR often performs quite well in many practical settings, it does not result in the development of a model that relies upon a small set of the original features. In this sense, PCR is more closely related to ridge regression than to the lasso. In fact, one can show that PCR and ridge regression are very closely related. One can even think of ridge regression as a continuous version of PCR!8

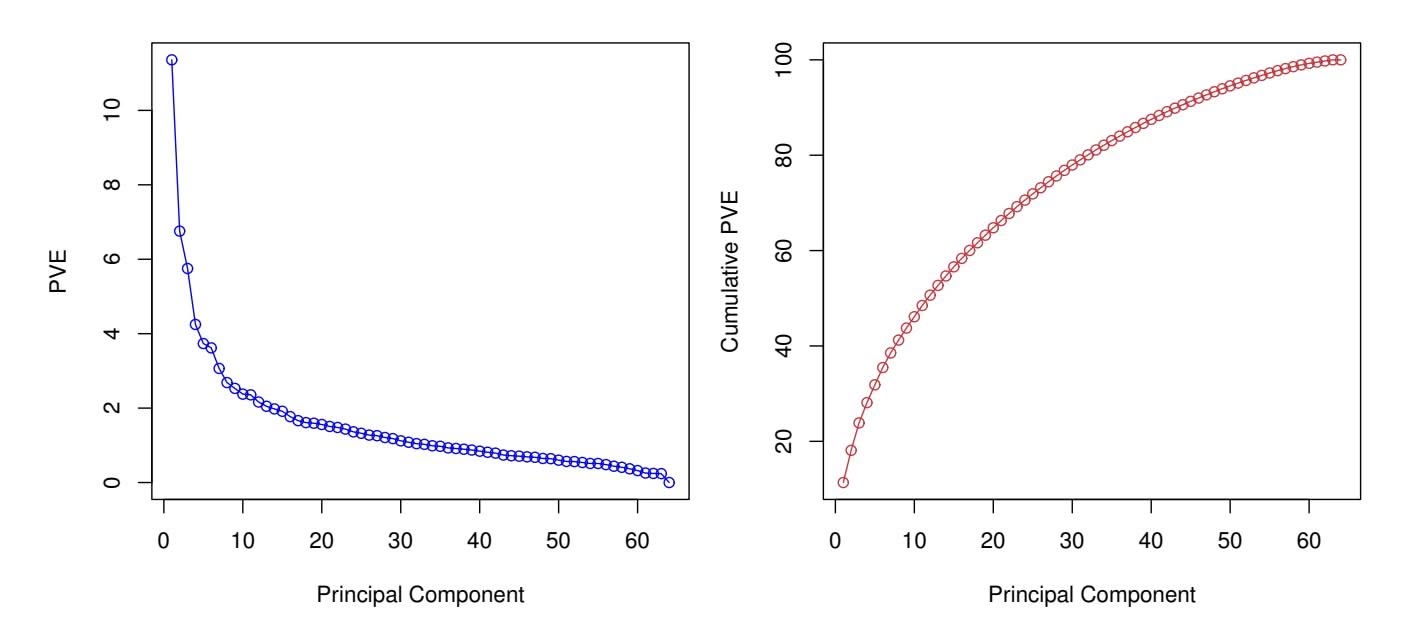

In PCR, the number of principal components, *M*, is typically chosen by cross-validation. The results of applying PCR to the Credit data set are shown in Figure 6.20; the right-hand panel displays the cross-validation errors obtained, as a function of *M*. On these data, the lowest cross-validation error occurs when there are *M* = 10 components; this corresponds to almost no dimension reduction at all, since PCR with *M* = 11 is equivalent to simply performing least squares.

8More details can be found in Section 3.5 of *The Elements of Statistical Learning* by Hastie, Tibshirani, and Friedman.

260 6. Linear Model Selection and Regularization

**FIGURE 6.21.** *For the advertising data, the frst PLS direction (solid line) and frst PCR direction (dotted line) are shown.*

When performing PCR, we generally recommend *standardizing* each predictor, using (6.6), prior to generating the principal components. This standardization ensures that all variables are on the same scale. In the absence of standardization, the high-variance variables will tend to play a larger role in the principal components obtained, and the scale on which the variables are measured will ultimately have an efect on the fnal PCR model. However, if the variables are all measured in the same units (say, kilograms, or inches), then one might choose not to standardize them.

# *6.3.2 Partial Least Squares*

The PCR approach that we just described involves identifying linear combinations, or *directions*, that best represent the predictors *X*1*,...,Xp*. These directions are identifed in an *unsupervised* way, since the response *Y* is not used to help determine the principal component directions. That is, the response does not *supervise* the identifcation of the principal components. Consequently, PCR sufers from a drawback: there is no guarantee that the directions that best explain the predictors will also be the best directions to use for predicting the response. Unsupervised methods are discussed further in Chapter 12.

We now present *partial least squares* (PLS), a *supervised* alternative to partial least squares PCR. Like PCR, PLS is a dimension reduction method, which frst identifes a new set of features *Z*1*,...,ZM* that are linear combinations of the original features, and then fts a linear model via least squares using these *M* new features. But unlike PCR, PLS identifes these new features in a supervised way—that is, it makes use of the response *Y* in order to identify new features that not only approximate the old features well, but also that *are*

partial least

squares

6.4 Considerations in High Dimensions 261

*related to the response*. Roughly speaking, the PLS approach attempts to fnd directions that help explain both the response and the predictors.

We now describe how the first PLS direction is computed. After standardizing the $p$ predictors, PLS computes the first direction $Z_1$ by setting each $\phi_{j1}$ in (6.16) equal to the coefficient from the simple linear regression of $Y$ onto $X_j$ . One can show that this coefficient is proportional to the correlation between $Y$ and $X_j$ . Hence, in computing $Z_1 = \sum_{j=1}^p \phi_{j1}X_j$ , PLS places the highest weight on the variables that are most strongly related to the response.

Figure 6.21 displays an example of PLS on a synthetic dataset with Sales in each of 100 regions as the response, and two predictors; Population Size and Advertising Spending. The solid green line indicates the frst PLS direction, while the dotted line shows the frst principal component direction. PLS has chosen a direction that has less change in the ad dimension per unit change in the pop dimension, relative to PCA. This suggests that pop is more highly correlated with the response than is ad. The PLS direction does not ft the predictors as closely as does PCA, but it does a better job explaining the response.

To identify the second PLS direction we first *adjust* each of the variables for $Z_1$ , by regressing each variable on $Z_1$ and taking *residuals*. These residuals can be interpreted as the remaining information that has not been explained by the first PLS direction. We then compute $Z_2$ using this *orthogonalized* data in exactly the same fashion as $Z_1$ was computed based on the original data. This iterative approach can be repeated $M$ times to identify multiple PLS components $Z_1, \dots, Z_M$ . Finally, at the end of this procedure, we use least squares to fit a linear model to predict $Y$ using $Z_1, \dots, Z_M$ in exactly the same fashion as for PCR.

As with PCR, the number *M* of partial least squares directions used in PLS is a tuning parameter that is typically chosen by cross-validation. We generally standardize the predictors and response before performing PLS.

PLS is popular in the feld of chemometrics, where many variables arise from digitized spectrometry signals. In practice it often performs no better than ridge regression or PCR. While the supervised dimension reduction of PLS can reduce bias, it also has the potential to increase variance, so that the overall beneft of PLS relative to PCR is a wash.

# 6.4 Considerations in High Dimensions

# *6.4.1 High-Dimensional Data*

Most traditional statistical techniques for regression and classifcation are intended for the *low-dimensional* setting in which *n*, the number of ob- lowdimensional servations, is much greater than *p*, the number of features. This is due in part to the fact that throughout most of the feld's history, the bulk of sci-

low-

dimensiona

262 6. Linear Model Selection and Regularization

entifc problems requiring the use of statistics have been low-dimensional. For instance, consider the task of developing a model to predict a patient's blood pressure on the basis of his or her age, sex, and body mass index (BMI). There are three predictors, or four if an intercept is included in the model, and perhaps several thousand patients for whom blood pressure and age, sex, and BMI are available. Hence *n* ≫ *p*, and so the problem is low-dimensional. (By dimension here we are referring to the size of *p*.)

In the past 20 years, new technologies have changed the way that data are collected in felds as diverse as fnance, marketing, and medicine. It is now commonplace to collect an almost unlimited number of feature measurements (*p* very large). While *p* can be extremely large, the number of observations *n* is often limited due to cost, sample availability, or other considerations. Two examples are as follows:

- 1. Rather than predicting blood pressure on the basis of just age, sex, and BMI, one might also collect measurements for half a million *single nucleotide polymorphisms* (SNPs; these are individual DNA mutations that are relatively common in the population) for inclusion in the predictive model. Then *n* ≈ 200 and *p* ≈ 500*,*000.

- 2. A marketing analyst interested in understanding people's online shopping patterns could treat as features all of the search terms entered by users of a search engine. This is sometimes known as the "bag-ofwords" model. The same researcher might have access to the search histories of only a few hundred or a few thousand search engine users who have consented to share their information with the researcher. For a given user, each of the *p* search terms is scored present (0) or absent (1), creating a large binary feature vector. Then *n* ≈ 1*,*000 and *p* is much larger.

Data sets containing more features than observations are often referred to as *high-dimensional*. Classical approaches such as least squares linear highdimensional regression are not appropriate in this setting. Many of the issues that arise in the analysis of high-dimensional data were discussed earlier in this book, since they apply also when *n>p*: these include the role of the bias-variance trade-of and the danger of overftting. Though these issues are always relevant, they can become particularly important when the number of features is very large relative to the number of observations.

We have defned the *high-dimensional setting* as the case where the number of features *p* is larger than the number of observations *n*. But the considerations that we will now discuss certainly also apply if *p* is slightly smaller than *n*, and are best always kept in mind when performing supervised learning.

high-dimensional

6.4 Considerations in High Dimensions 263

# *6.4.2 What Goes Wrong in High Dimensions?*

In order to illustrate the need for extra care and specialized techniques for regression and classifcation when *p>n*, we begin by examining what can go wrong if we apply a statistical technique not intended for the highdimensional setting. For this purpose, we examine least squares regression. But the same concepts apply to logistic regression, linear discriminant analysis, and other classical statistical approaches.

When the number of features *p* is as large as, or larger than, the number of observations *n*, least squares as described in Chapter 3 cannot (or rather, *should not*) be performed. The reason is simple: regardless of whether or not there truly is a relationship between the features and the response, least squares will yield a set of coeffcient estimates that result in a perfect ft to the data, such that the residuals are zero.

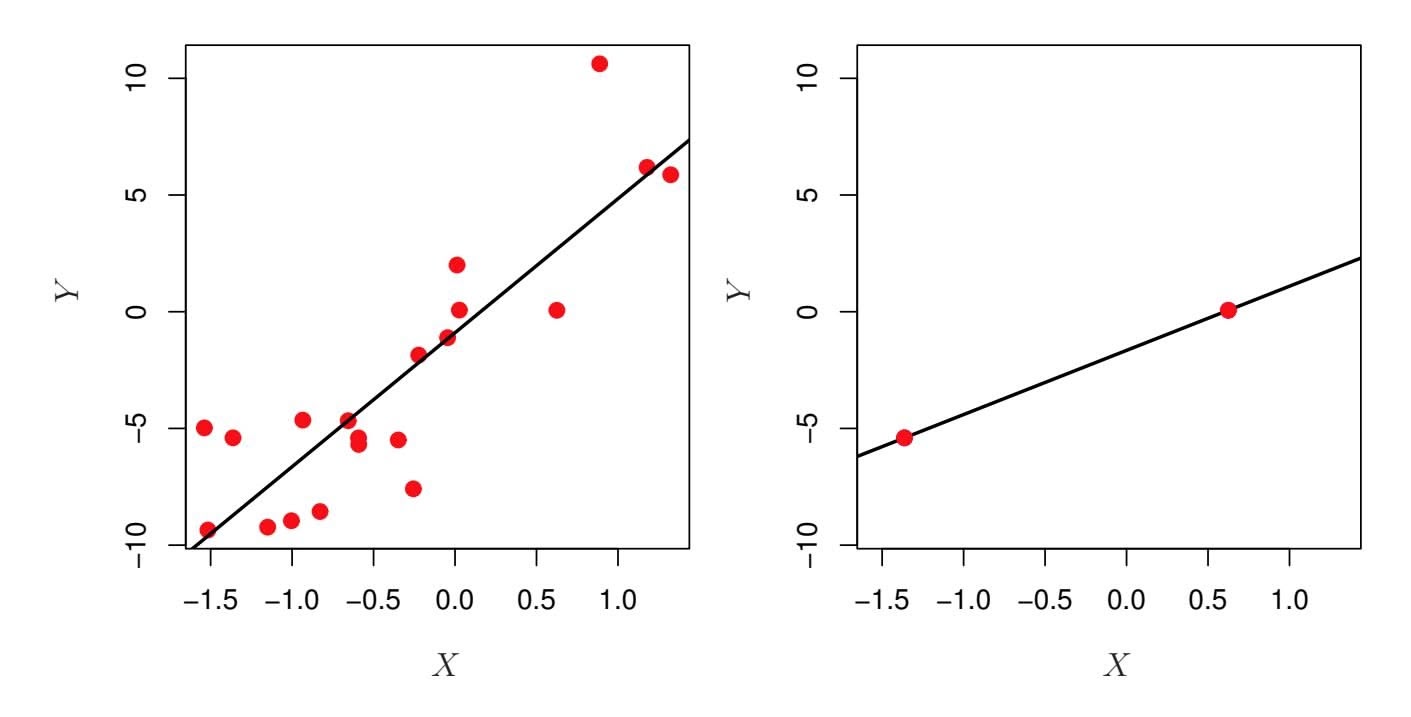

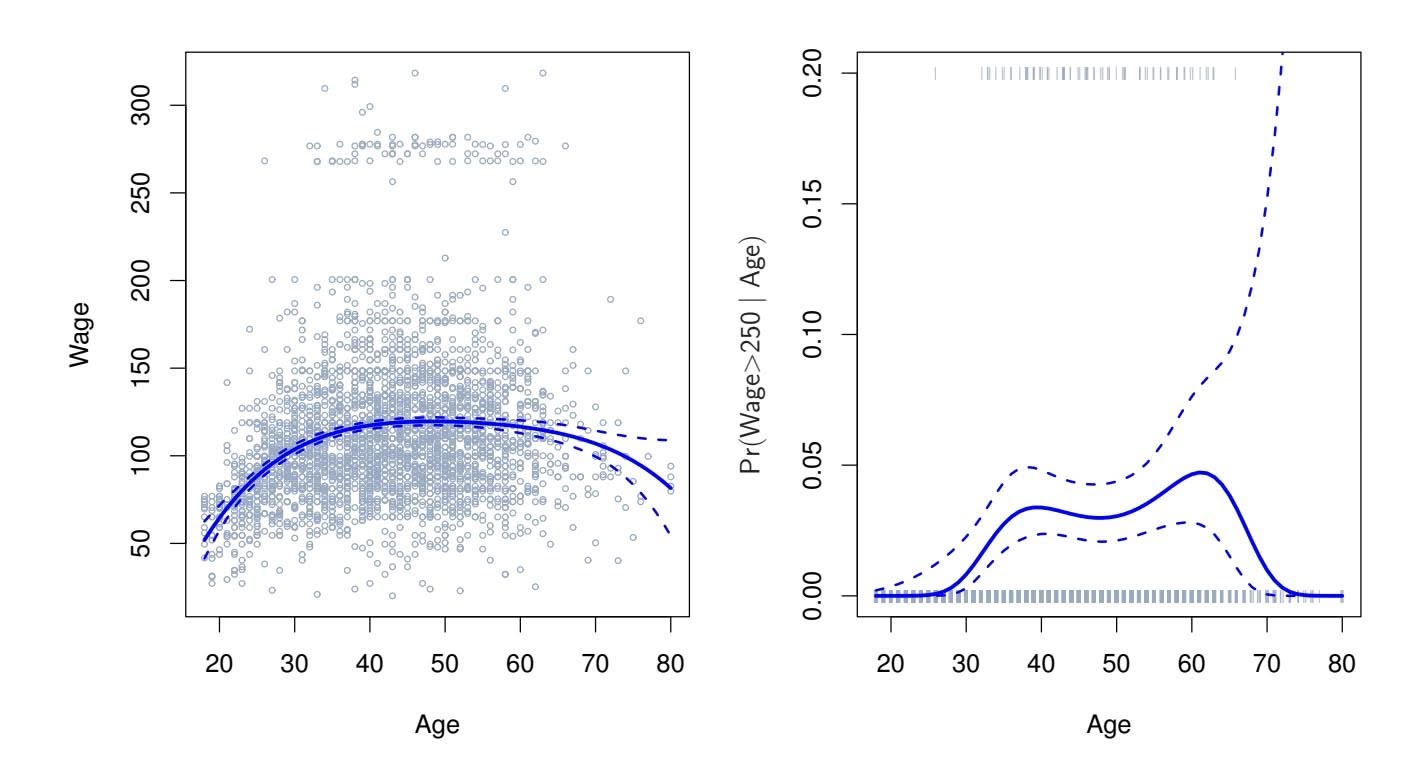

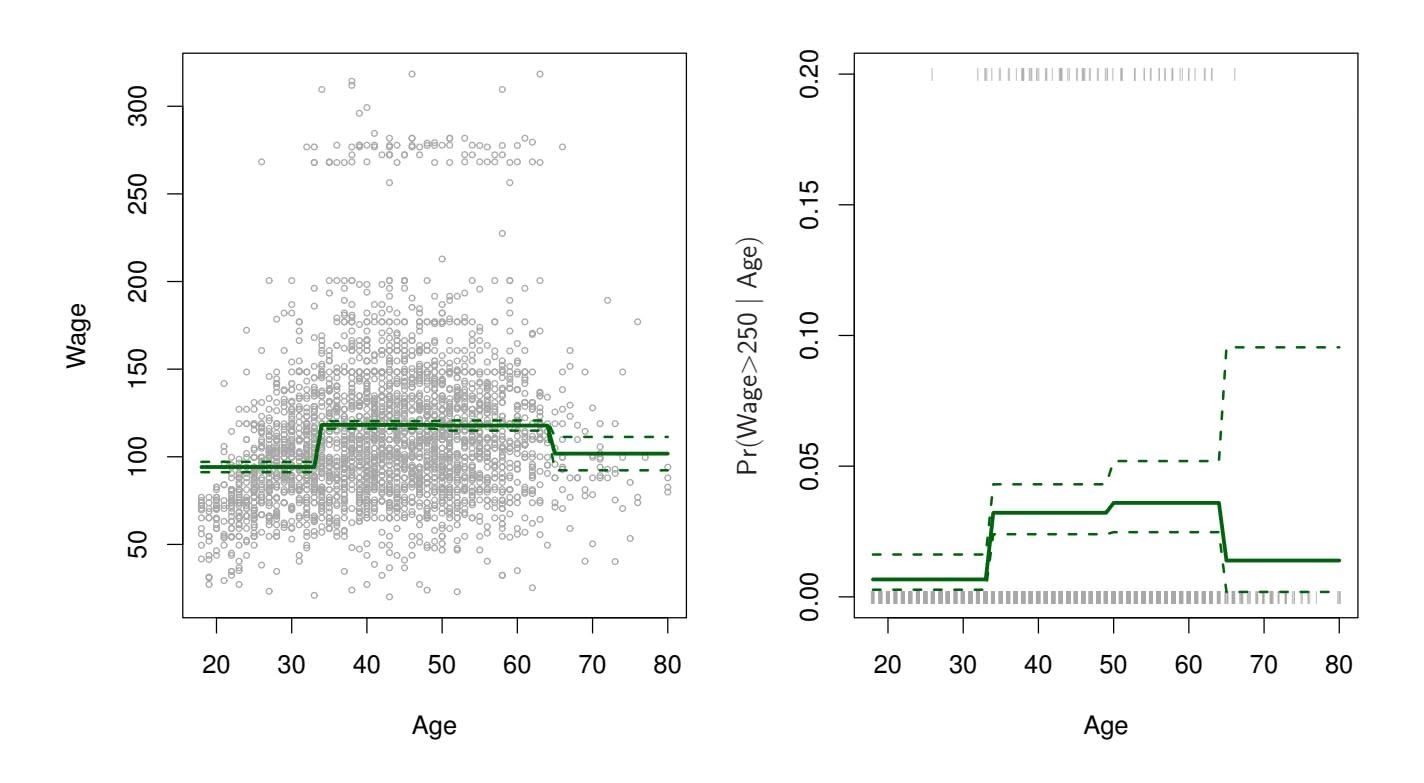

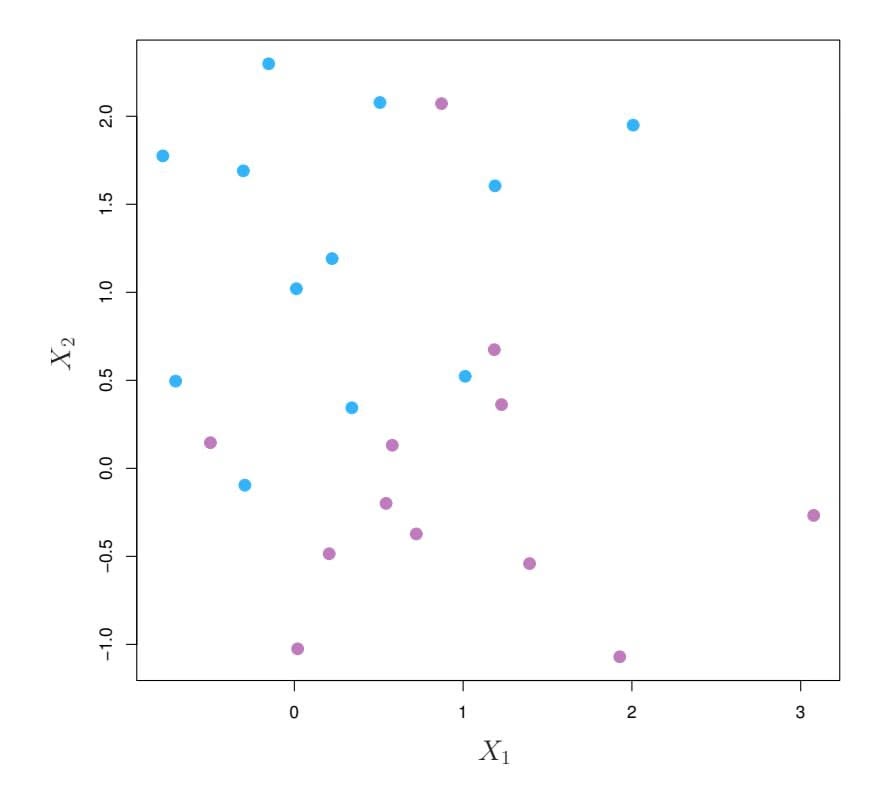

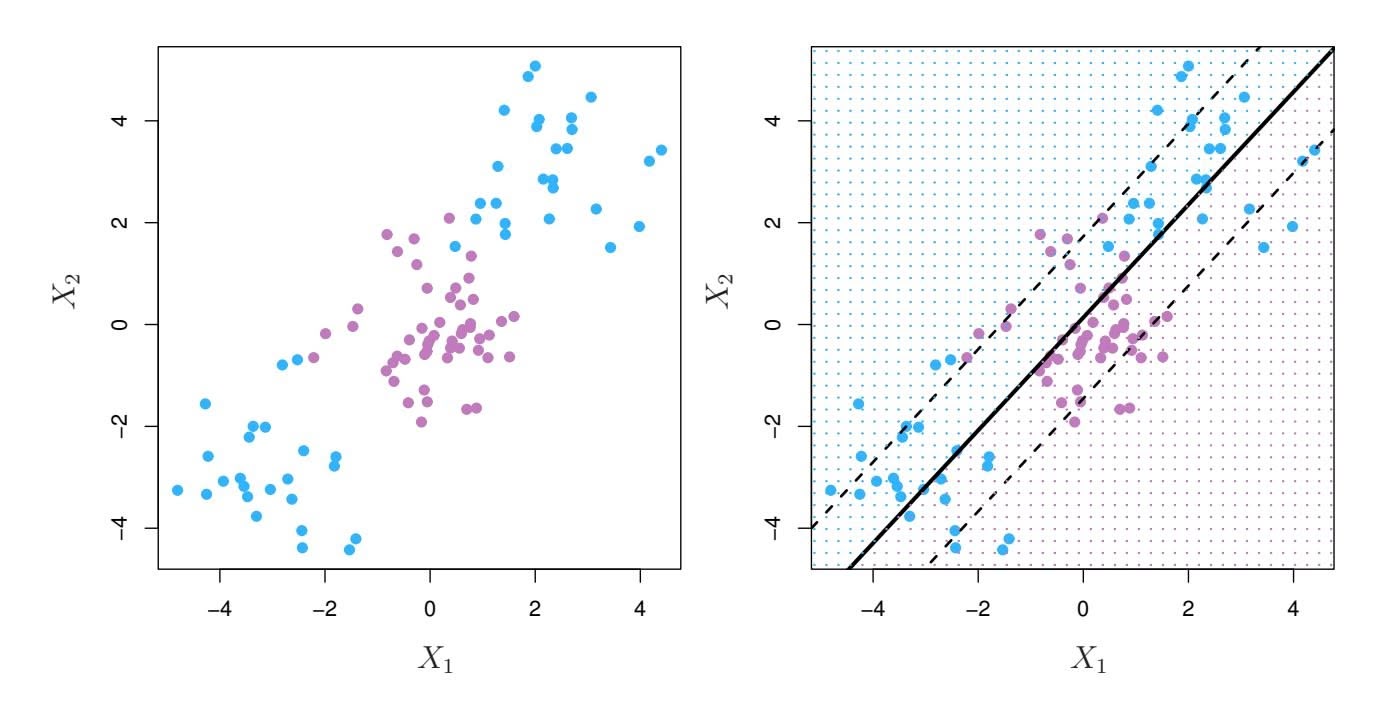

An example is shown in Figure 6.22 with $p = 1$ feature (plus an intercept) in two cases: when there are 20 observations, and when there are only two observations. When there are 20 observations, $n > p$ and the least squares regression line does not perfectly fit the data; instead, the regression line seeks to approximate the 20 observations as well as possible. On the other hand, when there are only two observations, then regardless of the values of those observations, the regression line will fit the data exactly. This is problematic because this perfect fit will almost certainly lead to overfitting of the data. In other words, though it is possible to perfectly fit the training data in the high-dimensional setting, the resulting linear model will perform extremely poorly on an independent test set, and therefore does not constitute a useful model. In fact, we can see that this happened in Figure 6.22: the least squares line obtained in the right-hand panel will perform very poorly on a test set comprised of the observations in the left-hand panel. The problem is simple: when $p > n$ or $p \approx n$ , a simple least squares regression line is too *flexible* and hence overfits the data.

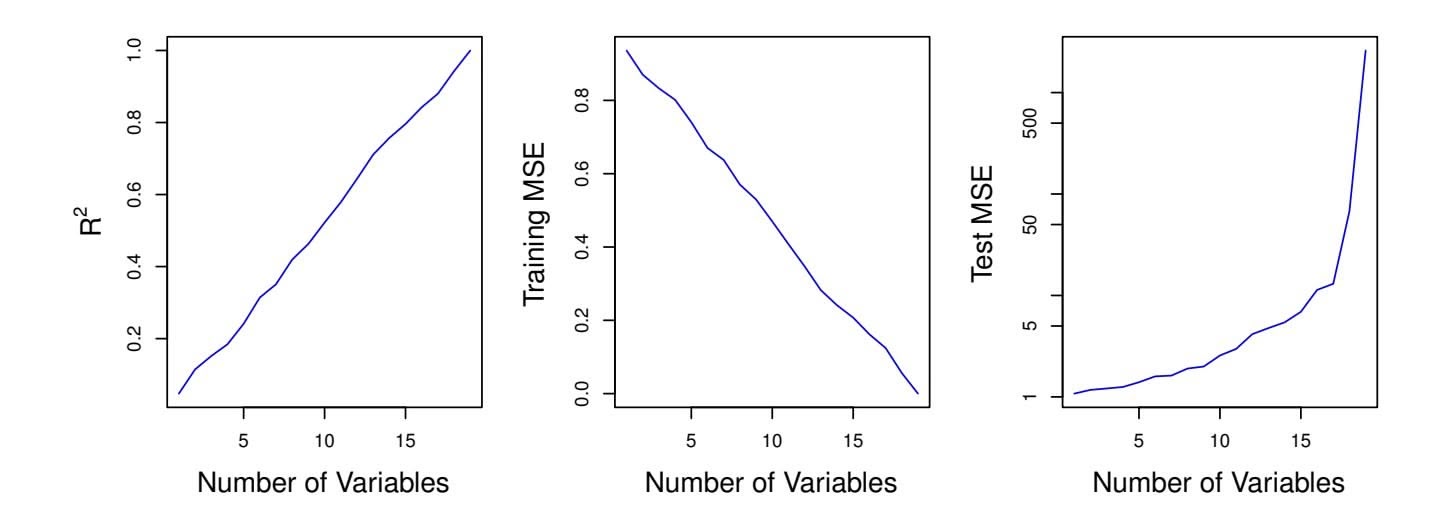

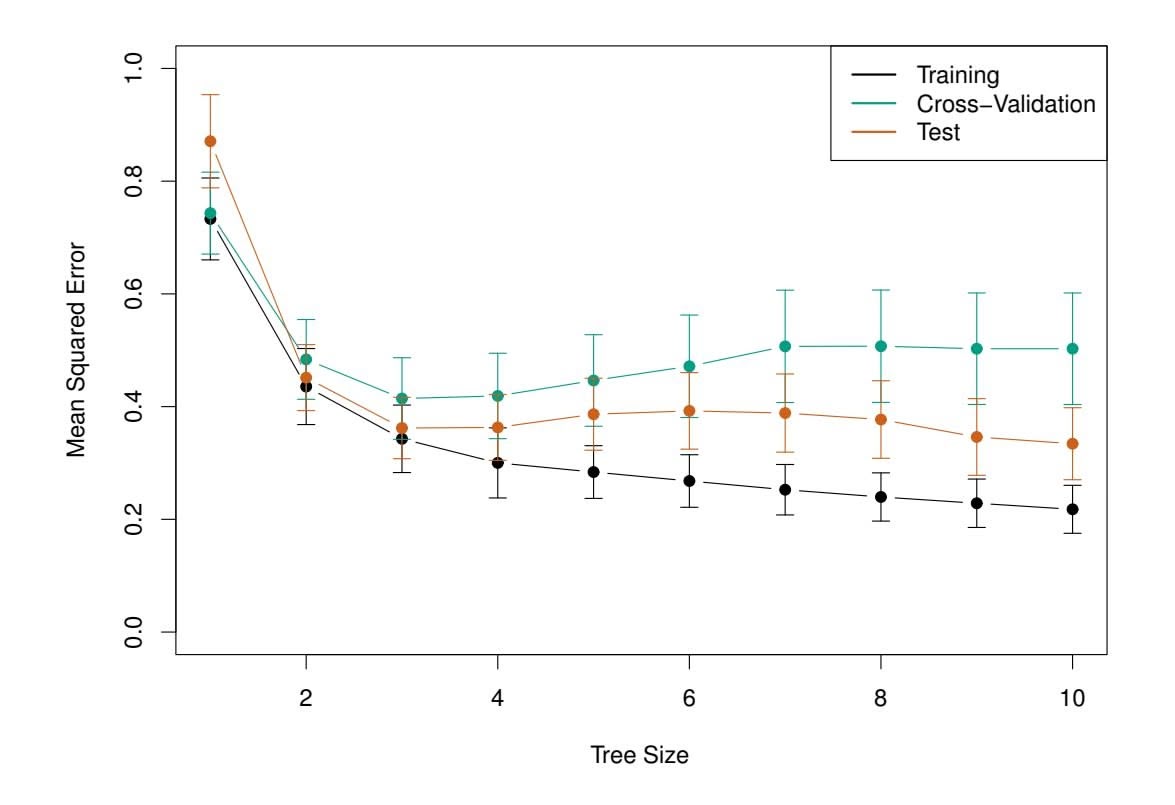

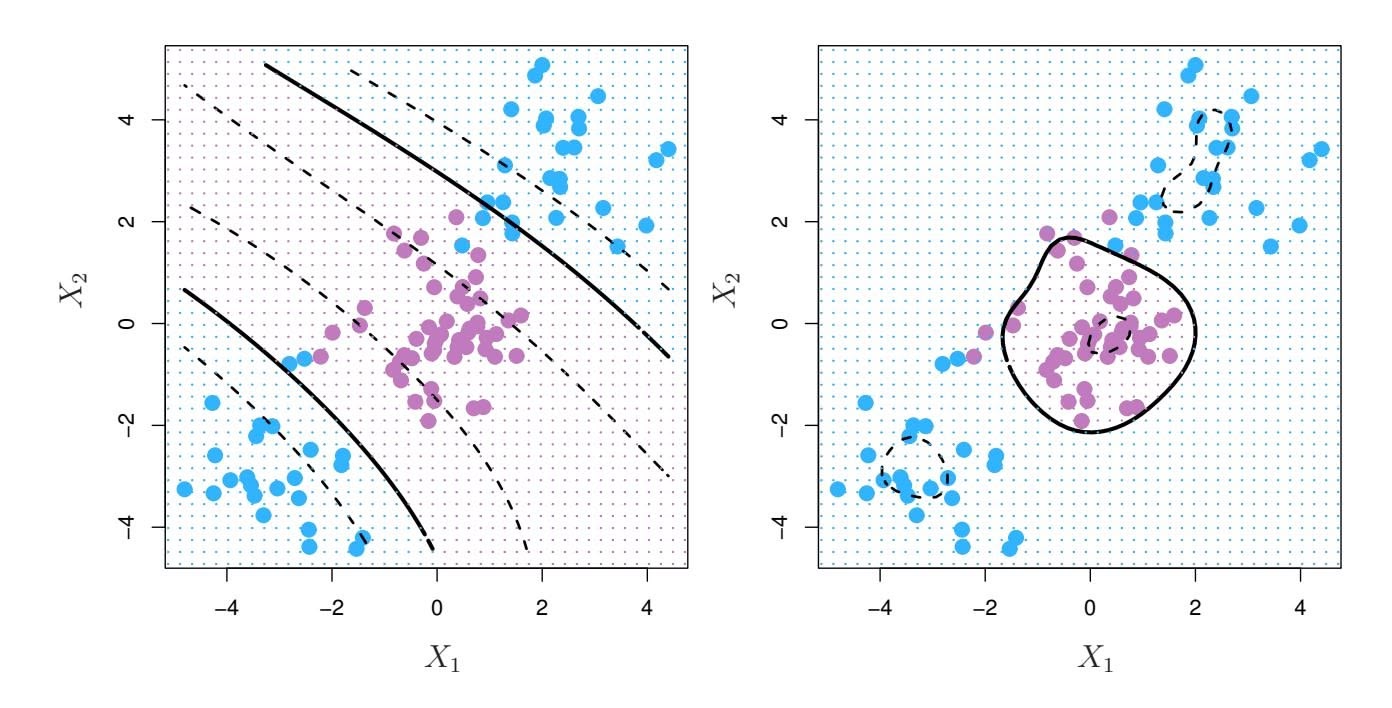

Figure 6.23 further illustrates the risk of carelessly applying least squares when the number of features *p* is large. Data were simulated with *n* = 20 observations, and regression was performed with between 1 and 20 features, each of which was completely unrelated to the response. As shown in the fgure, the model *R*2 increases to 1 as the number of features included in the model increases, and correspondingly the training set MSE decreases to 0 as the number of features increases, *even though the features are completely unrelated to the response*. On the other hand, the MSE on an *independent test set* becomes extremely large as the number of features included in the model increases, because including the additional predictors leads to a vast increase in the variance of the coeffcient estimates. Looking at the test set MSE, it is clear that the best model contains at most a few variables. However, someone who carelessly examines only the *R*2 or the training set MSE might erroneously conclude that the model with the greatest number of variables is best. This indicates the importance of applying extra care

264 6. Linear Model Selection and Regularization

**FIGURE 6.22.** Left: *Least squares regression in the low-dimensional setting.* Right: *Least squares regression with n* = 2 *observations and two parameters to be estimated (an intercept and a coeffcient).*

when analyzing data sets with a large number of variables, and of always evaluating model performance on an independent test set.

In Section 6.1.3, we saw a number of approaches for adjusting the training set RSS or $R^2$ in order to account for the number of variables used to fit a least squares model. Unfortunately, the $C_p$ , AIC, and BIC approaches are not appropriate in the high-dimensional setting, because estimating $\hat{\sigma}^2$ is problematic. (For instance, the formula for $\hat{\sigma}^2$ from Chapter 3 yields an estimate $\hat{\sigma}^2 = 0$ in this setting.) Similarly, problems arise in the application of adjusted $R^2$ in the high-dimensional setting, since one can easily obtain a model with an adjusted $R^2$ value of 1. Clearly, alternative approaches that are better-suited to the high-dimensional setting are required.

# *6.4.3 Regression in High Dimensions*

It turns out that many of the methods seen in this chapter for ftting *less fexible* least squares models, such as forward stepwise selection, ridge regression, the lasso, and principal components regression, are particularly useful for performing regression in the high-dimensional setting. Essentially, these approaches avoid overftting by using a less fexible ftting approach than least squares.

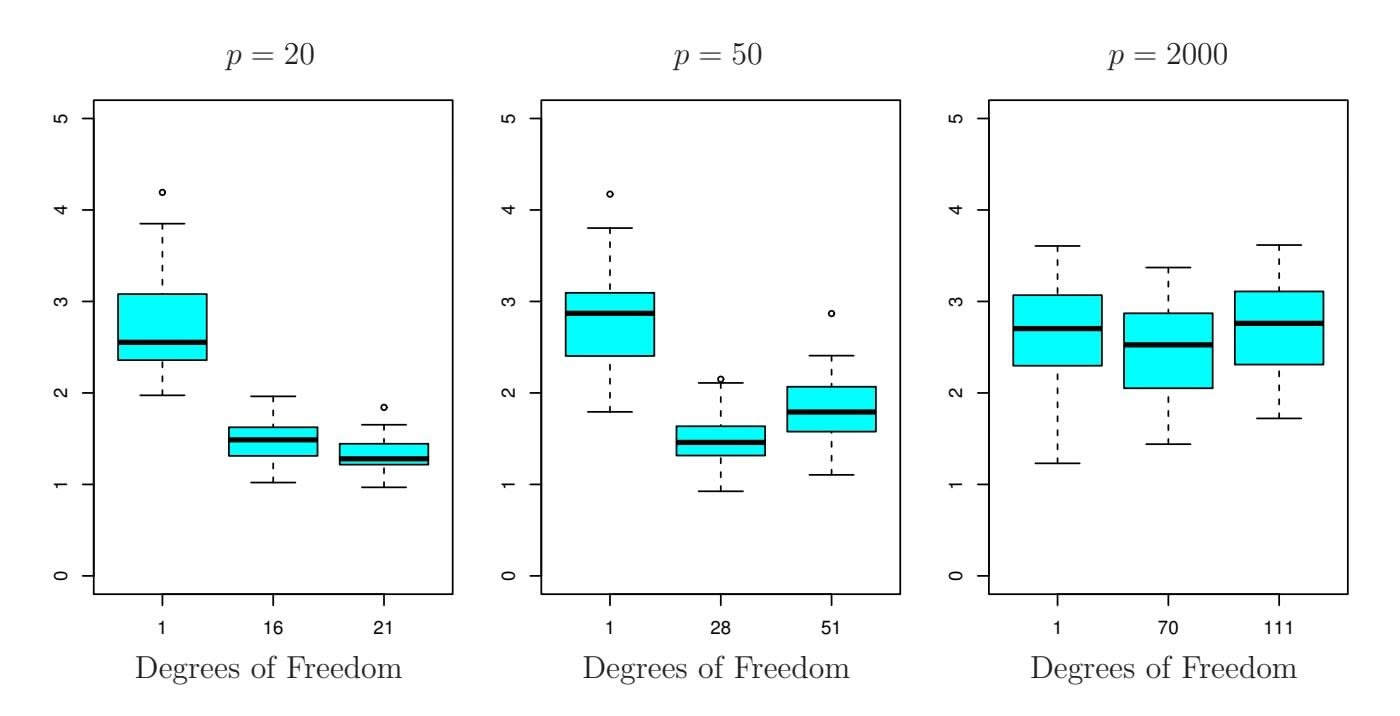

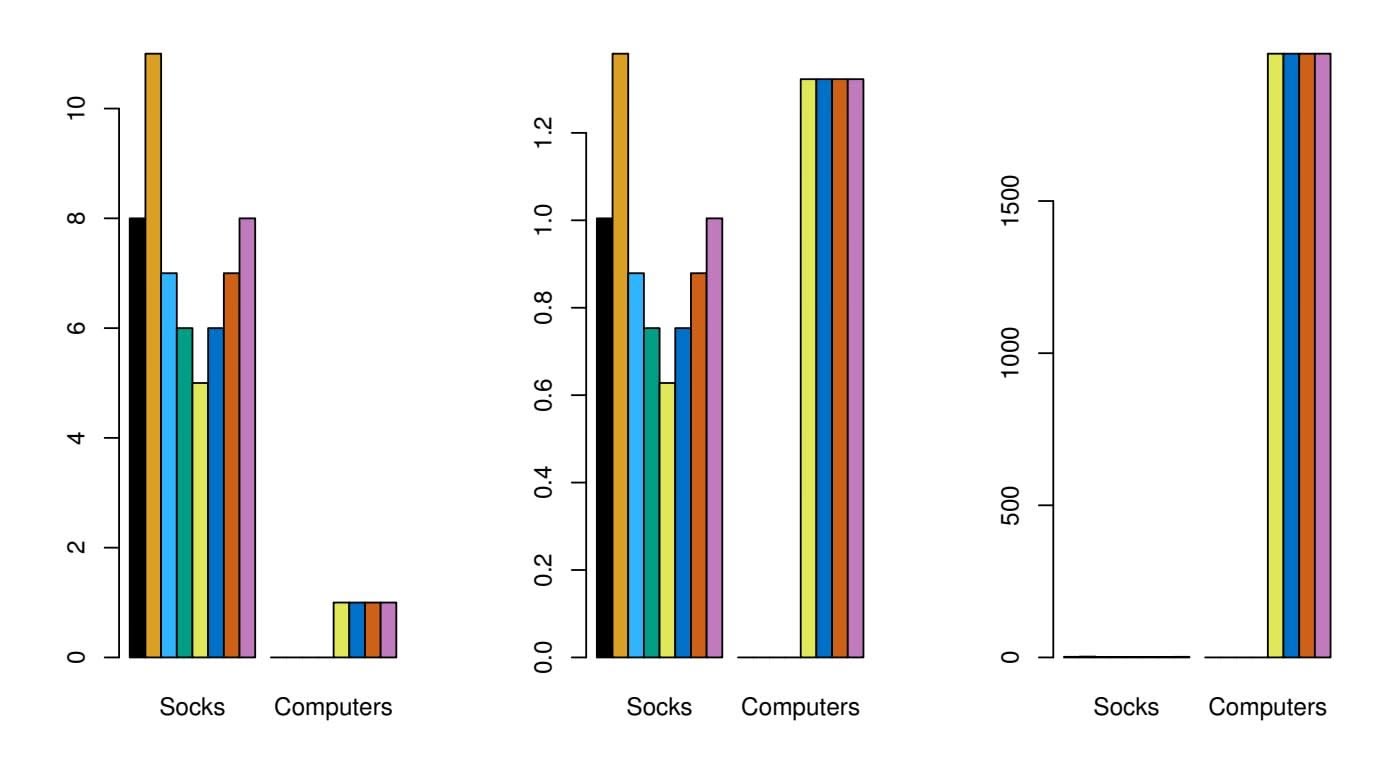

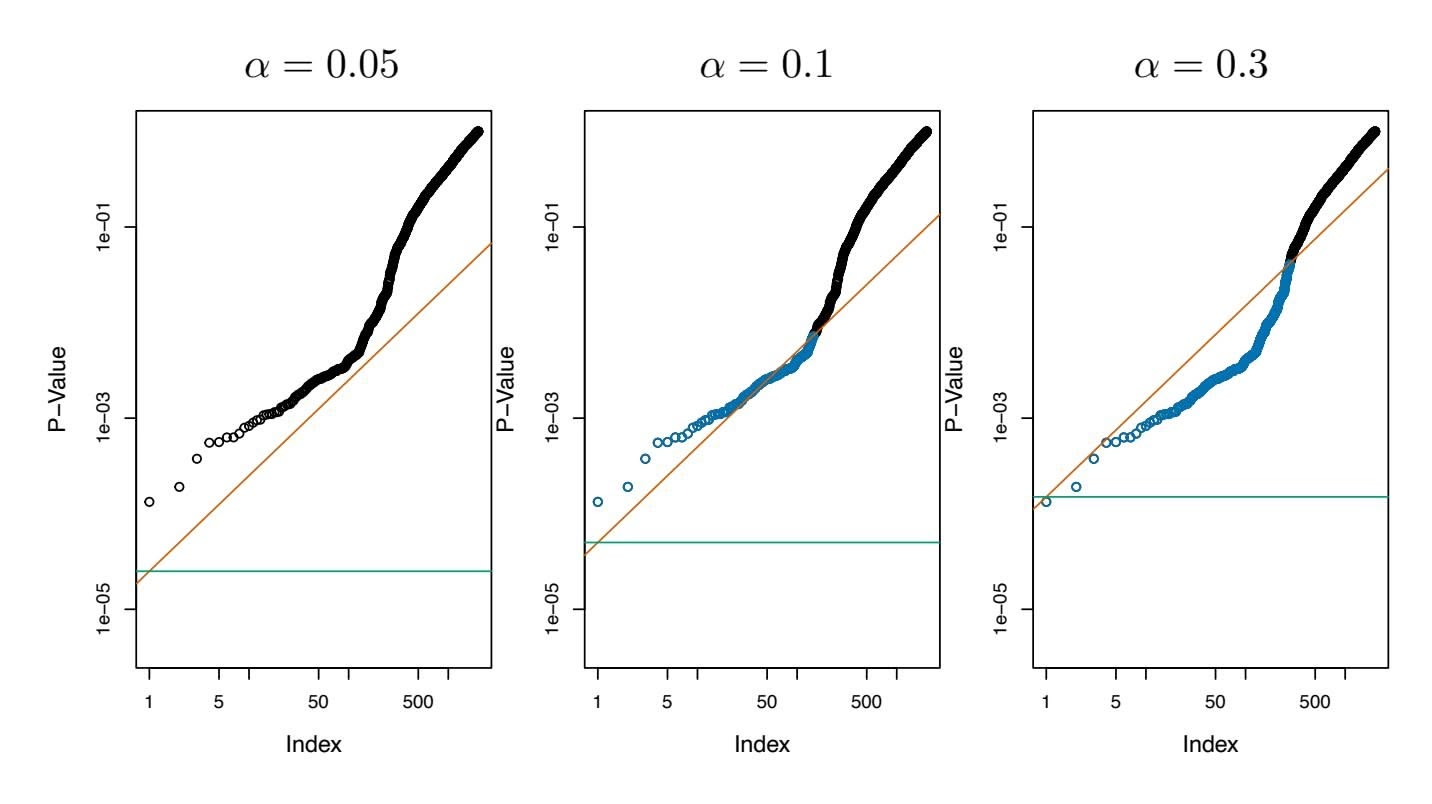

Figure 6.24 illustrates the performance of the lasso in a simple simulated example. There are $p = 20$ , $50$ , or $2,000$ features, of which 20 are truly associated with the outcome. The lasso was performed on $n = 100$ training observations, and the mean squared error was evaluated on an independent test set. As the number of features increases, the test set error increases. When $p = 20$ , the lowest validation set error was achieved when $λ$ in (6.7) was small; however, when $p$ was larger than the lowest validation

6.4 Considerations in High Dimensions 265

**FIGURE 6.23.** *On a simulated example with n* = 20 *training observations, features that are completely unrelated to the outcome are added to the model.* Left: *The R*2 *increases to 1 as more features are included.* Center: *The training set MSE decreases to 0 as more features are included.* Right: *The test set MSE increases as more features are included.*

set error was achieved using a larger value of λ. In each boxplot, rather than reporting the values of λ used, the *degrees of freedom* of the resulting lasso solution is displayed; this is simply the number of non-zero coeffcient estimates in the lasso solution, and is a measure of the fexibility of the lasso ft. Figure 6.24 highlights three important points: (1) regularization or shrinkage plays a key role in high-dimensional problems, (2) appropriate tuning parameter selection is crucial for good predictive performance, and (3) the test error tends to increase as the dimensionality of the problem (i.e. the number of features or predictors) increases, unless the additional features are truly associated with the response.

The third point above is in fact a key principle in the analysis of highdimensional data, which is known as the *curse of dimensionality*. One might curse of dimensionality think that as the number of features used to ft a model increases, the quality of the ftted model will increase as well. However, comparing the left-hand and right-hand panels in Figure 6.24, we see that this is not necessarily the case: in this example, the test set MSE almost doubles as *p* increases from 20 to 2,000. In general, *adding additional signal features that are truly associated with the response will improve the ftted model*, in the sense of leading to a reduction in test set error. However, adding noise features that are not truly associated with the response will lead to a deterioration in the ftted model, and consequently an increased test set error. This is because noise features increase the dimensionality of the problem, exacerbating the risk of overftting (since noise features may be assigned nonzero coeffcients due to chance associations with the response on the training set) without any potential upside in terms of improved test set error. Thus, we see that new technologies that allow for the collection of measurements for thousands or millions of features are a double-edged sword: they can lead to improved predictive models if these features are in

curse of dimensionality

266 6. Linear Model Selection and Regularization